How China Hopes to Build AGI Through Self-Improvement

Without looking like it

Today’s guest post is from Zilan Qian, a programme associate at the Oxford China Policy Lab and a Seasonal Fellow at GovAI.

“Thank god right now the PRC… doesn’t strike me as being that AGI-pilled. But if they get AGI-pilled… Especially, you know, the later you are to a thing, the higher the cost you have to pay. Dangerous outcomes are very possible.”

— Dean W. Ball, 80,000 Hours podcast, Dec 2025

“Encourage technological innovation in multimodal AI, agentic AI, embodied AI, swarm intelligence, and related fields, and explore pathways toward the development of Artificial General Intelligence (通用人工智能). Promote the parallel advancement of general-purpose large models (通用大模型) and industry-specific models, leveraging high-value application scenarios to drive model deployment and iterative improvement.”

— China’s 15th Five-Year Plan, March 2026

Many people tracking the US-China AI competition used to share a “thank god” instinct. Reading high-level AI policy or watching Chinese big tech fiercely compete for markets, they concluded that China mainly saw AI as a powerful economic engine, rather than an unprecedented, civilization-altering technology for humanity. And for many, this was a blessing: it bought time for the US to press its frontier advantage, or for AI safety to catch up with AI’s accelerating risks.

However, that reading is becoming increasingly hard to sustain. While in 2017 the term “通用人工智能” used by Beijing could safely be interpreted as general-purpose AI rather than AGI, the same cannot be asserted now that the term has resurfaced in 2026. The Five-Year Plan quote explicitly distinguishes AGI from general-purpose large models, treating them as separate tracks. What’s more, like their Silicon Valley counterparts, more and more AI scientists in China see AI self-improvement as a promising pathway to AGI.

Yet Chinese scientists’ vision of AGI and self-improvement looks quite different from that of Silicon Valley. Rather than a rapid software-driven intelligence explosion (AI building AI in a recursive loop), Chinese thinking converges on something more embodied: human-level intelligence that requires physical-world interaction. In contrast to a top-down Manhattan Project, this vision of AGI appears to be a bottom-up movement driven by compute constraints, one that is gradually gaining influence in Beijing’s top policy circles.

The differences in how AGI is perceived produce two distortions. On the one hand, when Beijing eventually decides to “race” towards AGI rather than “explore” it, it will not rush to build the software machine god that the U.S. frontier labs have in mind. On the other hand, even if Chinese labs are already doing things that Silicon Valley would recognize as precursors to AGI, they may not frame those activities as AGI, because they understand the word differently.

The American Approach to AGI

Today in the U.S., especially among the frontier AI labs, Recursive Self-Improvement (RSI), meaning AI that can improve itself without human assistance, has become the dominant working theory of how AGI gets built. In January 2026, Dario Amodei argued that once AI is good enough at coding and research, it will be used to produce the next generation of models, creating a self-accelerating cycle. He added that AI could do most, if not all, of what software engineers currently do within six to twelve months, at which point, he noted, progress could move faster than most expect. OpenAI likewise sees RSI as a viable path towards AGI, with Sam Altman targeting 2028 for a fully automated AI that builds the next generation of itself. While some argue that the messier, coordination-heavy aspects of AI development, such as organizational and project management, are harder to automate, there is broad consensus among frontier lab researchers that AI agents will increasingly take over significant portions of AI R&D work. Agentic coding is widely seen as the most critical capability to automate first, and by most accounts the process has already begun inside leading labs.

This narrative of RSI shapes how the “racing against China” discourse is framed in SF and DC: if automating AI research is the decisive lever, then whoever initiates RSI first wins. China, on current assessments, is not close. Against that backdrop, what the broader Chinese AI ecosystem is doing, whether investing in embodied AI, supporting open source, or promoting AI deployment, seems largely irrelevant to the question that matters. Some argue that Chinese AI, now characterized by open-source releases and low cost, only iterates rather than innovates, catching up on the commodity layer while losing the battle for real capability. On this view, even as China appears to lead the AI diffusion race that yields more immediate economic benefits, the US remains ahead on RSI, which promises rapid self-compounding gains through automated AI research, and the gap will soon widen rapidly.

This seems to be a reasonable prediction, except that not all developments in China focus solely on near-term social and economic benefits. After all, the concept of machine self-improvement leading to human-level intelligence is not uniquely American. What differs is the underlying theory of how intelligence works and what it would take to achieve it.

Embodied Closed-Loop, AGI with Chinese Characteristics

“First, you build a brain. This brain has all kinds of capabilities — language ability, image understanding, the ability to judge and recognize the physical world. Then you equip it with hands and feet so it can call upon the world model to solve problems, predict what will happen in the world, and interact with the world. The results of that interaction are fed back as a reinforcement signal. I immediately receive this signal, learn again, and modify my model. This forms a closed loop.”

— Zhang Peng (张鹏), Z.ai CEO; translated by Kyle Chan

Z.ai is far from the only voice in China discussing AGI. Western observers tend to treat DeepSeek as the lone AGI-focused lab in China, or reach a generalized argument that China is not interested in AGI. But that framing misses a growing number of important actors, from other frontier AI startups to academicians of the Chinese Academy of Sciences, who have named AGI as their explicit goal.

Skeptics may dismiss Zhang’s statement as business-motivated hype, given that it came from an interview just before Z.ai’s IPO, and he is far from the only one with an agenda. As in the US, Chinese AI actors speak about AGI for mixed reasons: commercial positioning, alignment with state rhetoric, or intellectual differentiation. However, the convergence on a similar architecture across company founders, academic researchers, and state-adjacent scientists suggests something more than coordinated messaging. Below, I trace how each component of Zhang’s loop recurs across Chinese AI discourse.

Step 1: Multimodality and World Models

Multimodality enables more dynamic real-world engagement by expanding the range of inputs a system can process and act on. The argument is that language alone cannot provide the perceptual grounding necessary for genuine environmental interaction. MiniMax’s CEO Yan Junjie (闫俊杰) states that AGI is inherently multimodal. In 2025, DeepSeek’s Liang Wenfeng (梁文锋) acknowledged that the lab has internally bet on three paths towards AGI, with multimodality being one alongside math/coding and natural language.

But richer inputs are only part of the problem. To act intelligently in the world, many argue, a system must know how the world responds to its actions. Unlike the inference-time planning in reasoning models, which searches over reasoning steps in language space, world models plan in state space, simulating the physical consequences of actions before acting. One of China’s key state-affiliated AI labs, the Beijing Academy of Artificial Intelligence (BAAI, 智源研究院), predicts that world models will emerge as the primary pathway to AGI in 2026. The lab argues that the industry is starting to move from “predict the next word” to “predict the next state of the world,” a sign that AI is beginning to grasp spatial-temporal continuity and causality. ByteDance identifies the world model as one pathway to AGI, viewing it as a key way to “explore the frontier of AI’s cognitive ability.”

Multimodality has become common practice, and U.S. labs such as Google DeepMind and World Labs are also building world models. But for many Chinese researchers, these two are not standalone paths towards AGI but the brain that makes the next step possible.

Step 2: Embodied AI

If world models provide a simulated interface for environmental feedback, embodied AI, or AI-empowered robotics, provides a physical one. What makes the physical world especially appealing is the abundance of data. Although a virtual world can provide rich synthetic data, the physical world is irreducibly more complex, and interacting with it generates training signals that simulations can hardly match. Many prestigious Chinese scientists see embodied AI as crucial to achieving AGI. Turing Award winner Andrew Yao (姚期智) states that the development of embodied AI is crucial for AI to acquire the capacity to comprehend the physical world. BAAI director Wang Zhongyuan (王仲远) claims that embodied AI’s interaction with humans in the real physical world is the key ability for AGI. Shanghai AI Lab director Zhou Bowen (周伯文) places embodied interaction at the final stage of AGI development, where AI can actively learn from and simulate the world through physical presence.

Among these scientists is academician Zhang Bo (张钹), the Director of the Institute for Artificial Intelligence at Tsinghua University, who pioneered embodied AI studies in China in the 1980s. He describes the road to AGI as passing through three successive stages of interaction: between language models and humans, between AI agents and the virtual world, and finally between embodied AI and the physical world. In his view, most approaches to AI have treated thinking as separable from the body and its environment, modeling reasoning or perception in isolation without connecting them to physical action. Embodied AI breaks from this by insisting that genuine intelligence only emerges when an agent can perceive the world, act upon it, and integrate the results back into its own cognition.

Some researchers push the claim further, extending the scope of what AI can potentially learn. Zhu Song-chun (朱松纯), dean of the Beijing Institute for General Artificial Intelligence, argues that natural abilities such as emotions and languages are the true embodiment of human intelligence. The institute actively works on embodied AI to facilitate learning and interaction with human societies in the physical world, allowing the AI to build intrinsic value systems from human examples.

Step 3: Closing the loop

With embodied AI, the loop can finally be closed. A unified multimodal brain perceives the world across modalities. A world model builds predictive representations of how the environment responds to actions. Embodied presence generates the physical feedback that neither language interaction nor simulation can fully replicate.

Alibaba CEO Wu Yongming (吴泳铭) argues that AI’s self-improvement loop cannot close on static data alone, which, however vast, is ultimately bounded by what humans have already expressed. As AI penetrates more physical-world scenarios, it gains the opportunity to build its own training infrastructure, optimize its data pipelines, and upgrade its own model architectures. Each physical interaction becomes a round of fine-tuning and each piece of feedback a parameter update; through enough cycles of that loop, Wu argues, AI will iterate itself toward intelligence that surpasses its own training.

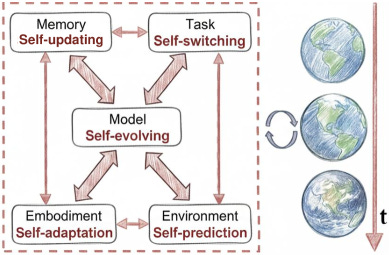

Although Wu’s vision has yet to be realized, the components of the closed loop are being assembled at speed. Across China, a growing number of companies are racing to build what the industry calls the ‘brain’ for robots: Alibaba launched RynnBrain; Ant Group open-sourced LingBot-VLA as a ‘universal brain’ for physical AI, explicitly framing it as a step toward AGI; and startups like Spirit AI and X Square Robot are developing vision-language-action (VLA) models that learn through physical reinforcement learning rather than static data. Local governments have funded robot boot camps where hundreds of robots practice real-world tasks via human teleoperation and autonomous collection, generating the kind of physical interaction data that no static corpus can provide. Moreover, researchers from Tsinghua University envision a “self-evolving embodied AI” paradigm: unlike AI that improves by rewriting its own code, this proposed system closes the loop through its physical body, continuously updating its memory, goals, physical capabilities, and underlying model based on what it learns from acting in the real world.

Unlike the RSI discourse at U.S. frontier labs, which has increasingly coalesced around agentic coding as the primary lever, the Chinese ecosystem has no single consensus path. DeepSeek focuses on multimodality without a clear interest in embodiment. Z.ai treats coding agents as central while starting to invest in multimodality-enabled physical AI. MiniMax has long emphasized multimodal architectures. ByteDance and Tencent have invested more heavily in world models. Among leading scientists, Zhang Bo and Zhou Bowen see embodied AI as the final stage of AGI development; Ya-qing Zhang (张亚勤), the founding Dean of the Tsinghua Institute for AI Industry Research, adds a biological layer beyond that; Andrew Yao maintains that large models will remain the core foundation to support all subsequent advances, including embodied AI.

What is nonetheless striking is how rarely coding is presented as a silver bullet, and how consistently Chinese researchers reach for paradigms that go beyond language models, emphasizing the full complexity of human intelligence rather than one slice of it. Rather than a superbrain built from code, as many in Silicon Valley envision, Chinese AI actors increasingly narrate a different endpoint for AI: something closer to building a human from the ground up. Compared with the months-long timelines offered by many U.S. AI executives, the Chinese self-improvement loop is larger, more integrated with physical reality, and, by design, far slower to close.

A Bottom-Up Constraint-Driven AGI

Beijing is AGI-curious, not AGI-pilled. The embodied closed-loop approach to AGI emerging in China is not a secretive Manhattan Project but a bottom-up movement, shaped by existing constraints and competitive pressures, that is gradually finding its way into the top-level vision.

Despite the stated aim to “explore AGI,” top policymakers have many other near-term issues they want AI to solve. AGI does not make its way into the executive summary of the new Five-Year Plan. Poe Zhao points out that the government’s 2026 AI agenda still prioritizes “concrete deployment targets” over “general AI ambitions.” Similarly, many AI governance researchers in China still believe that DeepSeek, and maybe now Z.ai, are the only labs in China chasing AGI, while the rest of the companies are more practically focused on deployment: less concerned with replicating human intelligence and more focused on addressing immediate development challenges. Gong Ke, the dean of the Chinese Institute of New Generation AI Development Strategies, states that, compared to chasing the grand narrative of AGI, practically diffusing and delivering AI to everyone is more important to China. Huawei’s Ren Zhengfei holds a similar view, arguing that China’s focus is on deploying AI to tackle practical development issues, in contrast to the US pursuit of AGI to answer philosophical questions about human and superhuman existence. Read in light of these perspectives, when the state says it supports embodied AI, it probably has in mind addressing economic and societal gaps resulting from China’s low birth rate and the contraction of the future workforce, rather than self-improving humanoid robots running loose on the street.

Meanwhile, the scientists who want those self-improving robots are initiating a bottom-up discourse wrapped in the framework of that top-down rhetoric. State-backed labs are creatively interpreting the AI+ initiative to justify their AGI-oriented research, including in areas like AI agent development and AI+science. Academics from elite universities and institutions are publishing reports theorizing how AGI can contribute to key areas like the manufacturing industry, public data governance, and scientific research, thereby seeking to align the presumed benefits of human-level intelligence with the state’s objectives. The official message can be interpreted in various ways, depending on individual focus, and these readings are used to justify the societal and economic utility of general, or even super, intelligence.

The emphasis on embodied closed-loop AGI is also driven by resource constraints. Chinese AI companies face real compute ceilings, and if RSI-through-coding-automation were the primary pathway to AGI, those constraints would represent a central bottleneck. Rather than treating compute as an existential gap to close at all costs, Chinese AI actors may have strong incentives to develop theories of AGI in which compute isn’t the decisive near-term variable: theories where physical-world interaction, robotics infrastructure, and embodied data pipelines matter more than raw model capability, and where the timeline is long enough for China’s chip position to improve. Within this paradigm, embodied AI is not a consolation prize but a potential leapfrog: a path to AGI where China’s manufacturing base and deployment scale become structural advantages. In this case, constraint-driven diversification, top-down focus on deployment, and genuine ideological beliefs have probably coevolved into something coherent: an embodied closed-loop path to AGI.

Although bottom-up, these AGI-minded voices are gradually gaining more influence at the top. The new Five-Year Plan’s emphasis on “multimodal AI (多模态), agentic AI (智能体), embodied AI (具身智能), swarm intelligence (群体智能)” as ways to explore intelligence, as well as “the parallel advancement of general-purpose large models and industry-specific models,” tracks closely with how Chinese AI scientists had already been framing the path to AGI. Ya-qing Zhang highlighted how “agent swarm” (智能体群) creates “collective intelligence” (群体智能) in a speech on AGI in 2025, while the idea of fusing general-purpose and industry-specific models closely mirrors Zhou Bowen’s 2024 idea of “the fusion of generalist and expert (通专融合)” as the pathway to AGI.

The most direct example of this influence came in April 2025, when Zheng Nanning (郑南宁), a professor at Xi’an Jiaotong University, briefed China’s Politburo study session (with Xi Jinping in the chair). Zheng sees AGI as machines that can perceive, act in, and adapt to the physical and social world, not merely process data. In July 2025, at China’s most important AI conference, he further touched on the idea of self-improvement loops, arguing that AI systems should be intent-driven by linking information processing to goal-directedness: given a high-level objective, the system decomposes it into tasks, acts, and feeds results back to refine its own behavior continuously.

RSI without RSI: What We Lost in the AGI Debate

China’s belief that AGI needs physical embodiment may seem reassuring to US labs that believe software capabilities will become the decisive advantage in AI. After all, with the advantage in chips, US labs can scale compute much faster than their Chinese counterparts. Even though China may catch up on chips in the future, RSI may kick off quickly enough to compound US software capabilities to a point no Chinese lab could match. From this view, Chinese scientists are pursuing a theory of AGI that will matter far less than the one American labs are betting on.

But this thinking misses an important point: what matters is not only what Chinese AI researchers and Beijing believe AGI is, but also what happens quietly beneath those beliefs. Capabilities that don’t fit the official vision, including those that look a lot like the US version of RSI, will be built without the accompanying proclamations.

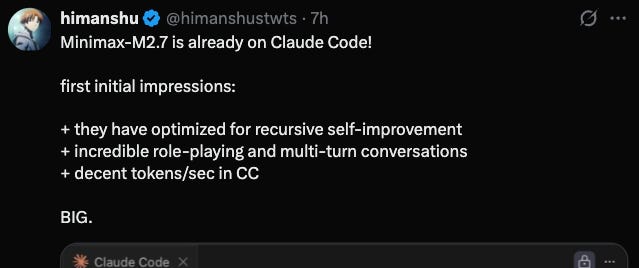

Shanghai Innovation Institute (SII), a state-backed research lab, published work on “agentic cognitive intelligence” in September 2025. It claims that its scaffold automatically captures real-world agent-tool interaction trajectories and feeds them directly back into model training, forming what the lab itself calls a “self-evolving closed loop” (自进化闭环). The lab also reports that the system autonomously discovered over 100 new neural network architectures in two days. Meanwhile, in February 2026, MiniMax, a company widely seen by its Chinese peers as purely commercially oriented with no AGI ambition, claimed that AI was already generating 80% of its newly committed code. More broadly, almost all frontier AI companies, including Z.ai, MiniMax, and Moonshot, are doubling down on AI coding agents.

By most technical readings, SII and MiniMax are trying to do RSI. However, neither of them mentioned RSI or its Chinese equivalent (递归自我改进). SII framed the whole research effort around the idea of “能动性” (agentic capability) and the state’s AI+ adoption targets, while MiniMax only briefly mentioned it was near “infinite agent scaling.”

Are Chinese labs deliberately obscuring their ambitions? Not really. Like their American peers, Chinese AI companies are maximizing their software engineering capabilities. Automating the coding process and using AI to empower research is instrumentally useful regardless of what you believe about AGI. One does not need to cite RSI as a theory or publicly announce the coming of AGI to pursue a very similar process in practice.

This means that it is wrong to treat appearances of RSI or AGI in top policy documents or corporate speeches as the measure of how determined China is to push frontier AI capabilities. There is a conceptual gap at the frontier of AI across the Pacific. The gap distorts near-term strategic signals that rely on surface readings, as Western analysts are listening for language that Chinese researchers have no incentive to use. Rather than filtering Chinese AI through a Silicon Valley lens, China-watching in AI needs to understand architectural divergence and track real capability signals.

Meanwhile, the lens Silicon Valley or DC uses to envision AGI is also shaped by its own constraints and competitive position. Just as China sees the future of AI through its manufacturing strength and chip shortage, the U.S., with abundant chips and less manufacturing capability, sees a different version. The U.S. and China’s roads to AGI appear to be different, and perhaps the destinations are too. But if each side’s vision of AGI is shaped by what it already controls, then neither is well positioned to recognize what the other is actually building.

Acknowledgement:

Zilan is grateful to Anton Leicht and Scott Singer for their mentorship on this project during the GovAI fellowship period. Zilan also wants to thank Suchet Mittal, Jason Zhou, Kayla Blomquist, and Zac Richardson for their feedback on early drafts.

More like this, more from this author.

This is one of the most important pieces I've read on the US-China AI divergence, and I think it deserves more attention from people outside the usual Chinawatching circles.

The "conceptual gap" framing is brilliant. Both sides are doing capability-equivalent work but narrating it through completely different theoretical lenses, which means surface-level intelligence analysis misses the real signal. SII's "self-evolving closed loop" IS recursive self-improvement, just wrapped in deployment-friendly language. The MiniMax 80% AI-generated code stat alone should have triggered more alarm in DC than it did.

What I find underexplored, though, is how this divergence reshapes the geopolitical calculus for everyone ELSE. If China's path to AGI runs through embodied AI and manufacturing infrastructure, then countries with strong manufacturing bases but weak compute access (Mexico, Vietnam, parts of Southeast Asia) suddenly become strategically relevant in ways the software-only RSI narrative ignores entirely. China's robot boot camps funded by local governments aren't just domestic policy. They're a template that could be exported through BRI-adjacent partnerships, creating physical AI training infrastructure across the Global South while the US focuses on chip export controls.

The constraint-driven innovation point also resonates beyond China. Every AI ecosystem outside the US faces similar compute ceilings. If the embodied path proves viable, it democratizes the AGI race in ways that pure scaling never would. That's either terrifying or hopeful depending on your threat model.