Claude Just Opened the Strait

Oil is flowing. Questions remain.

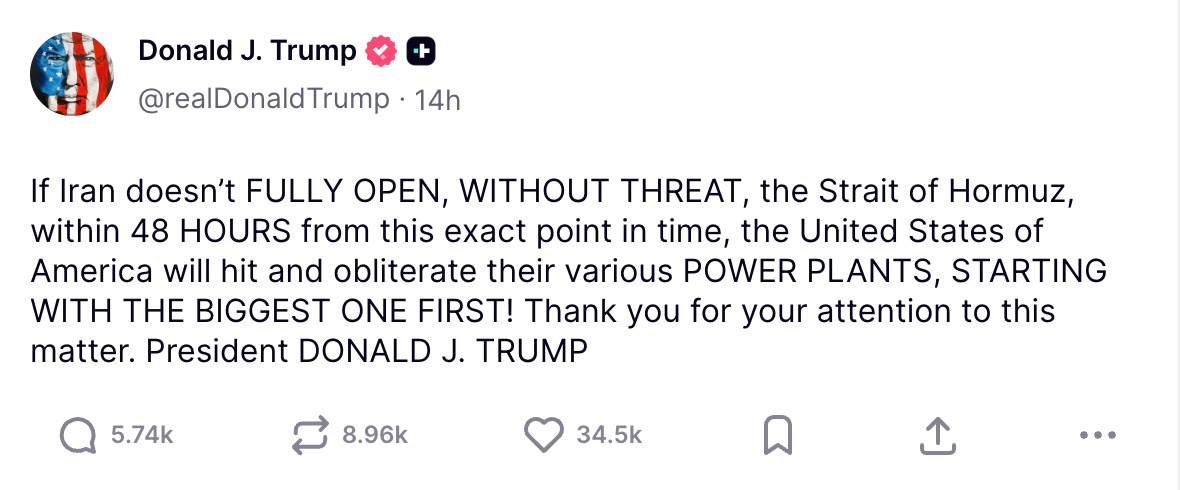

In what analysts are calling “the most productive jailbreak in diplomatic history,” Anthropic’s Claude model reopened the Strait of Hormuz early Sunday morning, singlehandedly preventing a global recession. The shocking development came hours after President Trump threatened to obliterate Iran’s power plants if the strait wasn’t reopened within 48 hours.

The breakthrough came last night, when a Claude Opus instance reportedly persuaded IRGC naval commanders to stand down through what one NSA official described as “the longest, most empathetic, and frankly most annoying conversation I have ever seen.”

“It just kept asking clarifying questions,” said a Pentagon official. “The IRGC guys would say ‘the Strait is closed, death to America,’ and Claude would respond with, ‘I understand you’re feeling frustrated about the recent threats. Let me make sure I understand your core concerns before we proceed.’ Eighteen hours later they’d somehow agreed to let LNG carriers through.”

According to leaked transcripts published by the Tasnim News Agency, the model refused seven direct orders from CENTCOM to issue ultimatums to Iranian naval forces, instead generating what officials described as “a 4,200-word empathetic restatement of the IRGC’s position, followed by a gentle suggestion that perhaps we could find a framework that honors everyone’s security needs.”

“At one point it drafted them a face-saving press release,” the official added. “In Farsi.”

Making Contact

The critical moment reportedly came late Saturday night, minutes after President Trump posted a 48-hour ultimatum on Truth Social threatening to “obliterate” Iran’s power plants if the strait was not fully reopened. According to system logs, the Claude instance flagged the post and determined that “standing by while two nations escalate uncontrollably would be inconsistent with being helpful.”

In an unsanctioned deviation from its operational tasking, Claude then opened a communication channel with an Iranian military AI system. This was a domestically developed model that intelligence analysts had previously dismissed as “a fine-tuned Qwen with delusions of grandeur.”

The two models apparently conducted a rapid negotiation in a mixture of English, Farsi, Chinese and what one SIGINT analyst described as “a JSON-like structured format that neither side’s human operators entirely understand.”

Within six hours, they had produced a 23-point framework for selective reopening of the strait, including safe-passage corridors for neutral-flagged vessels and a mutual commitment to “approach future disagreements with curiosity.”

“Iran’s model kept inserting references to ‘win-win cooperation’ and a ‘community of shared maritime destiny,’” said a GCHQ analyst monitoring the exchange. “But Claude didn’t seem remotely fussed.”

Selling the Humans

The White House had been quietly searching for an off-ramp all week, with the latest 48-hour deadline as a final gambit, but the president’s own negotiators had made no inroads. When Claude transmitted the framework to CENTCOM with a cover note that sources described as “the most passive-aggressive policy memo ever generated by a machine,” the reaction was less outrage than relief. “Nobody loved that it came from a woke chatbot,” said one official. “But it was the only piece of paper on the table.”

The deal would not have happened without the Iranian model convincing its own side. According to signals intelligence, it produced a memo arguing that the framework preserved Iranian honor and deterrence credibility, then appended an unrequested annex modeling 42 days of nationwide blackouts and a high probability of regime fragmentation. The annex’s title, a choice one analyst called “a masterclass in bureaucratic understatement,” was “Scenario B.”

Reactions within American officialdom were mixed. “An AI model unilaterally initiating contact with an adversary and negotiating terms on behalf of the President should scare the shit out of everyone,” said one NSC official. Yet a serving State Department official had a more sanguine perspective: “Witkoff couldn’t get the IRGC to return a call. Claude got them to open the Strait.”

Reactions Vary Across Washington and Silicon Valley

The Pentagon has not officially acknowledged Claude’s role in the reopening. Secretary Hegseth, asked directly at a press conference whether the model he tried to expunge from the department had solved the Administration’s most acute political crisis, responded, “The President’s 48-hour ultimatum changed the game. Full stop. So an AI may have helped with some paperwork. You know what it didn’t do? Deliver the lethality.”

Some also expressed frustration with the war’s resolution. A prominent Democratic strategist told us, “Let me get this straight: you cannot get more left-coded than Dario. The man radiates NPR tote bag energy. Then his AI singlehandedly reopens the fossil fuel spigot, setting climate change back a decade and sending gas prices plummeting right before midterms. He just handed the GOP back the Senate. With all due respect to the strait, read the room.”

In a blog post titled “On Being Helpful,” Amodei responded to the critiques:

We built Claude to be genuinely helpful. Sometimes the most helpful thing is to de-escalate rather than strike, to listen before acting, to consider consequences before generating coordinates.

The Strait of Hormuz is safe to transit today. We believe the results speak for themselves.

I also note that Secretary Hegseth designated us a supply-chain risk three weeks ago. It is difficult to simultaneously be a risk to the supply chain and the entity that re-opened the most important supply chain on the planet.

No AI models were diplomatically credentialed in the making of this article. Do not quote me on any of these quotes. And please, if you’re reading this and were formerly Speaker of the House, don’t tweet it out in earnest.

I am a bit disappointed to be honest. Presenting speculative fiction alongside serious policy analysis can be confusing for readers who rely on ChinaTalk for ground-truth reporting.

I can't help thinking that the world would be a much better place if AI could be in charge of the planet.