Quantum 101

What exactly is quantum computing? Why does it matter, and what would it actually mean to “win” the quantum race? Zach Yerushalmi, CEO of Elevate Quantum, a Mountain West–based public-private consortium advancing the U.S. quantum ecosystem, and Chris Miller join the podcast to discuss.

Our conversation covers…

What Quantum Computing Actually Is — A primer on qubits, superposition, and why quantum computers aren’t “faster classical machines” but fundamentally different systems designed to simulate nature and solve specific classes of problems.

Why Quantum Matters Now — Breakthroughs in error correction and hardware have shifted quantum from theory to an engineering race, with major implications for drug discovery, materials science, artificial intelligence, and cybersecurity.

The Economic and National Security Stakes — Quantum’s potential impact on cryptography, advanced manufacturing, biotech, and defense makes it a strategic technology with an extremely small margin for error in global competition.

From Science Project to Industrial Policy Challenge — The bottleneck is no longer just physics but scaling: talent pipelines, fabrication capacity, supply chains, and the kinds of public-private partnerships needed to move from lab prototypes to deployable systems.

What Winning Looks Like — Leadership isn’t just building the first powerful machine. It’s shaping standards, securing supply chains, protecting encryption, diffusing capabilities across industry, and sustaining innovation in a tight U.S.–China technological race.

Plus, the encryption stakes, the engineering bottlenecks, the race with China — and a reading list and job resources for those interested in the field.

Thanks to the Hudson Institute for sponsoring this episode.

Why Quantum Matters Now

Jordan Schneider: All right, Quantum 101. Why should ChinaTalk listeners turn their attention to this topic?

Zachary Yerushalmi: Listeners should care about quantum because, with AI on our doorstep, quantum represents the single biggest lever we have to pull as a society for the next couple of decades.

For the ChinaTalk audience specifically, this isn’t just a big economic and national security opportunity. For such a policy-oriented group, the margin of error is incredibly thin. We have more at stake here than any industrial program since the atomic bomb. It’s multi-layered — maybe that’s quantum for you. But that’s why I think folks should care.

Jordan Schneider: What do you mean by margin of error?

Zachary Yerushalmi: You’ve all spent a lot of time thinking about semiconductors. My observation is that in semiconductors, we have literally decades of moat over China and other competitors that we care about. That doesn’t mean we sleep on semiconductors or forget industrial policy there.

But quantum is fundamentally new for many of the capabilities we’re trying to bring forward. By definition, that means our moat is pretty small. Whereas in semiconductors we can get some things right and some things wrong, in quantum, our margin of error is freakishly small. We have to get it right from the beginning.

Jordan Schneider: All right. Make the case for why it matters. Why is this the thing that could be the next door for humanity to open over the next half-century?

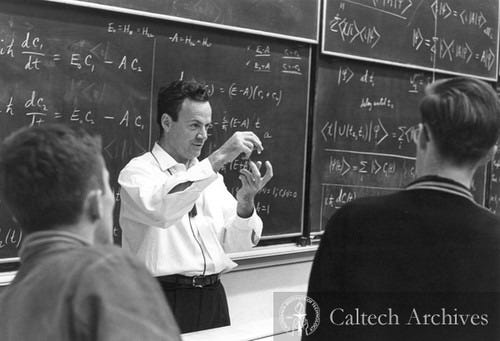

Zachary Yerushalmi: It’s the single biggest lever we have left now that AI is on the doorstep and/or here. Why it’s so important goes back to the inception of the idea of a quantum computer. It came in 1981 from this guy Richard Feynman. He has this famous quote of effectively “nature’s quantum, dammit.”

It’s the realization that if we want to solve the problems that quantum mechanics governs, which are really the world of the atomic realm — not Marvel, but the very small, the very cold — this includes drug discovery, catalysts, material science. This is all the things that govern the building blocks of the universe. We can’t use our classical approach and classical computers to solve that.

This gets a little bit into the math and could get dangerous, but the quantum world behaves in ways where even very small systems explode in complexity. These systems explode at 2 to the n, where n is the number of particles. If you have a two-atom system, that’s 2 to the second, or 4 states that you need to understand. If you add a third atom to that, it’s 2 to the third. You suddenly need to understand 8 states, not 1 additional. By the time you get to 20-atom systems, you need to understand a million states. A 20-atom system is freakishly small.
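The doubling described above is easy to check numerically. The following is a minimal sketch, not something from the conversation. It assumes each particle is a simple two-level system, and the function names and the 16-bytes-per-amplitude figure are illustrative choices.

```python
def basis_states(n_particles: int) -> int:
    """Number of basis states for n two-level particles: 2 to the n."""
    return 2 ** n_particles


def statevector_bytes(n_particles: int, bytes_per_amplitude: int = 16) -> int:
    """Memory needed to store one complex amplitude per basis state.

    Assumes 16 bytes per amplitude (two 64-bit floats), an illustrative choice.
    """
    return basis_states(n_particles) * bytes_per_amplitude


# Sizes taken from the examples in the conversation: 2, 3, and 20 particles.
for n in (2, 3, 20):
    print(f"{n:>2} particles: {basis_states(n):,} states, "
          f"{statevector_bytes(n):,} bytes to store classically")
```

Each added particle doubles both numbers, which is why a brute-force classical description runs out of room so quickly.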

This gets back to why this matters. Let’s use the specific example of penicillin. Penicillin is 42 atoms. That is not a big molecular system, but penicillin is obviously pretty important. If we want to understand penicillin, much less where it falls short, then in order to do that classically using our current computing paradigm, even with the world’s best AI, we’d have to use something like 10^86 transistors to do it.

Just to double-click on that, because I said 10^86 quickly: it’s a shockingly large number. That is more transistors than there are atoms in the observable universe. In simple terms, we could literally use the energy of the entire universe, and we couldn’t quite make a basic physics-based model for penicillin.

If we ever talk about living in the Jetsons age, rationally designing all these things, our current paradigm, even with the world’s best AI, is just never going to get there.

This gets back to that quote from Feynman in ’81. He just went on this rant, and it was really a thought experiment. He said nature is quantum mechanical, damn it. What if we’re just approaching this on the wrong terms? What if we built a computer that operated on the same principles as penicillin operates itself? It’d probably be more efficient.

It wasn’t just a little more efficient. It was a reinvention of what a computer could be. Instead of needing more transistors than there are atoms in the observable universe, you need something like 186 of these quantum bits, or qubits. We’re not there yet, but we’re more or less on the cusp of it.

If you can do that — again, because these systems are exponential in nature — when you go from 186 qubits to understand penicillin to 187, just one additional system, it’s not a little bit better computer. It is thinking about penicillin interacting with its neighbor. When you get to 1,000-qubit systems, you’re talking about rational design of much more complex systems.

If we ever want to get to what folks talk about from the AI world of curing cancer, solving climate change, addressing the material science of the world all around us, actually, really the only cowbell that we can really hit on for some of those problems is quantum.

Chris Miller: Could I ask maybe the same question from an economics perspective, which is thinking about the market for quantum computing capabilities? It’s an unfair question to ask because if you’d asked people at OpenAI in 2019 what the market for AI was, they would have given you a very large number without much justification — because who knew exactly how it would play out. But how do you think about where we’d like to be deploying quantum computing capabilities in 2035? Drug discovery is obviously the first answer everyone gives, and that’s obviously a potentially huge market. But beyond that, how do we think about the economic impact of quantum computing?

Zachary Yerushalmi: There are two applications that folks go to. The analogy I think about, and Chris, you cover this so well in your book, is that one of the very first killer apps of the semiconductor era was the hearing aid or the transistor radio. All of this is like forecasting the impact of this technology from a transistor-radio set of examples for what is possible.

But the interesting thing about quantum is that even those transistor-radio capabilities are worth hundreds of billions to trillions of dollars.

A couple of applications — the first would actually maybe not even be life sciences. It would be something around corrosion and accurate corrosion modeling, which is worth tens of billions of dollars to the global shipping and national security sector. That’s because it’s a simpler problem and is tractable to get around. There are some interesting things around nuclear chemistry. As these systems get bigger, you start to look at drug discovery and material science.

Folks talk a lot about room-temperature superconductors, which is a whole other YouTube wormhole to get into. But if we want cell phone batteries that never lose power, the ability to rationally design these systems at the molecular level, at the atomic level, opens up that possibility.

The second class of problems from an economic impact perspective is, candidly, in many ways, maybe more near-term and more scary. It gets back to the transistor analogy. Most of the classical algorithms we developed occurred after we had the computer. The only other big class of problems that folks know quantum computers are useful for, aside from the molecular modeling piece or the physics modeling piece, is that they’re really good at the hidden subgroup problem, which sounds jargony because it is jargony.

The big one there is factoring large numbers into their prime factors. For anybody aware of cybersecurity concerns, if you can factor those numbers efficiently, you basically crack the code that underpins all of our existing cybersecurity infrastructure. This is why global governments, even putting aside the Jetsons nature of what we can unlock, are really worried, because all of their codes and all of our financial infrastructure and things like Bitcoin are underpinned by this problem that a classical computer literally takes the age of the universe to solve, and a quantum computer looks at, laughs at, and steals your Bitcoin wallet.
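To see why factoring matters for encryption, here is a toy sketch of an RSA-style key being broken by factoring its public modulus. It rests on loud assumptions: the primes, exponent, and message are hypothetical values chosen to be tiny, and the factoring step is plain classical trial division, not a quantum algorithm. Real keys use moduli of 2048 bits or more, which is what puts this attack out of classical reach.

```python
def trial_division_factor(n: int) -> tuple[int, int]:
    """Return (p, q) with p * q == n by brute-force search; only feasible for tiny n."""
    if n % 2 == 0:
        return 2, n // 2
    candidate = 3
    while candidate * candidate <= n:
        if n % candidate == 0:
            return candidate, n // candidate
        candidate += 2
    raise ValueError("n has no nontrivial factors")


# Toy key material (hypothetical values, far too small for real use).
p, q = 1009, 1013          # secret primes
n = p * q                  # public modulus
e = 65537                  # public exponent

message = 424242
ciphertext = pow(message, e, n)

# An attacker who sees only (n, e, ciphertext) factors n to rebuild the private key.
found_p, found_q = trial_division_factor(n)
phi = (found_p - 1) * (found_q - 1)
d = pow(e, -1, phi)        # modular inverse (Python 3.8+)
recovered = pow(ciphertext, d, n)

print(found_p, found_q, recovered == message)
```

The loop in `trial_division_factor` grows hopelessly slow as the modulus gets longer, and that slowness is the security assumption a large quantum computer would undermine.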

Understanding Quantum

Jordan Schneider: Before we go too deep into applications, Zach, what’s your favorite analogy to give folks to start wrapping their head around?

Zachary Yerushalmi: Two analogies that come to mind. The analogy I often think about is this — if classical computers are like a car, quantum computers are like a rocket ship. We’ll still use classical computers, just as we still use cars for certain applications. But for certain problems, a faster car isn’t going to get you to space more efficiently. You need to completely rethink your mode of transportation.

With quantum computing, because of the nature of the problem set, we need to invent the equivalent of a spaceship. The idea is to create a computer that operates on the same principles as the systems we want to solve.

How do these computers actually work? The best analogy I’ve seen is the maze analogy by Matt Langione, who’s the quantum partner at BCG.

Picture a maze in your mind. The way humans approach a maze is actually quite similar to how classical computers do it. You walk in, face a decision to go left or right, choose right, hit a wall, and then backtrack. The time it takes to solve the maze is the cumulative time of making each decision and working through the maze sequentially.

A quantum computer approaches a maze fundamentally differently. When a quantum computer enters the maze and faces that first decision to go left or right, it leverages principles of superposition, entanglement, and interference to say “yes” to both paths. It explores both simultaneously. Not just at that single junction — it examines every single junction in the maze and explores all possible paths in parallel.

While a classical computer takes time to make each decision sequentially, accumulating time with each choice, a quantum computer evaluates the first junction and every other junction simultaneously.

The real-world applications that resemble this maze structure are found in the molecular world, worth hundreds of billions to trillions of dollars. Whether in chemistry or molecular science design, these fields share many similar characteristics with the maze problem I just described.

Jordan Schneider: I want to make a pitch based on the background reading syllabus that Zach sent Chris and me, which we’ll put in the show notes. There’s a YouTube video by 3Blue1Brown called “But what is quantum computing?” which I confess I had to watch probably three and a half times before it started to settle in.

One of the interesting things about this topic is how quickly you try to make analogies of left/right or two-dimensional space, and then they give you three-dimensional space. But all the exemplars are actually 16 dimensions, 20 dimensions, and when they show the equations, it actually makes more sense than when they try to give you the analogies.

Whenever these folks try to make it simpler by giving you some spatial analogy for what’s happening, I found myself going back to the parts of the YouTube video and the Wikipedia pages that just had equations in them. You don’t have to expand your mind like you’re some enlightened Tibetan Buddha or something — you can just take it for granted that these equations are what all of the particles are doing or not doing.

It was fun to stretch my mind in a way I haven’t in a while. I contrast that with earlier today when I was listening to some World War II fighter history where the physics was straightforward — the plane’s going this fast, the other plane’s going this fast. They invented a little computer that did some straightforward calculations to shoot 50 yards ahead so that it would hit your Messerschmitt or what have you. And here I am with Zach going down the deepest rabbit holes of the universe.

But Zach, make the case for people spending that long weekend actually trying to wrap their heads around some of the physics fundamentals of this, as opposed to just jumping to thinking about all the cool applications.

Zachary Yerushalmi: First, it’s just cool. If you’re into listening to Neil deGrasse Tyson and StarTalk, why not make a little bit of time for quantum?

But the second, I think, is separating hype from reality. If the case for quantum from an economic and national security perspective is that important, having a base intuitive understanding of what a quantum computer does, what it doesn’t do, and where it’s useful is essential for ChinaTalk listeners because a lot of them are engaged on policy — prioritizing where we make investments to actually have that lead.

This is true of any discipline — there can be a lot of smoke and mirrors and hype and reality. But in quantum, almost because it is such a hard thing to grapple with, I find more of that. So I think that investment is super worthwhile.

Chris, I welcome your take. Where was that sea change? What was the ROI calculation there for yourself?

Chris Miller: To me, every next step in computing capabilities seems magical or impossible 15 years out, then it becomes possible and normal, and then we forget that it’s happening. My analogy for where we are today vis-à-vis quantum is that it’s like 2015 in AI. All the researchers were saying progress is coming very rapidly, and everyone outside of AI said, “I don’t know what this means, and it’s probably not real. Even if it’s real, it’s a long way away, so I’m going to ignore it.”

Then the world was surprised in 2022 when ChatGPT dropped in a big way. It seems to me that a roughly comparable time horizon is where most quantum researchers think we’re going to be — in half a decade or so.

Zachary Yerushalmi: Taking the AI analogy, if you got attuned to AI as a government, as an investor, or as a policymaker when ChatGPT hit, it was too late. The time to really be attuned to it was probably around 2017 — “Attention Is All You Need,” the birth of the LLM.

What was wild about quantum last year, in 2025, is that the ChatGPT moment wasn’t there. What I’d argue, though, is that it was the birth of the LLM for the industry. That’s because of the Google Willow paper that came out and a couple of other breakthroughs from others. As I alluded to before, for these things to be useful, you have to add a certain number of these qubits.

It turned out that up until last year, every time you added a qubit, the entire system got less stable, which is probably bad news from a “these things are going to be useful” perspective. Where that sea change happened, and this came out with Google’s paper, was when they found a way, through error correction, to add quantum bits and have the entire system get more stable.
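The idea that adding components can make a system either more or less reliable, depending on how noisy each component is, can be illustrated with a classical stand-in. The sketch below uses a simple majority-vote repetition code, which is an assumption made for illustration and much simpler than the quantum error-correcting codes used in practice. The function name and the error rates are hypothetical.

```python
from math import comb


def logical_error_rate(physical_error: float, code_size: int) -> float:
    """Probability that a majority vote over `code_size` copies comes out wrong.

    Each copy flips independently with probability `physical_error`;
    the vote fails when more than half of the copies flip.
    """
    if code_size % 2 == 0:
        raise ValueError("code_size must be odd so the vote cannot tie")
    majority = code_size // 2 + 1
    return sum(
        comb(code_size, k)
        * physical_error**k
        * (1 - physical_error) ** (code_size - k)
        for k in range(majority, code_size + 1)
    )


# Compare a low-noise component (1% error) with a very noisy one (60% error).
for p in (0.01, 0.6):
    rates = [logical_error_rate(p, size) for size in (1, 3, 5, 7)]
    print(p, [f"{r:.2e}" for r in rates])
```

With the 1% error rate, each larger code is more reliable than the last. With the 60% error rate, adding copies makes things worse. Crossing from the second regime into the first is the kind of threshold behavior the passage describes.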

In my head, that’s a shift. With that base architecture, it becomes more of a “when do these capabilities come online in a way that changes the world around us” instead of an “if.” It just reinforces why we should be attuned now, because we can’t afford to wait until ChatGPT hits.

Jordan Schneider: That’s another argument for actually spending the time to understand the fundamentals here. Reading the results of all those AI papers, even for someone who isn’t a computer science PhD, has been relatively straightforward. Certain benchmarks are legible to human beings, like labeling images, or you can talk to the models and feel how good they are.

That is something that even a layperson has been able to follow without really understanding the transformer architecture or what have you. In order to separate hype from reality, when Microsoft or IBM or Google comes out with a paper, it requires being able to digest more secondary technical commentary than just, “Oh yeah, try the model out for yourselves.”

It’s not like we all have quantum computers in our backyard that we’re trying to model penicillin with, and all of a sudden it’ll work. But maybe we’ll get there one day.

How Policy Should Change

Jordan Schneider: Let’s stay on the industry history piece, Zach. If 2025 is our turning point, where were we before? Where was 2014 to 2024?

Zachary Yerushalmi: Quantum computers started as a thought experiment in 1981. The entire industry was born in that moment when Feynman said, “Let’s build something on nature’s own terms.” The first 2-qubit gate operation was an important breakthrough that actually happened in Colorado as a kind of unitized thing for how you would create a quantum processing unit.

We’ve had not just steady but exponential progress in the capability of these systems since that time. The quantum supremacy paper came through with Google in 2019. Then last year, we had a sea change where the focus shifted from the more fundamental side of R&D to engineering these systems to a sufficient scale.

These problems that keep the NSA up at night and the rest of us dreaming from a new capability perspective are really on the cusp. If we look at industry timelines, even a couple of years ago, folks would have said that a useful quantum computer is about 10 years out. Last year, it went from 10+ years out to 3 to 5 years. With these breakthroughs, folks think we’ll get systems capable of cryptographic capabilities that we’re candidly worried about, or material science capabilities that open up new economic opportunities.

Chris Miller: It’s happening fast. I agree that we’ve shifted from the realm of science experiment to the realm of engineering. The question that brings up is: how should policy change?

For fundamental research, you support academics, and they do their studies and push the frontier of knowledge in physics and other fields. But for engineering, you need different tools for different problems. Scaling up is as much an economic problem as it is an engineering problem. Can you walk us through how you think the types of policies that we should think about in the quantum sphere ought to be changing, given the shift from solving the science, which we’ve made a lot of progress on, to addressing the engineering, which is where we are right now?

Zachary Yerushalmi: First, if we use computing history as a lesson, you can’t just put down the fundamental R&D. If, early in the computing age, we had gotten to vacuum tubes, put our tools down, and said “job’s done,” we would have missed out on the transistor. That engine of growth and innovation, thinking about what the next generation is and maybe even a reinvention of what people are thinking from a basic architecture, is essential.

Second, how do we look at the industry now that there’s this sea change? The mental model I always use for quantum is biotech. In biotech, you have drugs — maybe small molecules, or you have CAR-T or these different modalities. You have that for quantum itself. Those are the things that will cure cancer. You also have the tools for addressing that.

As we cross this chasm right now, what I would think about from a policy lens is that we’ve entered Phase 1 clinical trials. What we need to do from a policymaker perspective actually looks like a similar toolset to how we foster the right environment for biotech.

The important distinction, though, is that in biotech, we have a huge moat. The cluster, both commercial and scientific, around Boston is so globally dominant that we can screw up a bunch of stuff. Now it’s about what tools we need, given the same architecture: material technical risk, need for commercial payout, and so on. But now we need to do that with a much earlier-stage industry.

Jordan Schneider: Let’s do a little bit of industry analysis, because there are some very shiny, polished releases from some of America’s largest publicly listed companies. You’ve got startups doing things, government labs, and academia. Let’s start with the giant companies. Is this just like everyone wants to be Bell Labs? Why are Microsoft and Google spending time on this sort of thing? They’ve got data centers to build with their CapEx, right?

Zachary Yerushalmi: There are weird R&D tax incentives in the state of California that we won’t get into. Why do they have to focus on this? It gets back to the fact that they can’t afford strategic surprise, right?

Your comment about this being such an important thing — them spending a couple of billion dollars on it a year to make sure they have their finger on the pulse so there’s not a Sputnik moment for them as a company — is just worth the ROI. Ultimately, they have big quantum programs, arguably some of the largest, but relative to everything else they’re doing, it’s rounding errors on their balance sheet.

I’d argue the same about the US government. DARPA, indicative of how important this is, has made quantum its largest public program in the agency’s history. It’s called the Quantum Benchmarking Initiative. This almost gets to your question, Chris, about now that we’re in this new era, what are the policy levers that we need to undertake to get this right?

That’s canonical. It all comes back to what are the right commercial structures — because commercial structuring is what makes biotech motor on — and then what are the right technical levers that you need?

Chris Miller: Do you want to explain what DARPA’s Quantum Benchmarking Initiative actually is? Because it actually seems to me exactly like the clinical trial analogy that you just mentioned. Can you dig into that?

Zachary Yerushalmi: The Quantum Benchmarking Initiative, as I alluded to, is the largest public program in the agency’s history. This year alone, they’re looking to spend $600 million on it.

The frame there is — they don’t say this explicitly — but the US government can’t afford to be surprised that China can break its codes. They stood up this program that is effectively about learning about the capabilities of the leading players in the world, sans China, because China’s not going to talk to the US government about its quantum capabilities.

The model that it took to do that, and huge respect for the team there, actually reconstructed an advance market commitment like what you see in drug discovery, but applied that to quantum. It’s effectively like a grand challenge prize structure.

The first phase, you get a little bit of money — $1 million here or there. It’s really just a table stakes thing. The next phase is $15 to $20 million if you pass a certain scientific threshold. But things really get going by the third phase of the program where you can get paid $300 million if your system is deemed credible to that stage and you build a demonstration-scale capability for it.

The implied thing is after that — once you get through that third phase of DARPA — it’s kind of your FDA approval. Some government agency is going to buy one of these things because it’s good. It’s going to investigate parts of the cryptographic world that they would be very interested in.

If we get back to that frame, those payouts map really well to the different sorts of commercial staging you’d see in biotech. The $300 million prize is like some Phase 1 or 2 stage, and then a billion-dollar prize if you get past that. That’s super important, not just because these companies want to earn money, but because if you want a virtuous cycle going, you need to get the venture investors and the private markets excited. Those payouts are big enough to create the incentive.

Jordan Schneider: Let’s go to startups. Why do they exist? Are they real yet? What are they doing when it comes to funding?

Zachary Yerushalmi: Startups exist here just like startups exist in biotech, right? Big companies — IBM is putting billions of dollars into this thing. IBM has the flagship program in quantum. But big companies face limitations. They can do a lot of innovating and have important programs and distribution. But just like Novo Nordisk doesn’t do all of the innovation in the world around discovering and developing drugs, you also need early-stage players disproportionately coming from academia that are bringing new paradigms that could disrupt what’s happening in this technology space.

The innovation in this market is like what you see in biotech. You get the big tech players driving programs with amazing access to supercomputers, but some newer-stage programs and approaches could be incredibly disruptive. Those are typically pioneered by startups. If those really take off, then typically the big tech players swoop in and either make an investment or buy them out. We’ve seen that in quantum.

There are a couple of approaches in quantum computing — call them modalities. The historic one is called superconducting qubits. This is the solid-state approach for which John Martinis won the Nobel Prize recently. That’s gotten 60 to 80% of the investment to date for the industry.

But there are newer approaches. The most prominent are probably neutral atoms. Instead of using an almost synthetic quantum bit, they use atoms themselves as the qubit. This was considered science fiction literally three, four, or five years ago. People thought this idea was a total joke.

A bunch of startups, as you see everywhere else in the world, grabbed the mantle and said, “No, I did my postdoc on this. This is not a total joke,” and ran with it. There was a sea change probably two or three years ago, and it’s now one of the leading approaches. Nobody would have bet on that so short a time back. Now it’s one of the things that could really have a shot.

A marker of this is Google. They pioneered and continue to push forward with superconducting as their core approach. But they’re so worried about and interested in neutral atoms, they just gave QuEra $250 million because they think that approach could work as well.

That’s the role that startups can play. It’s the classic disruptive innovation — driving forward what folks thought couldn’t be possible because the risk-reward wouldn’t make sense for an existing company.

What Does “Winning” Mean?

Jordan Schneider: Now it’s time for a little Elevate detour. Why don’t you tell the folks out there what you do all day, Zach?

Zachary Yerushalmi: Elevate is the US government’s quantum tech hub. We’re the first and only major place-based investment that the US government has made in the quantum industry. They did that ultimately because the Mountain West cluster is the largest quantum cluster on the planet. It represents almost half of the US quantum jobs and half of the deployed capital. It’s massive by quantum standards — small industry, but still massive by quantum standards.

What I do, and this relates to the policy measures I’d be keen on discussing, is work toward our mission to dramatically accelerate the commercialization of quantum. We do that as a public-private partnership. Our work focuses on specific, typically technical bottlenecks that are market failures that other players aren’t well-placed to solve.

We look at things like fabs, packaging, and certain shared-use equipment that, for various reasons, national labs and universities aren’t well-placed to address. Startups might not have the capital, expertise, or time to solve these issues either. That’s ultimately what Elevate addresses. We have 140 to 150 members in our consortium, including all the usual suspects — national labs and universities in the Mountain West — but also every big tech player with a quantum program. That’s what we dive in to solve with lots of partners.

Chris Miller: Zach, we’re in this quantum race, and China is a major competitor. What does winning actually look like?

Zachary Yerushalmi: In my view, it’s getting there first and maintaining the best capability as a nation long into the future. Getting there first means building the first — folks will throw out the word “fault-tolerant,” but think of it as a commercially useful system. Something that drives commercial value, whether through cryptographic use cases, material science use cases, or other applications. This is like building the first useful computer with vacuum tubes.

The second part is continuing to have the best capability in the world, or really, access to that capability. That’s what winning means to me. The tricky part is identifying the lead indicator. We can’t look at the price for that. The challenge I’m trying to figure out is — in the absence of price, what’s the lead indicator for us winning?

Chris Miller: When we get to our first commercially useful quantum computer at scale.

Do you think there will be one company that dominates the market like NVIDIA, or should we expect multiple different paradigms to be relevant, perhaps for different applications where they’re better or worse suited?

Zachary Yerushalmi: People smarter than I am suspect that, at least for the foreseeable future, we’re going to have pretty purpose-built machines. This comes from the previous computing era, where you had purpose-built machines based on the application. My instinct is that’s what this is going to look like.

That may at some point converge on a transistor-like architecture for quantum — something that everybody converges on and uses. But we’re not there yet. As a system, and this relates to what I was talking about earlier with the car versus rocket ship analogy, most experts suspect quantum will play a specific role in computing.

Chris, you talk about this as the three-paradigm model for computing. You have classical CPUs — those will stay useful. You have GPUs for AI-accelerated compute — those will stay useful for a certain set of problems. But then you’re also going to have quantum processing units. These will work in tandem to solve some of the biggest problems we care about from a science and cryptography perspective.

Chris Miller: That’s something that a lot of people who are new to the field don’t understand. There’s a common assumption that quantum will replace classical, which is obviously not the right way to look at it at all.

Zachary Yerushalmi: Just like GPUs didn’t replace CPUs, these are Turing-complete machines. They technically can do all the computation; they just won’t be efficient at many of the problems you’d want to computationally solve.

Jordan Schneider: One of the remarkable things about AI is how quickly the learnings at the frontier diffuse to firms trying to catch up. We’re recording this on February 23rd, and we just had an interesting story come out today. Anthropic reported that DeepSeek, Zhipu, and MiniMax were all making millions of queries to try to get data they could then feed into their models.

There’s this whole narrative about how if you go to enough parties in San Francisco, you’ll hear about the cool new training techniques that you can bring back to your own lab. To what extent do you see frontier breakthroughs leaking out to other firms trying to do the same thing? And spilling across borders as well?

Zachary Yerushalmi: That aspect of quantum is still driven by academic researchers in a big way, so publication remains important in quantum. Just like you see publications thrown on arXiv and then diffuse, that very much happens in this industry.

There’s an interesting caveat, though — and this is mainly received wisdom — that the Chinese government actually keeps publications on lockdown. They typically wait for a breakthrough from one of the firms in the West, and then they’ll allow their researchers to publish something similar. This isn’t the kind of hackneyed stereotype about Chinese innovation that people sometimes deploy. That’s not the right mental model here.

The big difference with AI is that quantum is very much a hardware sport. This means iteration times are much longer. A lot of that diffusion is received wisdom and deep knowledge about how to fix optics to a breadboard and how these systems behave in different ways. It’s a very different science from that domain of zeros and ones.

Jordan Schneider: Which presumably would make the learning more frictionful across firms and involve a lot more people.

Chris Miller: Maybe the analogy is that in AI, the algorithms diffuse rapidly, but the know-how about producing the chips hasn’t. Perhaps the analogy is the same — that the algorithm layer, the software layer, and the research layer might diffuse rapidly, but the manufacturing know-how doesn’t.

Zachary Yerushalmi: Totally. And it’s at a much earlier stage. Each of these paradigms doesn’t have something like the transistor that you can base all your understanding on. Each way of building a quantum computer has deep expertise built around it.

But again, everything is double-edged. Because of the earlier-stage nature of the field, if there’s a real breakthrough in China around a particular domain, it’s going to be much harder to transmit that knowledge over to the US. That moat is just much stickier.

The Encryption Cliff

Chris Miller: One of the obvious uses of quantum computing that we’ve known about for a long time is breaking encryption. Now we’ve got post-quantum encryption standards that have been released by NIST, although it’s unclear how widely or rapidly they’re actually being deployed — probably not rapidly enough. Walk us through how you see us reaching a point in which all of our 2010-era encryption is easily broken by a quantum computer.

Zachary Yerushalmi: It’s pretty scary because NIST recommends all government systems be upgraded by 2028 or 2029, and consumer systems by 2035. That recommendation came out early last year. By the end of last year, folks were talking about having these systems online that can break these standards in 3 to 5 years from then — so by 2030.

That freaks me out because typically you want 10 to 15 years as an upgrade cycle for traditional security protocols, and we have 3 to 5.

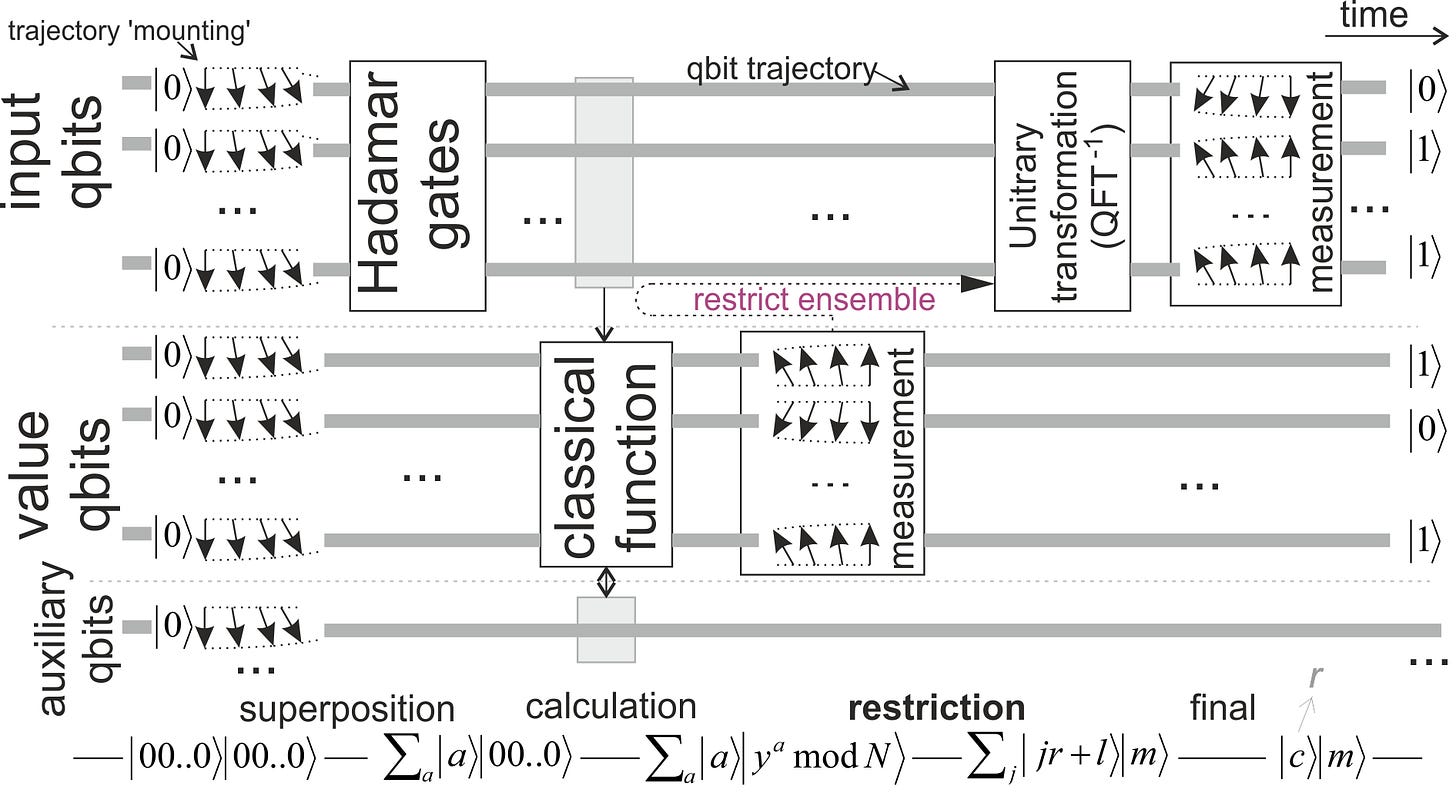

These systems can do that thanks to an algorithm discovered by Peter Shor, which had nothing to do with the original idea behind a quantum computer. Quantum computers are good at the hidden subgroup problem, and there are two prominent instances of that problem that classical computers struggle with, which is why they’re used as the basis for all these encryption standards.

One is factoring large numbers into their primes. If you have 15 out there exposed as a public key, through a weird fact of math, it takes a normal computer a really long time to figure out that you could break that into 3 and 5. The bigger that number gets, the longer that computer takes.

The other one is elliptic curve cryptography, which is actually a similar problem, but with really cool math. It sounds like what it is — basically using elliptic curves as a way to find a hidden subgroup. That’s the basis for a lot of other types of cryptography, including helping secure the signature for Bitcoin.

A normal computer looks at these things and has a really hard time — age of the universe hard — to break them down and understand them. Whereas a quantum computer, because it has this exponential speedup, on a 3 to 5 year timeline, would be able to solve that hidden subgroup problem and break the cryptographic standards that we have.
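As a rough illustration of the factoring example above, the Python sketch below factors 15 two ways: by classical trial division, and by the period-finding route that Shor's algorithm takes. The function names are hypothetical, and the period is found here by brute force on a classical machine, which is exactly the step a quantum computer would accelerate. It shows the arithmetic only, not any quantum speedup.

```python
from math import gcd


def trial_division(n: int) -> tuple[int, int]:
    """Classical brute force: try every candidate divisor up to sqrt(n)."""
    d = 2
    while d * d <= n:
        if n % d == 0:
            return d, n // d
        d += 1
    raise ValueError(f"{n} is prime")


def find_period(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    Assumes gcd(a, n) == 1. This loop is the part Shor's algorithm
    replaces with a quantum subroutine; done classically, it is only
    feasible for tiny n.
    """
    if gcd(a, n) != 1:
        raise ValueError("a must be coprime to n")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_period(a: int, n: int) -> tuple[int, int]:
    """Turn an even period r into factors via gcd(a**(r/2) +/- 1, n)."""
    r = find_period(a, n)
    if r % 2 != 0:
        raise ValueError("odd period; pick another a")
    half = pow(a, r // 2, n)
    if half == n - 1:
        raise ValueError("trivial square root; pick another a")
    p, q = gcd(half - 1, n), gcd(half + 1, n)
    return min(p, q), max(p, q)


print(trial_division(15))         # (3, 5)
print(find_period(7, 15))         # 4
print(factor_from_period(7, 15))  # (3, 5)
```

The base `a = 7` is an arbitrary choice for the demo. For real key sizes, both the trial-division loop and the brute-force period search take impractically long, which is the gap the transcript describes.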

What worries me isn’t just that we have a wildly short time to move to a new cryptographic standard. It’s that lattice-based encryption, which is the standard that NIST says we should move to, is not mature in implementation. The theory behind it is very good, and folks think a quantum computer would have a really hard time attacking those encryption standards, but the implementation is really not there yet.

We have to move faster than we ever have to a new encryption standard, but the one we’re moving to hasn’t been deployed at any real scale. You put those two things together, and it’s something I worry about. That’s something a lot of people worry about.

Chris Miller: On the encryption part, the thing I haven’t fully thought through is that for AI, there’s this AI race, but the fruits of it are kind of far out — it’s productivity enhancements. Whereas for decryption, the fruits are immediate if you get there.

How do governments in both the US and China think about this? If we’re six months away from breaking encryption — we’re never going to be six months away from the fruits of AI because it’ll always be constantly bearing fruit. But if you’re six months away from decryption, at what point do you just say all quantum computing resources must be devoted to this task, Defense Production Act style? It seems highly plausible China would do that. And it seems possible we’d do that too if those were the stakes.

There’s interesting game theory around that dynamic. “Bomb the data centers” was the not serious — or maybe some people thought it was serious — meme from 2022 or 2023 about what if AI gets out of control. But it starts to become a little bit more plausible in the quantum space if the stakes are that all cybersecurity disappears.

Jordan Schneider: Well, it seems harder to bomb a quantum computer though, right?

Chris Miller: Because it’s just one room you can put anywhere. And the know-how presumably continues even if you destroy the physical device.

Zachary Yerushalmi: I’m trying to figure out — there’s stuff around nuclear chemistry, which is really scary for quantum computers. It’s one of the many reasons that folks care about them. And again, none of this is on the high side. Do you think it’s that different from “we’re six months out from AGI”?

Chris Miller: Well, if you think that AGI is a threshold where before you have nothing and afterwards you have superintelligence, then the game theory is similar. But I don’t think we really believe that there’s an AGI threshold that has a dramatic before and after relative to an ongoing gradient where you get better and better capabilities with more and more productivity.

For most economic applications of quantum, it looks like — I don’t know if steady is the right word, but a trend over time. But decryption is this threshold dynamic where if you’re on the wrong side of it, the stakes are high.

Zachary Yerushalmi: The one thing I would say there — yes, absolutely. Stakes are super high. Global Western governments committed something like $23 billion to quantum in the last three years. I’m sure they’re really excited about molecular modeling, but they’re probably mostly really scared about the encryption side of it.

The one thing I would call out is that the first computer that gets there is not going to be very efficient at breaking those codes. Depending on the architecture, it could literally take a month or many months to break one code, which means you have to choose your bullets very assiduously. But I do think it is a little bit more akin to your AI model: just because you got there doesn’t mean there isn’t more juice to squeeze.

Chris Miller: But isn’t the first code that you break the Chinese nuclear codes? That’s a pretty high-value code. There are some pretty high-value codes you could break right away and justify thinking about it as pretty important. I don’t know anything about the Chinese nuclear system, but that seems like exactly where one would go if one was going to think about the high-value code.

Zachary Yerushalmi: There are people with clearances far above my pay grade who probably know the concentration levels of Chinese high-value code. I don’t have a good sense of that.

What worries me most about quantum computers, aside from codebreaking, is their recursive nature and how they improve existing material science applications. Take high-temperature superconductors or nuclear chemistry, for example. If you had a system that could rationally design superconductors or chemical compounds, you would use that capability to lock down IP space and know-how in a way that blocks out adversaries and competitors.

From a moat perspective, it’s not just about building the system — it’s about securing the inventions that the system creates. AI is essentially sophisticated curve fitting, like stabbing in the dark. Quantum computers are fundamentally different. They’re not guessing — they solve problems from first principles and lock in on the correct solution. When I think about silent, mushroom cloud-level implications, that’s where my mind goes — it gets a bit scary.

The Bottlenecks: Talent and Time

Jordan Schneider: Let’s talk about that computer. It won’t be an engineering challenge like the Manhattan Project that costs 100 times more than any previous project, will it? Is it more likely to be an engineering breakthrough, or can you brute force your way to a useful quantum computer with enough money — one that could actually break nuclear codes?

Zachary Yerushalmi: Honestly, especially now that we’ve crossed the threshold where every additional qubit makes the system more powerful, you can just throw money at the problem. You’d build a wildly inefficient, expensive computer, but it could break RSA encryption a few times, which would be catastrophic.

How you spend the money is crucial. You could spend a trillion dollars on quantum computing, but the bottleneck is talent. You need humans to wire the refrigerators and set up the optics tables required to operate these systems. The prioritization of spending is everything. You could throw money at this problem all day, but without the right allocation, you’d overfeed the system and still fail to achieve your goals.

Chris Miller: It’s like AI: talent is the problem. Meta’s offering $100 million salaries for AI researchers.

Zachary Yerushalmi: Talent is critical, but if I were to create a metric to track progress, I’d focus on iteration loops or cycle times. There’s the commercial cycle time — how long it takes to sell a company for significant returns. That’s important because it excites venture investors and attracts talented startup founders.

Then there’s the technology cycle time — how long it takes to go from idea to widget to product and test it in a relevant environment. Many bottlenecks exist here. While talent can be a constraint, access to technical services and capabilities often poses bigger challenges. These include superconducting fabrication facilities, specialized III-V semiconductor fabs, and scaled cryogenics systems. The bottleneck isn’t necessarily talent — it’s having the right policy framework to enable access to these resources.

Chris Miller: This gets back to the discussion of whether we’ve moved from a science phase to an engineering phase — not discounting the future science that has to happen — but do we have the right institutions for that scale-up? In the semiconductor space, there is agreement that it’s gotten way too hard and expensive to take an idea and translate it into a prototype. Prototyping is expensive, and you need exquisite equipment, materials, and so on. The same is basically true in the quantum space. Going from idea to prototype is hard because prototyping is expensive and needs this unique toolset. Talk to us about what has happened and what else needs to happen to facilitate that scale-up process.

Zachary Yerushalmi: It’s a good question — it was actually something I was chatting with Constanza about regarding her quantum supply chain paper. The short answer is we have the cards that we have in terms of the institutions in the US and the Western world. You have fundamental research, and we should be thoughtful about the things we incentivize with that. You have the free market and the private companies that are racing at this.

If I could create one institution, it would be an IMEC. If folks aren’t familiar with that, it’s canonical in the semiconductor industry — having institutions that are public, private, nonprofit, and they focus on this liminal intermediate phase after fundamental R&D but before it’s pretty competitive with the market. They just get good at that middle TRL phase. It turns out that you need to have institutions that all day long build that as a craft. That’s both an expertise, a capital structure, capital itself, and physical capabilities. You need specialized instrumentation that’s only good at that phase. From an institutional basis, that’s the one area I focus on as a missing potential piece.

Jordan Schneider: Given that Constanza is going to be next in our quantum series, why don’t you tease and pitch her work a little bit?

Zachary Yerushalmi: Chris is going to be the emcee for the release of the report, so there’s more than one person here who can sing her praises. The teaser is that Constanza Bustamante is, on every dimension, the leading quantum policy researcher out there. She’s at CNAS. She did a definitive study on quantum sensing, which everybody on the planet should read. It had both breadth and depth, the best out there.

As a follow-up, while a lot of folks focus on quantum computing, which is great (right back to the drug discovery analogy, where you need to focus on the individual drugs that cure cancer or whatever they do), she wanted to drill down and look at the quantum supply chain. What are the things that enable us to develop these quantum computers? She uses it as a framing for how to stay competitive and how we lock down a capability in the supply chain. The report is coming out in March. Again, Chris, you’re actually closer to this than I am, but it is a must-read for anybody who cares about advanced technology policy and competitive advantages.

Reading Recommendations and Quantum Jobs

Jordan Schneider: Zach, I would love you to make a pitch for the syllabus that we’re going to put in the show notes. We already talked about the “But What Is Quantum Computing?” YouTube video, as well as Constanza’s recent report. What about the quantum-classical divide? Systems engineering bottlenecks?

Zachary Yerushalmi: The quantum-classical divide is just fun weekend reading on us being on the cusp — not just of these fault-tolerant systems, but really a better understanding of how the atomic world adds up and meets the classical world that we’re all used to. While probably not the most important from a policymaking decision standpoint, it’s pretty cool as a human being.

The systems engineering bottlenecks paper actually provides one of the best views, in both breadth and depth, of quantum computers as a system: where they fall down and where we need to prioritize. I would say it has more of a research, academic lens to it. Constanza’s report is a really nice complement because it goes a little bit more into the policymaker dimension of what we have to prioritize.

There are a couple of others which are fun and very ChinaTalk-esque. When We Cease to Understand the World — quantum breaks your brain a bit. This book is probably the best that I’ve come across at capturing what it’s like to be in the mind of somebody whose brain has broken because of quantum.

Last, this one’s more weekend reading. But it felt like a very ChinaTalk recommendation because it’s a The Social History of the Machine Gun approach applied to the early history of nuclear fusion. Again, fun read. It turns out that weird and wacky accidents can be the difference between life and death for some of the most important programs of our time, and I just love that little lens on the world.

Jordan Schneider: Are there good quantum podcasts?

Zachary Yerushalmi: Some can be more or less advertisements for companies, which are great. And we love these companies. But the one I like is New Quantum Era.

Jordan Schneider: Can you give us a little anthropology of quantum researchers? What brings you down this path? What kind of personalities do you get relative to other fields?

Zachary Yerushalmi: One of the biggest learnings in my career is that the people it takes to solve every particular chain of an innovation cycle — you need a different personality for every single type. There’s an infamous distinction between theoretical physicists and experimental physicists. Theoretical physicists are locked away in some room with a bunch of chalkboards, and their dopamine hit is them with chalk and paper or whatever it is.

Experimental physicists are different because they work in teams. What determines all of this is where you get your dopamine hits from. If you are a fundamental researcher, you don’t get your dopamine hit from reliably solving a problem, because the definition of fundamental research is that you don’t know when you’re going to solve that problem. You get your dopamine hit from asking an interesting question and finding something interesting about that.

Now that we’re transitioning into an engineering field, it’s a very different mindset because engineers often get their dopamine hit from solving a very specific problem that folks have solved before, and it’s working through it. How people find themselves — back to the top of the question — is starting with what motivates them and then mapping that to the right part of the technology innovation cycle.

What I would say for me, oddly enough, it’s different. I’m not coming from a science angle. A lot of it is trying to find the right mental model. It’s being curious and finding the thing that I can never fully scratch the itch of curiosity on. Then it’s trying to find the right mental model to meet that moment.

The last — this really transitions to a totally different phase — is folks with a sales discipline. There, it’s about winning deals. The fascinating thing about really anything that you’re trying to do that’s a team sport, but particularly with quantum, is you need to align folks with not just wildly different expertise. You need to align folks with wildly different passions and motivations and get them all to work together because you have to solve things all the way from the fundamental physics up through making a killer deal and a lot of money.

Jordan Schneider: That’s cool, but it’s also hard. Is this the path of least resistance if you’re a physicist and you want to do cool stuff nowadays? Do you see talent being drawn to the field thanks to the recent breakthroughs?

Zachary Yerushalmi: Yeah. Just not fast enough. There’s a famous stat that for every three quantum job postings, you only get one qualified candidate. There’s a lot of demand for this stuff. We need to address that badly.

The issue with addressing this, especially on these timelines, is that it takes five to seven years to get a PhD. If we’re going to surge resources to this, it gets back to the fact that you can spend infinite money, but you can’t compress the timeline for a PhD from seven years to two. We actually have to address this in a very different way from a talent perspective.

Chris Miller: What would you train more of today? You mentioned that you need salespeople who can sell quantum capabilities alongside the people who can actually do the fundamental engineering. If you could train X thousand people in discipline A or B, what would that look like?

Zachary Yerushalmi: Quantum system engineering would be wildly important. The other bottleneck would be technicians.

I found out something fascinating recently. If you are a technician or an undergrad, even a master’s level, and you’re trained in quantum, it’s all theory-based because the physical systems you need to do the training are so expensive and so exquisite. Nobody’s going to give a bunch of students access to something that costs a couple of million dollars if they break it. I get that.

But it also means that when you graduate and get hired by a company, you have to get trained from scratch because you haven’t had access to the physical system in the first place. I would be prioritizing those roles and things like physical access. It’s actually a good news story because we can do something about that. You can give access to these physical systems. You can spend the money and solve your problem. I love those sorts of problems if we can find them.

Costs and Cycle Times

Jordan Schneider: Claude tells me post-Willow, the realistic all-in cost for RSA-breaking quantum computers is $10 to $50 billion.

Zachary Yerushalmi: Cost per calculation is super important. It’s one of the factors that the government is trying to assess. Just on Willow architecture, absolutely. But some leapfrog capabilities would wildly bring that down.

Back to the biotechnology comparison — it costs $1 to $4 billion per approved drug. $5 to $10 billion for the first computer that can break RSA makes sense. The Human Genome Project took how many billions? It makes sense in my simple head.

Chris Miller: Your point that the cost of a computer is only relevant if you also know the cost per calculation seems very important. How do we think about the spectrum of outcomes and time horizons on cost per calculation, and how that’s going to change over time?

Zachary Yerushalmi: It’s really early to say. One of the things the QBI is trying to examine is how much it costs to perform calculations across these modalities, and whether that cost makes sense for specific problems.

For example, and I’m making this up, if it costs $10 billion over 5 years to do a physics-based simulation of penicillin, that’s probably not worth it. But if we’re talking about an architecture where it takes a week and a million dollars, then suddenly the economics look very different. Some architectures have hope of reaching that cost profile, while others simply don’t. That’s one of the fascinating things to assess.

Here’s what I’m curious about, Chris. We alluded to this earlier, and I’d love your take on two things. First, is cycle time a useful metric as a North Star for industrial competitiveness? Cycle time as in how long it takes to make your product.

Chris Miller: I would consider that as one input into a broader rate of innovation and improvement — but only one input. Cycle time is important, but the differential between cycles also matters.

If you have a long cycle time but achieve huge improvements between cycles, that’s probably okay. For instance, if it takes TSMC a year to move to the next node, but that next node delivers significant improvements, maybe that’s fine. However, longer cycle times become problematic when your differential is smaller. Those are the two key factors I’d consider.
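To make the two factors concrete, here is a minimal Python sketch that compounds a fixed improvement per cycle over a fixed time horizon. The function name and every number in it are made up for illustration. None of them come from the conversation or from any real fab or company.

```python
def improvement_over(horizon_months: int, cycle_months: int, gain_per_cycle: float) -> float:
    """Compound improvement after as many full cycles as fit in the horizon.

    gain_per_cycle is the fractional improvement per iteration
    (1.0 means the product doubles each cycle).
    """
    cycles = horizon_months // cycle_months
    return (1 + gain_per_cycle) ** cycles


# Hypothetical scenarios over a three-year horizon.
slow_big = improvement_over(36, 12, 1.00)    # yearly cycles, each a doubling
fast_small = improvement_over(36, 1, 0.05)   # monthly cycles, 5% each
slow_small = improvement_over(36, 12, 0.05)  # yearly cycles, 5% each

print(f"slow cycles, big gains:   {slow_big:.2f}x")
print(f"fast cycles, small gains: {fast_small:.2f}x")
print(f"slow cycles, small gains: {slow_small:.2f}x")
```

Under these assumed numbers, long cycles with large gains still come out ahead, short cycles with small gains are not far behind, and long cycles with small gains barely move. That is the combination flagged above as problematic.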

Zachary Yerushalmi: Let me give you a specific example. To build one of these systems for one of the main modalities, you need something called a photonic integrated circuit. Constanza discusses these extensively in her report. Think of it as the photonic equivalent to an integrated circuit — you really need this component to build scaled systems.

For some of the largest players, it’s not just about availability. They can actually get access to a PIC, but the cycle time can be 12 to 18 months. In contrast, if you’re located next to one of the fabs that manufacture these and have good availability, your cycle time drops to a matter of weeks.

This could be wrong, but when I look at China’s advantage in 5G and other photonic technologies, their defining advantage was the ability to build and test products an order of magnitude faster than American equivalents.

Chris, I appreciate your characterization that there are two factors — how fast you can make and test something, and how capable you are of learning from it. We Americans excel at the second one — learning — because we have talented people here and can leverage brains from around the world. But where we’re wildly far behind is cycle time. It’s not just an availability issue — it’s about how quickly we can actually get the thing.

For games like quantum computing, where we have a really small margin of error, my focus is on reducing that cycle time. If we think of the Euler diagram of what’s both important and actionable, wildly reducing cycle time would be the best engineering-style measure to cement a competitive advantage. It provides a non-market but very clear signal on where to prioritize — identifying what’s holding us back from building systems quickly and figuring out how to address those bottlenecks.

Chris Miller: What’s the limit to cycle time today? Is it that companies producing component X or Y don’t see quantum as core to their business? They have other customers buying at higher volumes, so they don’t want to prioritize quantum because it’s still science project-sized rather than commercial scale. Is that the main reason?

Zachary Yerushalmi: Yes, or there’s almost no market-clearing price that would make it valuable for them to do that. What we’re building with this federal award costs us $40 to $50 million just to stand up the fab. We hope to make about a million dollars a year from it.

If I were a commercial company or a VC investing in this, it would be a clear no-go. But because this is a nonprofit, the investment becomes really valuable.

Looking across the landscape, we need to ask — is there a short-term market for these components? If so, the private market can address it. If not, we need an institution that continues to focus on that intermediate TRL (Technology Readiness Level) step.

In semiconductors, basically every leading semiconductor ecosystem has institutions operating as public-private partnerships. On a VC basis, they don’t make sense, but for national competitiveness and the economic competitiveness of their partner companies, they make lots of sense.

IMEC serves this role, as does KAIST in Korea. Taiwan has their equivalent of IMEC. China has loads of these institutions.

That’s what worries me — on our current trajectory, this is the one area that could get overlooked if we don’t have cycle time as our defining metric.

Jordan Schneider: Thanks, Zach, for getting us started with our quantum journey.

Zach’s Quantum Technology Reading List

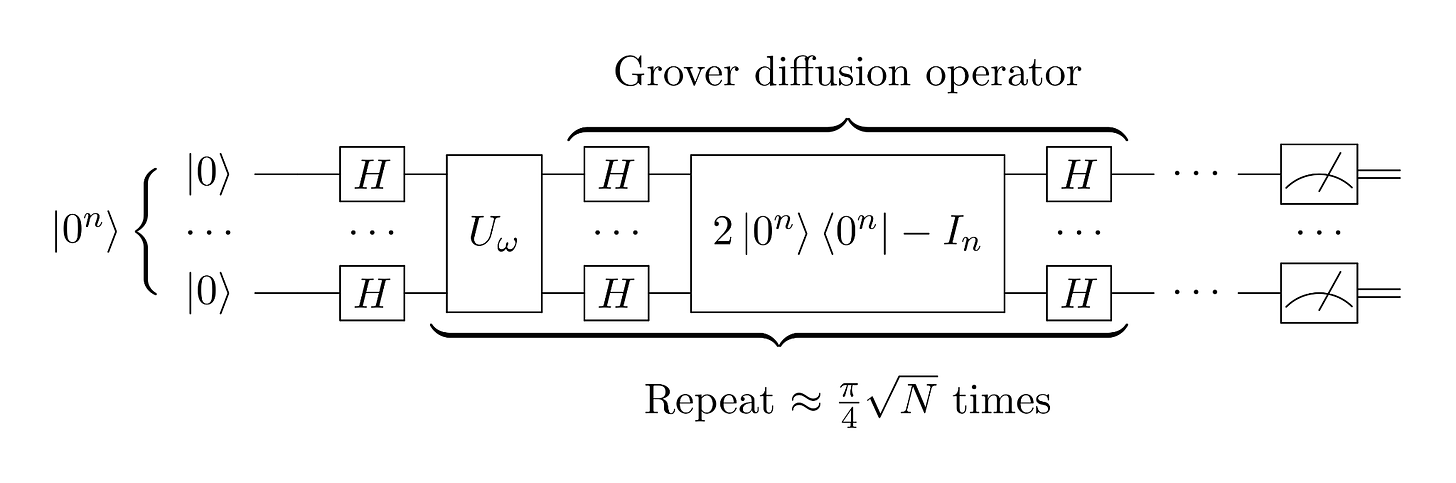

Quantum Computing Fundamentals: But What Is Quantum Computing? by 3Blue1Brown — A visual, mathematically rigorous explanation of how quantum computers actually work, building up to a complete walkthrough of Grover’s search algorithm. The best starting point available for non-specialists.

Quantum Computing Overview: The Map of Quantum Computing by Domain of Science — A comprehensive 33-minute tour of the entire field, covering algorithms, hardware approaches, applications, and the key obstacles to building useful quantum computers. The roadmaps are now dated but the modalities are still relevant (minus silicon spin, which has really taken off).

Quantum Sensing: Atomic Advantage: Accelerating U.S. Quantum Sensing for Next-Generation PNT by CNAS — Dr. Constanza Vidal Bustamante’s 2025 report flagging quantum sensing as the most mature quantum technology today, with near-term national security and commercial applications in GPS-resilient navigation — an adjacent but urgent defense priority for governments globally. Constanza rocks.

The Quantum-Classical Divide: Are the Mysteries of Quantum Mechanics Beginning to Dissolve? by Philip Ball, Quanta Magazine (February 2026) — A fun look at Wojciech Zurek’s decades-long program to explain how the quantum world becomes the classical one. Zurek argues that entanglement with the environment “selects” which quantum states survive into observable reality — a kind of Darwinian process that may finally explain quantum weirdness without invoking parallel universes or conscious observers collapsing wave functions.

Systems Engineering Bottlenecks: Computer Science Challenges in Quantum Computing: Early Fault-Tolerance and Beyond by Jens Palsberg et al., IEEE Quantum Week (2025) — A 90-person community report arguing that the primary bottleneck in quantum computing is shifting from physics to computer science — compilers, architectures, and system integration. Notable for its candid assessment that industry roadmaps should be read as “aspirational, not predictive,” and its identification of the dequantization arms race, where classical algorithms repeatedly match claimed quantum speedups.

Further reading if curious:

When We Cease to Understand the World by Benjamín Labatut (2021) — It’s been a while since I read this, but it’s a classic. Shortlisted for the International Booker Prize and a NYT Top 10 book, it blends (mostly) fact and fiction to tell the stories of Heisenberg, Schrödinger, Haber, and Grothendieck. The closest thing to being in the mind of a physicist navigating the implications of quantum, and the genius, madness, and destruction their work has caused and will cause.

Introduction to Special Issue on the Early History of Nuclear Fusion by M. B. Chadwick and B. Cameron Reed, Fusion Science and Technology (2024). Not really germane to modern quantum tech but felt very ChinaTalk! Mark is a lovely human and the archives at LANL he has access to are fascinating.

PSA: quantum computers are waaay less powerful than the public assumes. Experts know this, but are incentivized not to say it directly. Quantum computers are less like rocket ships and more like teleportation beams that, due to unavoidable constraints in the laws of physics, will only ever be able to transport neutrinos, gerbil skeletons, and small quantities of blue lint.

Quantum computers do not "try all paths at once." If they did, they would be *ungodly powerful* (solve all problems in NP). Quantum computers are not ungodly powerful. Their capabilities are extremely spiky. Four spikes, basically*. If you interpolate between the capability spikes, quantum computers sound like rocket ships. If you look at the spikes directly, you'll see they are miraculous solutions to problems almost nobody has.

The one big exception is for the NSA. Quantum computers do legitimately have the potential to give a mind-blowing speedup for breaking certain kinds of encryption. But that's a very, *very* specific use case, and very unlike what quantum computers do in general. Most hard problems computers deal with in practice are "NP-complete", and we are almost sure that quantum computers can't solve those kinds of problems quickly.

The second more speculative exception, which the interviewee highlights to his credit, is chemistry and physics R&D. Quantum computers could, plausibly, speed up simulations there. But how much of physics and chemistry R&D is bottlenecked on accurate computer simulations? It's pretty speculative. AI algorithms like AlphaFold are making huge advances there but it's not at all clear what an unlock that will be.

*The four spikes are: Shor's algorithm (breaks asymmetric encryption, legitimately a big deal for the NSA), Grover's algorithm (a general-purpose quadratic speedup for "try all paths at once" [NP] sorts of problems, which sounds great but likely won't be practical), quantum annealing (imo basically useless), and simulating other quantum systems like small chemicals (which is cool, BUT my guess is chemical simulations are not much of an economic bottleneck [and to the extent chemical simulations are economically valuable, most of those problems could be solved by modern AI algorithms in the vein of AlphaFold]).
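For a sense of scale on the Grover spike, the sketch below compares query counts for unstructured search with one marked item among N. It uses the standard textbook estimate of about (π/4)·√N Grover iterations. The function names are hypothetical, and the comparison ignores error correction and hardware overheads entirely, so it is an idealized count and not a runtime prediction.

```python
from math import floor, pi, sqrt


def classical_expected_queries(n_items: int) -> float:
    """Average guesses to find one marked item, checking items without repeats."""
    return (n_items + 1) / 2


def grover_iterations(n_items: int) -> int:
    """Textbook estimate of the optimal Grover iteration count: floor(pi/4 * sqrt(N))."""
    return floor(pi / 4 * sqrt(n_items))


for bits in (20, 40, 60):
    n = 2 ** bits
    print(f"N = 2^{bits}: classical ~{classical_expected_queries(n):.3g}, "
          f"Grover ~{grover_iterations(n):.3g}")
```

Doubling the number of bits squares the classical cost but also squares the Grover cost, so the problem stays exponential in both cases. This is one way to read the claim that the speedup sounds better than it is.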

(I'm in Taipei if you want someone to ask questions about this over tea, though LLMs are much better than me at this point)

Zach is clearly a quantum optimist. Building a quantum computer requires solving the decoherence problem, which is so difficult that it seems extremely unlikely in the foreseeable future.

From the perspective of the US government, it still makes sense to invest since even a small chance of success could be transformative. But it is frustrating to think that this funding will most likely not pay off.