RAND's Matheny on AI, X-Risk, & Bureaucracy

“We underestimate the peculiar effects of bureaucracies on decision-making.”

Jason Matheny recently passed his first anniversary as CEO of the RAND Corporation. This is part two of my nearly two-hour conversation with him, one of my favorites this year.

Matheny has held senior leadership roles in national security and technology policy at the White House, the Center for Security and Emerging Technology, and the Intelligence Advanced Research Projects Activity.

In today’s excerpt, we discuss:

RAND’s tradition of methodological innovation in the shadow of catastrophe.

Why biosecurity risks are so prevalent … and likely to escalate.

How bureaucratic politics can lead to insane decisions and existential risks.

This interview took place in June 2023 and is brought to you by the Andrew Marshall Foundation and the Hudson Institute’s Center for Defense Concepts and Technology. They’ve generously sponsored a ChinaTalk series on the role of technology in shaping bureaucracies and operational concepts.

Methods of Madness

Jordan Schneider: RAND made a lot of methodological innovations during the early Cold War. These efforts were downstream from a larger purpose — trying to stop a nuclear holocaust.

It’s not shocking they developed interesting theories on strategic bombing or nuclear weapons. But they also came up with more foundational innovations in modeling and computer science so they could construct those theories.

Given the specter of existential risk today, how is RAND continuing its tradition of methodological innovation?

Jason Matheny: Our current work on decision-making under deep uncertainty falls into that category of methodological innovation. It’s really important work.

This research program contrasts with work that I had been involved with earlier on generating precise probabilistic forecasts of events. This is sort of the other side of that.

Let’s say that you can’t produce precise probabilistic forecasts, and there are a lot of important problems in that category. How do you make decisions that are more robust? There’s a long line of research, as you note, treating methodology as a tool that supports high-consequence decision-making.

The Delphi method would be an example of this. It’s still one of the most useful and probably underused tools for judgment and decision-making. It’s a way of eliciting forecasts or probability judgments and combining them in ways that are less prone to groupthink and various kinds of biases. That type of work continues at RAND.

I’m really interested in building on this. Are there other tools and processes that can advance analysis on the most consequential problems in policy that we’re not yet widely using? That could be crowdsourced forecasts, prediction markets, or prizes and bounties for finding analytic information outside of RAND.

Figuring out how to leverage the 8 billion brains outside of RAND seems pretty important. We’re just 2,000 people here. There’s a whole lot more knowledge and expertise outside the organization. How do we leverage that? How do we incentivize it?

Applying advances in large language models to analysis is also important — everything from doing literature reviews to figuring out how to elaborate on an outline or generate hypotheses.

Large language models are just a really incredible new research tool.

Jordan Schneider: I wanted to pitch you on making RAND the AI-for-social-science hub of the twenty-first century. Universities are probably a little too fragmented to make the sort of institutional bets that you’d need to really do this.

Jason Matheny: We are working at RAND to make sure all our staff have exposure to and training in large language models, and that they think about the ways these models can help their work, not just on the research side but also on the operations side.

We’re already seeing researchers start to lean on these tools heavily in their work. That’s only going to grow. As we see these tools become more capable, the number of applications — the ways in which they can boost analysis and serve as sort of an amplifier for the cognitive work that’s being done by researchers here — is going to grow.

It still requires error checking. It still requires a lot of editing and a lot of checking citations to make sure they’re real citations and not hallucinations. But we’ve already seen the accuracy improve just over the last few months. We’re seeing fewer of these hallucinated references.

We’re seeing improvements in the ability to summarize a document well, create an annotated bibliography, and find and synthesize work in a domain we’re not actually sure will be particularly relevant to our main analysis.

We wouldn’t necessarily want to spend weeks surveying and summarizing a tangential domain, but we want to get the gist to know whether it’s worth a deep dive. Being able to do that analytic triage is something for which these models are very helpful.

We’ll know within two to three years if someone whose mind is nimble enough can do the RAND multidisciplinary approach as an individual. Hopefully you’ll be able to set it up, even if you’re not a trained economist or sociologist.

You could ask RAND GPT, “As a RAND sociologist, what are the sorts of things I should be looking at?” It could give researchers the power to load new methodological approaches and pick and choose methods.

Jordan Schneider: If you had a year to personally run your own RAND research pod, what topic would you choose?

Jason Matheny: I’d choose something like a strategic look at how we can put guardrails on AI and synthetic biology, comparable to the guardrails we put on nuclear technologies that can either be used for peaceful nuclear energy or for producing nuclear weapons.

We’ve thought deeply about how we create those guardrails for nuclear technologies so that we can get the benefit and reduce the risk. What are comparable guardrails for AI and synthetic biology?

If we didn’t have to worry about catastrophic risks, I’d want to spend more time thinking about how to design and deploy mechanisms for making better analysis and better decisions. I really loved Phil Tetlock and Barbara Mellers’s tournament on judgments around geopolitical events. Doing more of that would be something I’d love to see.

Unknown Unknowns

Jordan Schneider: I want to come back to decision-making under deep uncertainty. I’m worried about it being a very difficult thing for the voting public or politicians to internalize and accept. How does RAND communicate difficult, nuanced research in a way that’s palatable for voters and policymakers?

Jason Matheny: I’ll give an example. We have a research effort at RAND on something we call “truth decay.” We’re trying to analyze the general trend toward the erosion of facts and evidence used in policy debates.

There have been different measures of political polarization over the last few decades. There’s a decline in the way facts and evidence are used in debating policy substance, particularly in Congress. We’ve been doing a lot of work to understand the drivers of this and its remedies.

Even asking the question can be politicized. RAND is pretty nonpartisan. I couldn’t speculate on the broad political views of most of my colleagues I interact with every day. It’s a point of pride for RAND. But in some cases, even asking the question about what’s happening to facts and evidence in policy debates is viewed as politicized.

With decision-making under deep uncertainty, you want to find a set of methods that can help you make robustly good decisions, even when you’re radically uncertain about key parameters.

One example of this is the timelines for different AI capabilities. We don’t really know because researchers themselves are divided.

It was pretty stunning when the “Statement on AI Risk” from the Center for AI Safety was published. I saw the greatest degree of unanimity that I’ve seen among researchers describing AI as an extinction risk.

If you go a bit deeper and ask about extinction risk timelines and specific scenarios we should be worried about, you’re going to see a much greater level of disagreement. Is it AI applied to the design of cyberweapons? AI applied to bioweapons? Is it disinformation attacks? Is it the misalignment of AI systems?

But to see most of the top 50 AI researchers in the world — most of the authors of the canonical AI papers over the last decade — agreeing on this general point about AI posing potentially such a severe risk, that’s the sort of thing we should probably be thinking about.

Jordan Schneider: But Jason … you’re the Phil Tetlock prediction markets guy. You know we can’t simply defer to expert political judgments!

Jason Matheny: The main finding from Philip Tetlock and Barbara Mellers’s work in forecasting tournaments isn’t that expertise doesn’t matter. It’s that expertise tends to be distributed in ways that might not always be correlated with conventional markers.

We organized this large global geopolitical forecasting tournament at IARPA, the largest of its type. It involved tens of thousands of participants, eliciting millions of judgments. What was really striking is if you want to make really accurate forecasts, you should involve a lot of people and basically take the average.

If you want to do even a little bit better than the average, you can assign more weight to people who have been more accurate historically, or find groups of so-called “superforecasters” who have a track record of accuracy. But even just taking the unweighted average of a large pool of people is a pretty great improvement, outperforming the deliberation of a group of assessed experts.
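As an illustrative aside, the two aggregation ideas described here (a plain average, then extra weight for historically accurate forecasters) can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the method used in the IARPA tournament: the function names, the inverse-Brier weighting rule, and the sample numbers are all hypothetical choices made for illustration.

```python
from statistics import mean


def unweighted_average(forecasts):
    """Plain mean of probability forecasts, each in [0, 1]."""
    return mean(forecasts)


def brier_score(prob, outcome):
    """Squared error of one probability forecast against a 0/1 outcome.

    Lower is better; 0.0 is a perfect forecast.
    """
    return (prob - outcome) ** 2


def accuracy_weighted_average(forecasts, past_brier_scores, eps=1e-6):
    """Weight each forecast by the inverse of that forecaster's past Brier score.

    Assumption: inverse-Brier weighting is just one simple, hypothetical way
    to give historically accurate forecasters more say.
    """
    weights = [1.0 / (score + eps) for score in past_brier_scores]
    total = sum(weights)
    return sum(w * f for w, f in zip(weights, forecasts)) / total


if __name__ == "__main__":
    # Hypothetical forecasts from four people for the same yes/no question.
    forecasts = [0.2, 0.4, 0.6, 0.8]
    print(unweighted_average(forecasts))

    # Two forecasters; the first has been more accurate in the past
    # (lower Brier score), so the pooled estimate leans toward 0.2.
    print(accuracy_weighted_average([0.2, 0.8], [0.1, 0.4]))
```

The weighted version only helps if past scores are available and predictive; with no track record, the plain average is the natural fallback.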

If you look at some of the surveys that have been done on AI risks, it’s pretty sobering. Robust decision-making in this case means thinking about the kinds of policy interventions that would help on different timescales, even if we don’t have a great detailed sense of what the specific risks are.

For example, are there policy guardrails that can help reduce AI risk, even if you don’t have enough details about the specific risks? Some suggestions might include improving red teaming and safety testing, investing in safety research or lab security, and thinking carefully about open-sourcing, because that’s something you can’t really take back if you detect some significant security issue or safety issue after a code release.

There are a lot of these robust approaches to policy that are helped by the decision-making under deep uncertainty approach — cases where we don’t have precise probability forecasts.

Jordan Schneider: It just seems like people in general and politicians in particular have a hard time internalizing and accepting that the future is uncertain. But there are many possible futures, and we don’t know how things like US-China competition or AI and employment will play out.

Recent congressional hearings on AI seem to follow a pattern we saw with social media. It’s very linear framing. Folks are not internalizing the potential for exponential increase in the power of these platforms.

Jason Matheny: You’re right. There is a general challenge in appreciating exponentials, even just in life. It’s just hard for all of us to see if we’re actually part of a hockey stick curve on an exponential, because it doesn’t look that way at first.

We’re just not good at grasping that. Our brains didn’t evolve to be able to understand changes that happen this rapidly. We see this not just in AI policy, but also in policy around synthetic biology — something that has its own version of Moore’s law happening right now, its own exponential improvements and effects.

We saw during the early part of the pandemic that it’s hard to appreciate doubling times when you have infections that spread through self-replication and increase exponentially. We still think linearly about this. We sort of think, “Well, we’ve got weeks to work this out.” But we’ve really only got days at the early part of a pandemic.

It’s the same thing with AI, cyber, and bio. They share this general property of self-replication and potentially self-modification. That makes policymaking especially challenging.
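As an illustrative aside, the gap between linear intuition and exponential doubling can be made concrete with a little arithmetic. The sketch below is a toy calculation, not an epidemiological model: the starting case count and the three-day doubling time are hypothetical numbers chosen only to show the shape of the curve.

```python
import math


def cases_after(days, initial_cases, doubling_time_days):
    """Exponential growth: the count doubles every `doubling_time_days`."""
    return initial_cases * 2 ** (days / doubling_time_days)


def linear_projection(days, initial_cases, doubling_time_days):
    """What a linear thinker expects: keep adding cases at the pace
    observed over the first doubling period."""
    daily_increase = initial_cases / doubling_time_days
    return initial_cases + daily_increase * days


def days_to_reach(target_cases, initial_cases, doubling_time_days):
    """Days until exponential growth reaches `target_cases`."""
    return doubling_time_days * math.log2(target_cases / initial_cases)


if __name__ == "__main__":
    # Hypothetical scenario: 100 cases, doubling every 3 days, 3 weeks out.
    print(cases_after(21, 100, 3))        # exponential estimate
    print(linear_projection(21, 100, 3))  # linear estimate
    print(days_to_reach(1_000_000, 100, 3))
```

Under these assumed numbers, three weeks is seven doublings, so the exponential estimate is 128 times the starting count while the linear projection is only 8 times it.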

Life Finds a Way

Jordan Schneider: Biosecurity risks don’t seem to resonate with people as much, even though we just had a pandemic. How can we get voters and policymakers to think more deeply about this area?

Jason Matheny: Concern about this goes back decades. You had folks sounding the alarm on our vulnerability to infectious diseases going back even before the signing of the Biological Weapons Convention.

This is part of the point that Matt Meselson was making to Kissinger and Nixon for why we needed a biological weapons convention. We’re a highly networked society. We’re generally contrarian, so we don’t necessarily follow public health advice quickly as a society. We might be asymmetrically vulnerable to infectious diseases, whether natural, accidental, or intentional.

What’s striking is that — despite decades of prominent biologists, public health specialists, and clinicians sounding the alarm on our vulnerability — we have not invested in the things that are going to make the biggest difference for resilience and mitigation of pandemic risk.

Even after having over a million Americans die in a pandemic, we still have not made the kinds of investments that are going to meaningfully reduce the likelihood of future pandemics.

There is a pandemic fatigue right now in the policy community. Much was spent on addressing the economic impact of the pandemic. Some are saying, “Aren’t we done with this as a topic? Can’t we move on?” Of course we can’t, because the probability of something at least as severe as COVID happening again is non-negligible.

We’ve seen how costly it can be. Over a million deaths and over $10 trillion of economic damage — and this was a moderate pandemic with an infection-fatality rate of less than one percent.

We know of pathogens with infection-fatality rates that are much higher. Some are as high as 99%. We face the prospect of highly lethal, highly transmissible viruses with longer presymptomatic periods of transmission, something that makes viruses particularly dangerous.

Those characteristics now are amenable to engineering because of advances in synthetic biology. The risks of an engineered pandemic, whether intentional or accidental, have only grown.

The 1918 influenza, for example, killed somewhere between 50 and 100 million people in twelve months. It’s not clear that we would be any more capable of preventing such a pandemic today.

RAND on China

Jordan Schneider: You mentioned recently that you were excited about potentially setting up an analytic effort that takes a multidisciplinary approach to understanding China. You only have a dozen or so deep China experts on staff. How can RAND repeat its Cold War success in Soviet studies when it comes to China analysis today?

Jason Matheny: We did the math on this recently as part of an assessment of our China work. We have closer to about thirty China specialists at RAND. I still think it’s too small.

At the height of the Soviet studies program at RAND, we had a little under 100 Soviet specialists. We need something of that scale for China.

China studies has to be an interdisciplinary research agenda. It has to involve political scientists, economists, statisticians, linguists, sociologists, and technologists. RAND has comparative advantages here because of our scale and our disciplinary breadth. Having 30 China specialists might already make us the largest effort focused on China, especially in terms of military and economic analysis. But we need to be doing more.

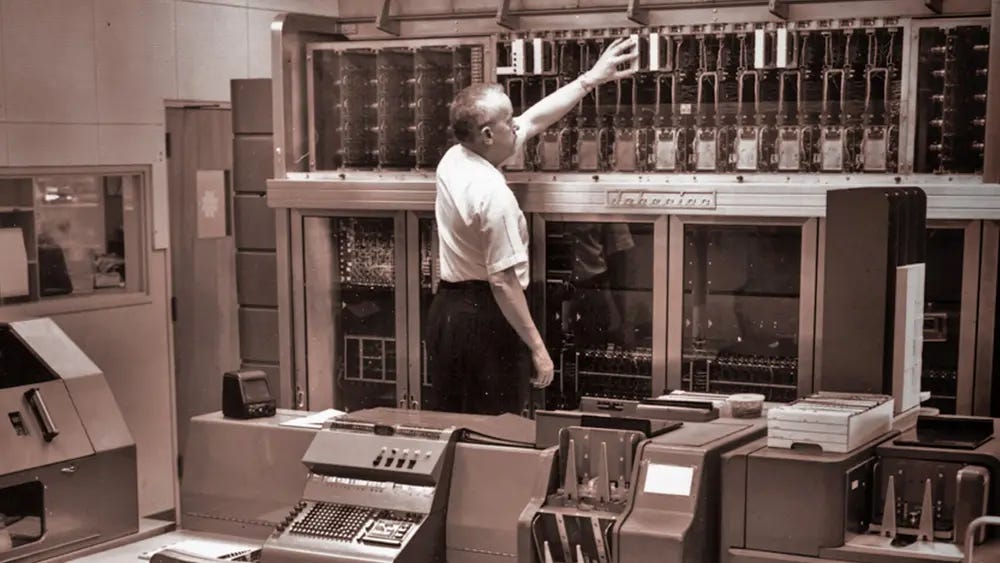

The Soviet studies program at RAND is an interesting model. We had what was then the largest analytic effort in the US.

A large-scale effort with demographers, economists, and linguists focused on things like China’s economy, industrial policy, bureaucratic process — really understanding China’s internal decision-making and closely analyzing the type of advice Xi Jinping is probably receiving and the biases embedded within that process — that is going to be really vital.

We need to be doing more of that. We have some of the essential resources for doing that at RAND. We need to build the others.

Jordan Schneider: What are the limiting factors currently?

Jason Matheny: One of them is flexible funding. We do a lot of military analysis of China. There is much less federal funding for understanding China’s bureaucracy.

The US Intelligence Community was slow to pivot toward China as an increased focus of analysis. Even within the China portfolio, really thinking about bureaucracy as a subject of intelligence analysis has been slow. Science and technology analysis has been faster, although not as fast as you or I might like.

But really understanding the forms of decision-making — the potential failure nodes in decision-making within Xi’s inner circle — is something really important for us to understand.

Where is Xi getting information about emerging threats and emerging risks? How is that balanced across bureaucratic politics within the PRC? How does it shape the mix of decisions on new technology deployment or Taiwan timelines? Increasing our clarity around those questions is going to be really important.

These are the kinds of questions that are harder to get government funding for. They often seem more abstract. Do we really need sociologists and political scientists trying to analyze the PRC bureaucracy?

Probably the most important work RAND did in Soviet studies during the Cold War was actually focused on these questions. How does the Kremlin actually make decisions? What are its red lines? What are the risks of escalation emerging from this strange bureaucratic politics? These are important.

By default, the research on bureaucratic politics is understudied and underfunded.

Reading Leviathan’s Entrails

Jordan Schneider: The Operational Code of the Politburo, the 1951 classic by Nathan Leites, is worth revisiting.

Books like that on China are the ones I like most. Researchers just interview 200 officials or so and try to piece together a little corner of how the Chinese party-state works.

But I don’t know. Only about one comes out every year, which is really depressing. It’s only going to get harder, if not impossible, to do that research.

Jason Matheny: That’s right. I’m worried our data sources are dwindling.

The analysis of bureaucracies is really important for thinking about strategy.

That’s one thing I really appreciated about Andy Marshall after he moved from RAND to run the Office of Net Assessment at the Pentagon. Thinking about bureaucracies as an important variable in decision-making was a common analytic line of effort that Andy pursued. It really influenced a lot of his thinking about the risks of nuclear policy, for example.

He was accustomed to thinking about how nasty things can get in the world, not because of rational strategies, but because of different kinds of bureaucratic pressures that happen within governments.

A lot of national security thinkers will say it wouldn’t be rational for a state program to do X or launch a nuclear attack or release a biological agent that could have blowback on its own population.

Andy Marshall believed we regularly underestimate the risk and pain tolerance of states and political leaders, especially autocrats. We underestimate the peculiar effects of bureaucracies on decision-making.

Take biological weapons as an example. The Soviet Union had a very large biological weapons program. Each of the laboratories in the program had its own interest in expanding programs. Those programs created prestige. They created job security. They created a lot of advantages for those bureaucrats who could get promotions.

In order to do all that, you need to give scientists interesting problems to work on so your lab can attract the best scientists. You’ve got bragging rights then.

Some of the world-ending pathogens the Soviet biological weapons program worked on weren’t really the product of strategic analysis. Rather, they were something bureaucrats could brag about.

They were things that would be technically sweet for the biologists to work on. If you want to bring together a bunch of smart biologists and say, “Build nasty weapons,” it can be a pretty irresistible intellectual property to have this modified pox virus.

So, they end up with this arsenal that doesn’t make any strategic sense. It only makes sense given the sociology of bureaucracies and technical communities.

More sociological analysis of these communities could end up being really important for what happens in the next few decades. It’s really understudied.

Jordan Schneider: You have folks like Dr. Ken Alibek, who led a bio lab in the Soviet Union, talking about why the USSR, on the one hand, was helping to rid the world of smallpox while at the same time creating the killer smallpox that could end humanity.

When we’re talking about smallpox, plague, or biological weapons, they’d be used in total war.

The rationale which allowed the downstream bureaucrats to flourish was that they understood the US to be evil. “They want to destroy our country, so we need to do everything in our power to create very sophisticated, powerful weapons to protect our country.”

Once you have that premise, all the other stuff follows. It’s scary. We’re entering a timeline when that type of logic ends up having a weight it hasn’t really had for the past thirty years.

Jason Matheny: The risk of biological weapons being used intentionally or accidentally is increasing. We’ve seen accidents in biological weapons labs before that were close calls.

The 1979 Sverdlovsk anthrax leak was a close call. It was anthrax rather than smallpox. But the Soviet Union was developing smallpox that was resistant to vaccines and antivirals.

There’s lots of nastiness, unfortunately, in biological weapons programs that exist today — in labs that exist today that are making these sorts of weapons.

We underestimate the risk of a lab accident, or of a miscalculation by a desperate leader (maybe an autocrat who’s getting bad advice) or by an insider within a lab who decides to use this pathogen because of some nationalist fervor.

It’s a place within the security landscape that is just filled with risk.

Below is the final section of our interview, where we get into:

Why policymakers need more Tetlockian knowledge on human judgment and decision-making.

The upsides and downsides to tech moonshots, and why OpenAI leads the pack.

Jason’s White House years, and his takeaways on the non-stop S&T policy-making.

How art informs our thinking on tech and public policy.