Software Abundance for Government

Can AI Rebuild the Administrative State?

Russell Kaplan, co-founder of Cognition — the company behind Devin — and previously at Scale AI and Tesla, joins the podcast to discuss what “software abundance” could mean for government.

Our conversation covers…

Why government software is so broken — Despite spending over $100B annually on IT, critical systems at agencies like the Social Security Administration and U.S. Department of the Treasury still run on decades-old code that few engineers know how to modify.

How two-year software projects become three-week ones — why AI agents are particularly good at the painful migration and modernization work engineers tend to avoid.

What “software abundance” actually means — AI agents can handle the tedious work of switching systems 24/7, collapsing the switching costs, and forcing software vendors to compete on value rather than locking customers into outdated systems.

AI for cybersecurity — From triaging massive vulnerability backlogs to automatically fixing CVEs, AI will be essential for defending critical infrastructure as attackers gain the same tools.

The coming “post-coding” world — As models converge in capability, the key bottleneck shifts from writing code to understanding problems, reviewing systems, and deciding what should be built in the first place.

Plus, the future of procurement in an AI world, fraud detection in government datasets, the DMV as a software problem, and why Kaplan thinks the real skill of the future is knowing which problems matter.

Thanks so much to Cognition for sponsoring this episode.

Why Government Software is Bad

Jordan Schneider: What is wrong with software in government?

Russell Kaplan: We have a lot of problems with software in government, despite the government actually being the source of much software innovation for a long time. Today, the state of the world is pretty sad.

To put some numbers on it, more than $100 billion a year is spent on IT for the US government. A lot of these systems are ancient. The GAO did a study finding that in the 2010s, there were 10 critical legacy systems that needed modernization. Only three of them have even started the process. As a country, we’re spending a lot of money and not getting the same results that we see in the private sector.

What’s happening now with AI and software engineering is changing the private sector, but I’m personally really excited about how much it could change for the country as well. It’s actually really important for the next generation of the United States to get this right.

Jordan Schneider: You mentioned the $100 billion a year number — what does one dollar get you in the private sector, and how does that compare to some federal or state department spending that money?

Russell Kaplan: In the private sector, the way we buy software is — we have a problem, we see what’s the best tool on the market for that problem, and we buy it, whether it’s a SaaS solution for my CRM or infrastructure for scaling my database. The market tends to be more efficient.

For the government, it’s a different story. It’s really challenging for the government to purchase software directly. There’s a much higher compliance and regulatory hurdle for software vendors to even start working with the government. We faced this at Cognition — getting to FedRAMP High was a journey. But even once you’re there, there’s a lot of indirection. Many of these systems were designed with good intent — making sure there’s no corruption, that RFP processes let government buyers get the best price. But the net result is that it’s enormously slow to get software into the government, and in particular to reuse software. A SaaS tool has a much easier time being bought by a private sector company versus a government agency, which often needs a much higher degree of ownership of the product they’re using.

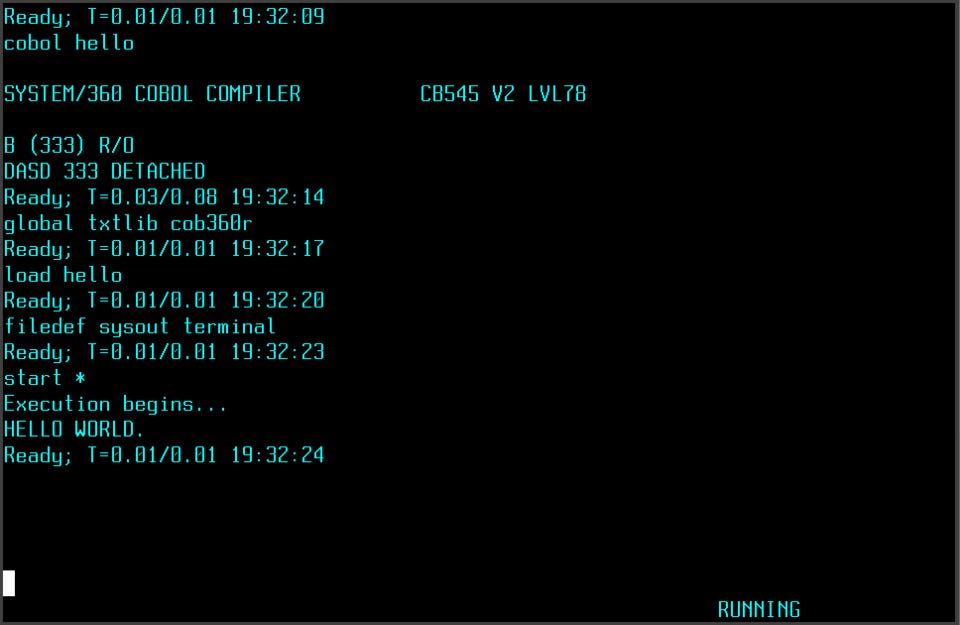

The net result is we’re still powering most of the country’s critical systems with ancient code. Tens of millions of lines of COBOL run our Treasury, our Social Security Administration — and it’s not getting better.

Jordan Schneider: Is COBOL not Lindy? What’s wrong with running a government on ancient software languages?

Russell Kaplan: The problem is that nobody knows how to write COBOL anymore. The people who wrote these systems are often no longer there when changes need to be made. There’s a small cohort of specialists who learned COBOL decades ago, still write it, and need to be brought in for any change — but there are fewer and fewer of them, and the changes get bigger and bigger. As a result, everyone’s scared to touch the big mainframe systems powering critical infrastructure for the country.

This problem exists in the private sector too. A lot of banks we work with at Cognition, large health insurers, airlines — they’re running these large-scale systems. To give COBOL credit, it’s a very performant language, really efficient and fast. It’s working, so people don’t want to mess with it. The problem arises when requirements change — it’s really hard to move with those requirements, to update them. That’s where the slowdown comes.

Jordan Schneider: For the uninitiated, why are there new programming languages, and what do they enable beyond just having more people who know Python versus stuff invented in the ’60s and ’70s?

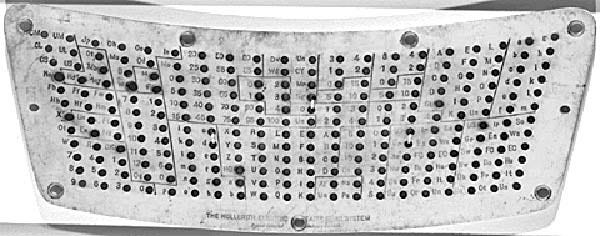

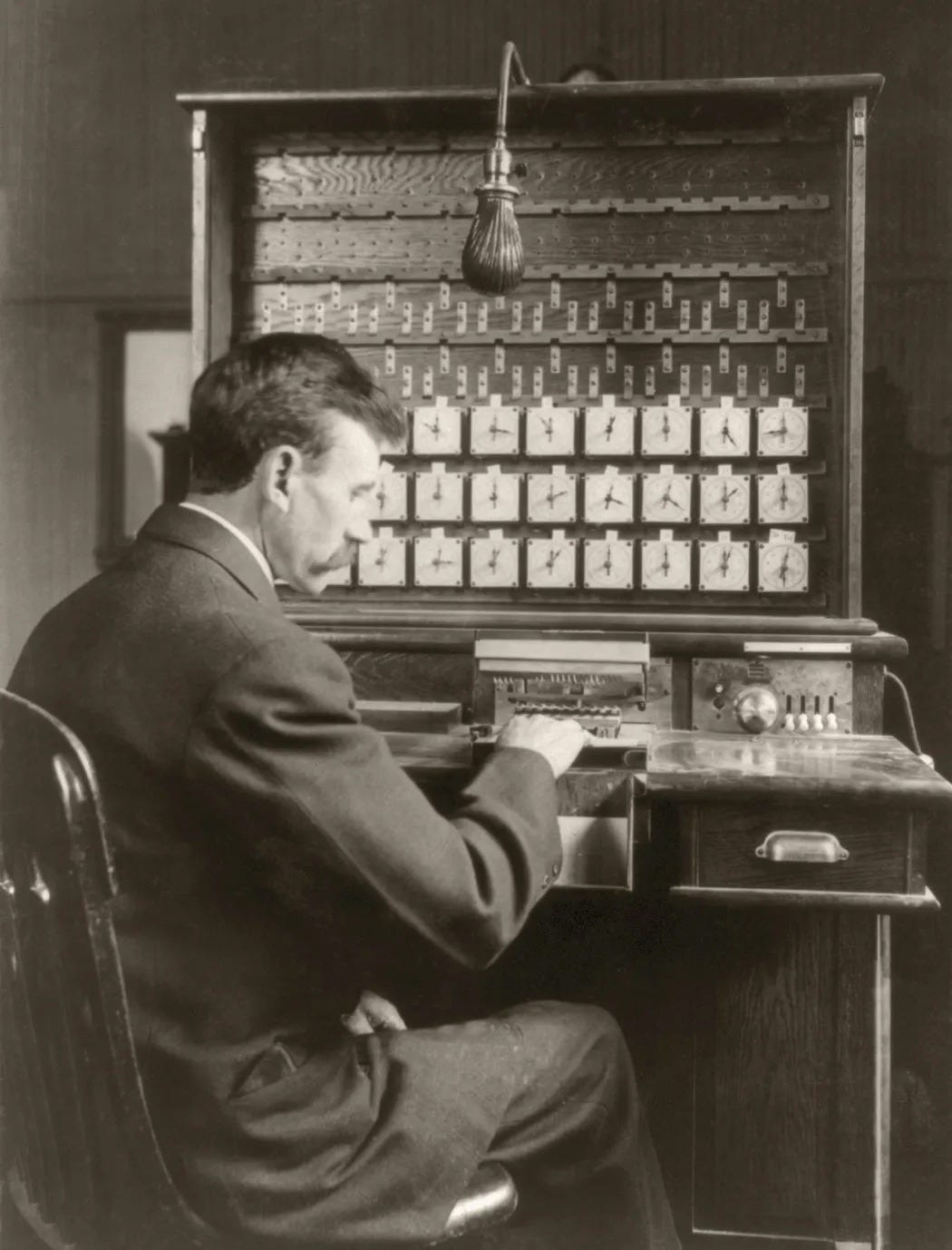

Russell Kaplan: A brief history of programming languages — even before COBOL, people were writing assembly. In 1948, assembly became popularized, which was a big upgrade from the previous era of punch cards. The 1890 census was the first time punch cards were used in a real production setting. The government realized that counting the census manually was going to take more than 10 years, so they literally weren’t going to get the job done. They put out a call for technology, and in 1890, punch cards solved the problem.

Jordan Schneider: That 1880s baby boom — the straw that broke the camel’s back.

Russell Kaplan: It really was. Too many people, not enough counters. It was going to take 12 or 13 years doing it the old-fashioned way. Punch cards are a very simple representation — a hole or not a hole representing a 1 or a 0 as a data storage format. Assembly, COBOL, and modern languages like Python and Java all walk up the ladder of abstraction, making it easier to tell your computer what you want it to do. You need increasingly less arcane specialized knowledge and increasingly more intuitive interfaces. AI is actually the next logical rung on the ladder. It’s not some fundamentally structurally different thing when it comes to programming — it’s telling your computer what you want it to do, but in English, in a way that’s natural for everyone.

Jordan Schneider: The older programming languages were optimizing for the constraints of their particular generation of technology — more severe memory, storage, and processing restrictions. In today’s languages, pre-2025, you still needed a person to sit down and write every line of code. That’s not really a thing so much anymore.

Russell Kaplan: The hardware teams work so hard to optimize the chips, to keep pushing Moore’s Law. And then lazy software engineers like myself stop worrying about garbage collection and memory management and relish the productivity gains without worrying about efficiencies. We do get more efficient, but typically most of the hardware performance gains are captured by making software easier to write.

One thing relevant for both government and the private sector — AI might flip this, where it’ll be able to write really optimized assembly or binary directly because it doesn’t need the intermediary interface that a human can understand.

The Coming Age of Software Abundance

Jordan Schneider: Beyond insanely performant code, what else can we expect in a world of software abundance?

Russell Kaplan: The most important thing is that software is going to start flowing more like water: easy to move around, easy to change, easy to get more of. A lot more will be created as a result.

If you look at the structure of the SaaS industry and software as deployed in government and private sector, a lot of how things are shaped is because of how hard it is to change things. Migrating off of a database you’ve installed and designed is a massive project. If you want to buy another company, one of the most complex parts has historically been integrating the software, infrastructure, IT systems, and data storage. There’s sprawling complexity, and a lot of vendors use switching costs to build a moat around their businesses — they land the contract, set up, discount the first year, then make it impossible to leave.

A big structural change is about to happen in the economy, and you can already see some reactions in the public markets. That strategy doesn’t work anymore. You can’t hold your customers hostage with switching costs when AI is going to do the switching and work on it 24 hours a day without getting bored of a really tedious process. The ability to move from whatever you have to the best tool for your problem is going to lead to a lot of changes.

Jordan Schneider: What’s Cognition doing to make that future possible?

Russell Kaplan: We started in January 2024 — about two years old at the time we’re recording. We began as a research lab focused on reasoning and long-term planning for software engineering. At the time, there was great progress on chatbots, but what about making things that could think for a really long period and apply that to software engineering?

We launched Devin, the AI software engineer, in March 2024. That was the first real draft of what an autonomous agent should look like — more like a digital coworker you delegate work to, as opposed to a copilot. Now that concept is extremely popular in software.

When you think about the complexity of switching costs, migrating, and modernizing, there’s an architecture part, which is deciding what’s wrong with the status quo and where to go, and that is still done by humans. But once that decision is made, the execution is often toilsome: paying down tech debt, refactoring file after file of old code. That’s the stuff engineers really don’t love to do.

Cognition provides Devin as an AI software engineer that people can deploy against their code to quickly transform it, improve it, modernize it, upgrade it. At this point we’re used by a lot of the Fortune 500, by global organizations, really focused on large complex systems that require serious amounts of existing context to make useful changes.

Jordan Schneider: Let’s do the compare and contrast with Claude Code.

Russell Kaplan: By the way, I think Claude Code is awesome. The explosion of developer tools in AI and software engineering has been crazy to see — not just Claude Code, but Codex, other IDEs, CLIs. The interface is constantly changing.

Where Cognition sits is we have a platform, we have an IDE — we acquired Windsurf, the agentic IDE, in 2025 — and we built Devin, the autonomous agent. The biggest difference between Devin and Claude Code is really whether you’re running in the cloud or locally. Can you spin up the agent in parallel in a fleet, or is it running on your machine? It’s a fundamental architectural difference — do you give the agent its own computer?

The way we work with companies is also pretty different. We’re less of a “here’s the tool, go figure it out” approach. We work with the largest, most complex organizations in the world. These folks don’t just have a developer tools problem — they often have a transformation problem. How do I get this major outcome done in 3 months instead of 2 years? We’ve built a large forward-deployed engineering team for our size of company, and we go work with the government and enterprises to partner together on driving meaningful outcomes.

Jordan Schneider: Why do they need that, and not just the tools? Or do we wait 6 months or a year until the technology is so good that all we need is a model to go fix everything for us?

Russell Kaplan: That’s the AGI maximalist case. If we just have the best possible model, shouldn’t everything else just happen? The answer is no.

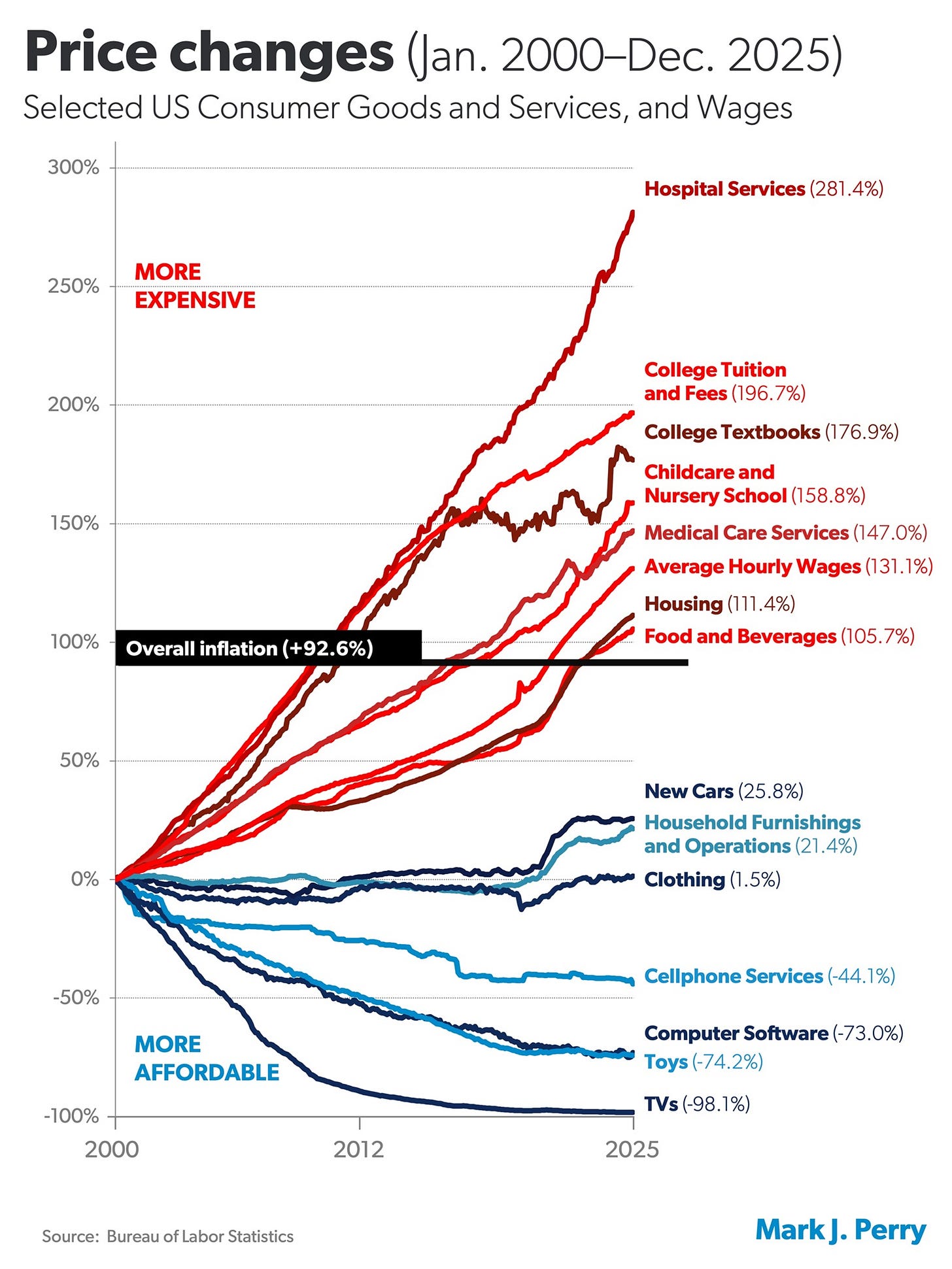

Have you seen the chart of inflation by sector over time where plasma screen TVs are massively deflationary, but healthcare and tuition keep going up? That chart is my mental model for the post-AGI future. All of the things that are intelligence-soluble get really deflated. But what you’re left with is the rest of the complexity of the real world, which is actually quite substantial. How are you even allowed to deploy in the environments you need? How do you work with the people who are ultimately in charge of these systems to drive the outcomes they want? How do you reframe and restructure the process of how technology is built or procured inside an organization?

The models are going to keep getting better and make software easier and easier to create. It’s all the other problems that get left behind.

Jordan Schneider: We’re recording this the afternoon of Friday, February 27th. There are two and a half hours left before Pete Hegseth drops the anvil on Anthropic, apparently. Given that Devin can pull from all the different models, what challenges and opportunities does that give you from a product development perspective?

Russell Kaplan: If you’re the DoD, you’re certainly frustrated and worried about the decisions of any one model provider affecting your mission. Every private company has the right to say, these are the use cases we want to serve and these are the ones we don’t. Kudos to Anthropic for stating clearly what they want to do and not do.

But it raises the question — should model providers even be building the vertical tools on top? Is that the best experience for customers? If anything, we see the opposite — differentiation among models is decreasing, not increasing, over time. Frontier eval scores for software engineering benchmarks show the gap between the best models right now is less than half what it was 12 months ago. As companies spend billions and tens of billions of dollars on bigger clusters and bigger models, the models themselves are converging.

If you’re a government buyer, you typically care more about the outcome you’re driving than which model you’re using. In some ways, this gives a structural advantage to the agent labs — Cognition being one — because we’re focused on the customer problem. No matter what models exist or don’t exist, we’re going to combine them in the best way. We’ll have our own specialized models for very specific, narrow use cases, but the goal is to drive the outcome you want.

Jordan Schneider: We have a running gag on ChinaTalk about the AI mandate of heaven. Even though it’s been Anthropic’s for a hot minute, listeners will recall the world in which it was Gemini’s and OpenAI’s. I hear you on models converging in capabilities, but when I play with them, they do feel different. People talk about one being better at this or that thing for software. How do you guys figure out who to assign what work when we’re talking about Devin?

Russell Kaplan: On the mandate of heaven piece, these things are cyclical. One thing that’s interesting about software engineering in particular is that the right form factor for building software is constantly changing based on the underlying capabilities of the models.

When we launched Devin in March 2024, it was just at the edge of what was possible: having an agent you could truly delegate work to and come back. Honestly, it wasn’t even really useful for us for another 3 months after we built the prototype we shared with the world. It took about 3 months for us to use it enough internally that Devin became the number one contributor to Devin. Then there was another several-month lag before it was deployed in production settings and useful for customers.

As models improve, the form factor for how to use them keeps changing. In coding, we went from tab completion — like pressing tab in a Word doc to get the next response, but in your code editor — to a local chat experience where you can chat with your codebase and ask questions, to local agents, to now increasingly autonomous agents you can delegate work to. The form factor might look completely different again 6 months from now. The mandate of heaven is probably going to keep changing based on who is first or best at the next form factor. Every new form factor is a new front to battle.

As for evaluating the models themselves, we built an internal comprehensive evaluation suite. The original draft was called “Junior Dev Eval” — could these models act like junior developers? We have a fork of it now that’s more like a “Senior Dev,” because the models keep getting better. We work with every lab. Before they release models, we run our evals, give them feedback, and say where they’re strong and weak and how they can improve. We have a great partnership with every lab on this. Many of them have told us we have the best private evaluation suite for agentic coding tasks that’s independent from a model provider.

We care a lot about evals because our customers want the best models. The other interesting data point — no matter what task you give, eval scores are consistently worse if you constrain the agent to one model versus letting it use multiple. The differences are real — whether it’s personality, macro context understanding, or details, these little differences add up.

Jordan Schneider: That’s really interesting. Is there a structural reason for that staying true forever? If we’re holding equal the distribution of AI researcher talent, and everyone has the same amount of chips across the 3 or 4 labs, what is the reason why things are spiky in one direction versus another?

Russell Kaplan: The structural equilibrium is one of model convergence — capabilities increasingly similar, basically similar levels of performance in every domain. So why would that happen?

First, there are the scaling laws. It takes exponentially more spending to get linear gains on any benchmark. At small scale, it’s easy for one firm to spend 100 times more than another, say $100 million versus $1 million. But once you’re all spending hundreds of billions of dollars, it’s hard to get a multi-order-of-magnitude lead over your competitors.
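To make the arithmetic behind that argument concrete, here is a stylized sketch. It assumes, purely for illustration and not as a measured law, that a benchmark score S grows with the logarithm of training spend C. The constants a and b and the $200B versus $100B comparison are hypothetical.

```latex
S(C) = a + b \log_{10} C
\quad\Longrightarrow\quad
S(C_2) - S(C_1) = b \log_{10}\frac{C_2}{C_1}

\text{\$100M vs. \$1M: } b \log_{10}(100) = 2b
\qquad
\text{\$200B vs. \$100B: } b \log_{10}(2) \approx 0.30\,b
```

Under that assumption, a 100x spending lead buys a gap of 2b, while outspending a rival two to one at the frontier buys only about 0.3b, which is one way to read the convergence claim.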

There’s also the practical reality that non-competes are unenforceable in California, and people move from one lab to another all the time. The half-life of a proprietary algorithmic insight is probably about 3 months. Even within the labs, you have one person working at OpenAI and their partner working at Anthropic. The half-life of proprietary IP in Silicon Valley is short.

Once the models roughly converge, maybe some personality differences persist — not capabilities, but personality. But the last point relevant for every task — we have this mantra in Silicon Valley that “we always want more intelligence.” We’ve got to build clusters of compute in the galaxy to harvest energy from every star. There are use cases for ever-increasing intelligence. But this ignores the fact that for any given application domain, you often reach a threshold of intelligence saturation where it’s enough.

Today, if you said, “Let’s build a simple static frontend site for ChinaTalk,” any frontier model would do that well. Once you’ve hit intelligence saturation for a given task, you don’t care which model you’re using. You care about whether it’s fast and cheap. Increasingly, more domains are going to see this intelligence saturation, at which point the model matters less and the interface, the experience, and how it drives outcomes end-to-end for your company or government organization matter more.

AI Agents for Legacy Systems and Cybersecurity

Jordan Schneider: Let’s talk about driving outcomes. Before we get to the government stuff, what are some enterprise case studies that illustrate what 2025–2026 models are capable of powering through Devin?

Russell Kaplan: The thing that’s surprised and impressed me most is the ability of large organizations to take autonomous agents and do massive multi-year projects in weeks or months.

Here’s an example. A law changed recently in Brazil requiring taxpayer ID numbers to become alphanumeric instead of purely numeric. Think of it as Brazil’s Y2K — it’s called the CNPJ migration. Every system in the country that tracks corporate taxpayer IDs had to go alphanumeric with a different, longer format. Banks, healthcare providers, government agencies — it’s a huge problem.
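For readers who want to see why a format change like this touches so many systems, here is a minimal sketch of a validator. It assumes the publicly described scheme as I understand it: 12 alphanumeric base characters followed by 2 numeric check digits, with each character valued at its ASCII code minus 48 and a modulo-11 check. The function names and the legacy pattern are illustrative, not taken from any bank's code.

```python
import re

# Assumption: old systems accepted only 14 digits.
LEGACY_PATTERN = re.compile(r"^[0-9]{14}$")
# Assumption: new layout is 12 alphanumeric characters plus 2 numeric check digits.
CNPJ_PATTERN = re.compile(r"^[0-9A-Z]{12}[0-9]{2}$")


def strip_mask(cnpj: str) -> str:
    """Remove the usual punctuation (dots, slash, hyphen) and upper-case the rest."""
    return re.sub(r"[./-]", "", cnpj).upper()


def check_digit(chars: str) -> int:
    """Modulo-11 check digit with weights cycling 2..9 from right to left."""
    total = 0
    for position, char in enumerate(reversed(chars)):
        weight = 2 + position % 8
        total += (ord(char) - 48) * weight  # '0'->0 ... '9'->9, 'A'->17 ... 'Z'->42
    remainder = total % 11
    return 0 if remainder < 2 else 11 - remainder


def is_valid_cnpj(cnpj: str) -> bool:
    """Validate both all-numeric and alphanumeric IDs under the assumed scheme."""
    raw = strip_mask(cnpj)
    if not CNPJ_PATTERN.match(raw):
        return False
    first = check_digit(raw[:12])
    second = check_digit(raw[:12] + str(first))
    return raw[12:] == f"{first}{second}"


if __name__ == "__main__":
    sample = "12.ABC.345/01DE-35"
    print(is_valid_cnpj(sample))                          # validator accepts letters
    print(bool(LEGACY_PATTERN.match(strip_mask(sample))))  # digits-only check rejects it
```

Any code path that stores the ID as an integer or checks it with a digits-only pattern like `LEGACY_PATTERN` would reject the new IDs, which is why the change fans out across so many systems.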

We work with the largest financial services organization in Brazil, called Itaú, and they had a 2-year plan to become compliant with this change. It involved upgrading COBOL mainframes, upgrading processing systems. Conceptually it’s not complicated, but when you have thousands of different systems that all interact in complex ways, it gets messy. They were able to use Devin to get the bulk of that project done in 3 weeks instead of 2 years, then clean up the edges however they wanted. Seeing multi-year projects collapse to multi-week timelines has been really impressive.

Jordan Schneider: Is that where we are today — the really painful migration stuff where it’s transposing A to B in a more modern and functional way? Is that the current sweet spot for AI and software?

Russell Kaplan: Anything you can validate automatically is a sweet spot. Here’s an example of why I’m even working on Cognition. Before this, I was at Scale AI, which provides data to the frontier labs. We were doing labeling at scale with human experts saying which model response was better, providing reinforcement learning from human feedback. It kept getting harder because every human response needs to be smarter than the model’s own intuition to provide useful signal to make the models better. As models improve, that gets really hard to scale. We were finding PhDs in chemistry and true domain subject matter experts in every niche in the world trying to eke out better performance from these models.

In software, there’s a big difference — you can just run the code, compile it, test it. If it works or doesn’t, that’s signal you can use for reinforcement learning. Every application — whether government or private sector — where you can build an automatic feedback loop, that’s the key enabler to success. Migrations are a good example because you can build tests to say, how should the system behave? Does the new system behave the same way as the old one?
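The "does the new system behave the same way as the old one?" check can be sketched as a differential test. This is a minimal illustration under stated assumptions: both formatting functions are hypothetical stand-ins for a legacy routine and its rewrite, not real migrated code.

```python
import random


def legacy_format_id(taxpayer_id: int) -> str:
    """Stand-in for the old system: zero-pads a numeric ID to 14 characters."""
    return str(taxpayer_id).zfill(14)


def modern_format_id(taxpayer_id: int) -> str:
    """Stand-in for the rewritten system, which must match the old behavior."""
    return f"{taxpayer_id:014d}"


def find_mismatches(old, new, inputs):
    """Run both implementations on every input and collect any disagreements."""
    mismatches = []
    for value in inputs:
        expected, actual = old(value), new(value)
        if expected != actual:
            mismatches.append((value, expected, actual))
    return mismatches


def sample_inputs(count: int, seed: int = 0):
    """Fixed edge cases plus seeded random IDs so every run is reproducible."""
    rng = random.Random(seed)
    edge_cases = [0, 1, 99_999_999_999_999]
    return edge_cases + [rng.randrange(10**14) for _ in range(count)]


if __name__ == "__main__":
    failures = find_mismatches(legacy_format_id, modern_format_id, sample_inputs(1000))
    print("behavior preserved" if not failures else f"{len(failures)} mismatches")
```

The list of mismatches is the automatic feedback signal: an agent can keep editing the new implementation until the list is empty, without a human judging each attempt.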

Jordan Schneider: Can we talk CVE (common vulnerabilities and exposures) mitigation for a second?

Russell Kaplan: A lot of people are worried about security and AI, and the worries are real. People are using AI in ways they haven’t before, and attackers are discovering vulnerabilities in really novel ways that would have been hard to do manually. But the defenders are now fighting back with AI too.

We have great existing tooling for scanning and detecting vulnerabilities via traditional static analysis — SonarQube, Veracode, Snyk — anything that can scan code and say, what’s my risk surface area? The problem that emerged a few years ago was that you’d run those scans and get thousands of alerts, sometimes tens of thousands, sometimes hundreds of thousands or even millions at really large organizations. Large organizations today have hundreds of thousands of open alerts saying “this might be insecure here.” That’s terrifying, but there just aren’t enough people to read all of those and staff the team to fix them. There are more problems than people.

What we’re seeing with Devin is that this is a really well-suited use case. You’ve got tons of alerts, it’s toilsome, and they need to be triaged — AI can do the triaging quite well. Some of the largest financial services firms in the world apply Devin to every single vulnerability caught in their entire codebase before it even goes to a human. Devin tries to auto-remediate, and right now we’re at roughly 70% fully automatic remediation — the code change suggested by Devin can be accepted and approved in one click, no changes needed. That should only go up as models keep getting better.
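As a rough picture of what triaging a scanner backlog involves, the sketch below groups duplicate alerts and orders them by severity. The `Alert` fields, the severity labels, and the ranking rule are all assumptions made for illustration. They are not the output format of any particular scanner or a description of how Devin works.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical severity labels; lower rank means more urgent.
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass(frozen=True)
class Alert:
    rule_id: str
    file_path: str
    line: int
    severity: str


def triage(alerts):
    """Group alerts by (rule, file), then order groups by worst severity, then size."""
    groups = defaultdict(list)
    for alert in alerts:
        groups[(alert.rule_id, alert.file_path)].append(alert)

    def priority(item):
        _, members = item
        worst = min(SEVERITY_RANK[a.severity] for a in members)
        return (worst, -len(members))  # larger groups first within a severity

    return sorted(groups.items(), key=priority)


if __name__ == "__main__":
    backlog = [
        Alert("weak-hash", "b.py", 3, "low"),
        Alert("sql-injection", "a.py", 10, "critical"),
        Alert("weak-hash", "b.py", 9, "low"),
        Alert("xss", "c.py", 1, "high"),
    ]
    for (rule, path), members in triage(backlog):
        print(rule, path, len(members))
```

Collapsing repeated findings into one work item per rule and file is what turns hundreds of thousands of raw alerts into a queue that an agent or a person can work through.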

Jordan Schneider: This is an important point. You’re not going to make critical infrastructure — whether a bank or a power plant — resilient to the degree you want, especially when AI is attacking on the other side and the cost of getting into these systems is decreasing. Your power plant or water treatment plant has had 30 years to hire software engineers to clean up this stuff and just hasn’t. The only way we get to a world with stronger defenses is something way cheaper than what the alternative has been for the past few decades. It’s cool that we’re at that point.

Russell Kaplan: Those systems don’t even need to be vulnerable to automatic AI infiltration to be at risk. On the attacker side, humans working with AI has made attackers much stronger.

There was a vulnerability a few months ago called React2Shell, a 10 out of 10 critical vulnerability. You could essentially remote control any server by sending the right network requests to a very popular library. The attacker who found this used AI tools. In fact, a product we offer called DeepWiki (our codebase intelligence product, which we give away for free for every open source repo) was used by a good Samaritan researcher to find issues in this codebase and unlock novel exploits. One of the hard parts of being a security researcher is wrapping your head around all the code inside an existing system. When AI makes it easier to ask questions about that code and summarize it, the attackers get a lot of leverage.

Jordan Schneider: Let’s talk about the “understanding the codebase” dynamic, both in your legacy corporate clients and the government ones. Why is that such a challenge to upgrading them?

Russell Kaplan: Right now these models have context windows in the million-token range. You can throw in a million tokens and reason about that correctly. But a lot of real-world production systems in enterprise and government are much larger. You can have individual codebases that are hundreds of millions or billions of lines of code, thousands of systems plugging together in different ways.

We talk about how human engineers still need to understand what we’re doing — I would argue no human engineer actually understands what’s happening inside a large organization at this point. The complexity has already escaped the constraints of one person’s brain. There’s too much stuff, too interconnected, too hard. The same reason it’s challenging for people is also challenging for AI systems, especially given current model limitations. In the coming years, models should get better at handling bigger and more complex code.

A lot of our research at Cognition has focused specifically on large-scale codebase understanding. How do you take every disparate system and look at it together, reasoning about it coherently? It’s actually a mixture of deep learning and graph algorithms — building a high-level graph of the relationships between different parts of code and different systems in an organization that scales much, much higher.

Jordan Schneider: How do we go from a million-token context window to something that can actually understand what’s going on in our complicated Brazilian bank?

Russell Kaplan: Right now, you need more than models alone. We always want underlying base models to get better, and we train our own specialized models for specific tasks, but models alone are insufficient for very large-scale codebase understanding.

What we’ve found is that if you index everything — throw billions of lines of code and many different systems into structured, machine-learned representations of the key similarities and differences across services and their relationships — you can build a graph data structure that interconnects how everything works in much higher detail. Then you still use LLMs when zooming into a specific area to say, how do these pieces fit together and solve a problem?
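A toy version of the graph idea looks like this. It is not Cognition's indexing method, which the conversation only describes at a high level. It is a plain dependency graph with a breadth-first walk, and every service name is hypothetical.

```python
from collections import defaultdict, deque


def build_reverse_graph(dependencies):
    """Map each component to the components that depend on it."""
    dependents = defaultdict(set)
    for component, uses in dependencies.items():
        for target in uses:
            dependents[target].add(component)
    return dependents


def impacted_by(change, dependencies):
    """Breadth-first walk over reverse edges: everything a change may affect."""
    dependents = build_reverse_graph(dependencies)
    seen = set()
    queue = deque([change])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, ()):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    seen.discard(change)  # a cycle could lead back to the starting component
    return sorted(seen)


# Hypothetical services; each key lists what it calls directly.
DEPENDENCIES = {
    "payments_api": ["ledger", "tax_id_validator"],
    "onboarding": ["tax_id_validator"],
    "reporting": ["ledger"],
    "mobile_app": ["payments_api", "onboarding"],
    "ledger": [],
    "tax_id_validator": [],
}

if __name__ == "__main__":
    print(impacted_by("tax_id_validator", DEPENDENCIES))
    # → ['mobile_app', 'onboarding', 'payments_api']
```

The graph answers the coarse question of which systems a change could reach. A language model would then only need to read the handful of components the walk returns, rather than the whole codebase.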

This is a really important point. In AI and software — and other AI domains too — it’s much easier to make a new thing from scratch than to make changes to an existing thing. To make changes, you first have to understand why the thing is the way it is. That “why” might be decades of historical context. Some of it’s documented in the code, some might be written in a Confluence page somewhere, some might be in one guy’s head who left the organization 5 years ago. You have this enormous history that we have to respect when making changes to real-world systems.

Jordan Schneider: Social Security is perhaps the paradigmatic example. No administration wants to do anything to stop those checks going out. That, plus the census data being so finicky, ended up enabling hundreds of billions of dollars of fraud during the pandemic because there wasn’t a more modern system that would allow visibility into where those checks were going. Thoughts on that in the government context?

Russell Kaplan: Sunlight is the best disinfectant. It’s great that the government is starting to put out public datasets and saying, “Community, go find the fraud — we’re not even going to find it ourselves.” We actually assigned Devin to the recent large dataset release from HHS to find the fraudulent patterns. Very quickly, you can tell this is a task well suited for AI. There are anomalous movements of money, patterns that don’t add up relative to the distribution. You’re going to see a lot more of that — both government agencies using AI internally to fight fraud and sharing data externally to leverage the full community.
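One simple way to flag "patterns that don't add up relative to the distribution" is a robust outlier score. The sketch below uses the median and the median absolute deviation. This is a common textbook technique chosen for illustration, not the method Devin applied to the HHS data, and the payment amounts are made up.

```python
from statistics import median


def robust_outliers(amounts, threshold=3.5):
    """Return (index, amount) pairs whose modified z-score exceeds the threshold.

    Uses the median and the median absolute deviation (MAD), which are far less
    distorted by a few extreme values than the mean and standard deviation.
    """
    center = median(amounts)
    mad = median(abs(x - center) for x in amounts)
    if mad == 0:
        return []  # no spread to measure against; this sketch flags nothing
    flagged = []
    for index, amount in enumerate(amounts):
        score = 0.6745 * (amount - center) / mad
        if abs(score) > threshold:
            flagged.append((index, amount))
    return flagged


if __name__ == "__main__":
    # Made-up payment amounts, not real data.
    payments = [120.0, 135.5, 110.0, 142.0, 128.0, 131.0, 9800.0, 125.0]
    print(robust_outliers(payments))
    # → [(6, 9800.0)]
```

A flagged payment is only a lead for a human reviewer, not proof of fraud. Real datasets would also need grouping by provider, region, or time period before a score like this means much.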

Software for State Capacity

Jordan Schneider: What are some of the dream projects? Where do you really want to stick Devin in the coming years?

Russell Kaplan: State capacity matters a lot to me, both as a citizen and as someone interested in the well-being of the United States. It’s great to see what our country is capable of at its best, but also frustrating to see what it’s hindered by at its worst. The incentive structure of how the private sector helps government, the way contracting works, the resulting lock-in and stickiness of suboptimal systems for long periods of time — it’s really frustrating. It affects us every time we go to the DMV.

I would love to see a future of high state capacity for software, where there’s not a big gap between your experience using software with the government and your experience using software in every other aspect of your life. The bits power the atoms — our interaction with the physical world is increasingly governed by software systems.

One of the things we’re trying to do at Cognition for Government is empower every agency to get where they want to go. It starts with modernization, which is the bottleneck for a lot of these problems. We work with a ton of agencies at this point — the Army, the Navy, the Treasury, NASA JPL. We have dozens of FedRAMP deployments now, and we’re just getting started. I’m really excited to help level the playing field between public sector and private sector.

Jordan Schneider: How has the experience been putting Devin in government versus financial systems or other enterprise companies?

Russell Kaplan: There are more parallels than you might expect. The largest health insurers in the world are also very sensitive to regulation and security. They also have enormously complex systems. There are actually more similarities than differences, which is one reason we decided relatively early in our company’s journey to go help the government too. It’s not a completely different set of problems. You have to work with your counterparties in different ways, but the underlying problems are pretty similar.

Jordan Schneider: What about if we’re talking about Stripe or Notion or some Silicon Valley firm?

Russell Kaplan: The Silicon Valley tech-native startups are really different. There’s a spectrum of buy versus build. What’s special about Silicon Valley is that companies are building things themselves — constantly shipping new things, making their own agents, reinventing themselves. Then you have companies whose core focus is not software. Their core focus is solving some other set of problems for their customers, citizens, or stakeholders, and software is just a tool to get the job done.

Historically, those non-native software organizations have been reliant on software vendors to bring them tools. If you play forward what’s going to happen with AI and software engineering, every company, every organization, every government agency is going to be in much greater control of its own destiny. Right now, software creation is extremely constrained. Everyone needs more engineering capacity than they have. The roadmap is long, things get cut and descoped all the time. That’s going to start to flip. The result might be that every company has the capabilities of a software company.

Jordan Schneider: What does the software-engineering-starved healthcare provider or federal bureaucracy actually need in order to taste the fruits of that future, besides a good procurement process for a little Devin?

Russell Kaplan: You joke about procurement, but the procurement process is actually one of the first beneficiaries of software abundance. People are talking about the “SaaS-pocalypse” right now — some aren’t joking. Some companies’ stocks are down 30% on this concept. The idea is overblown in some ways because we’re not all going to vibe code our own systems of record tomorrow. But the leverage has flipped, and procurement organizations are seeing the benefits.

One of our large Fortune 500 clients actually instituted a new procurement process with Devin. Before they buy any other software, they first prompt Devin to try to build the application. Devin isn’t going to one-shot a giant company’s application, but you can get a prototype. The procurement team then goes to the software vendor and says, “We want a discount.” It’s an effective negotiating tactic, and people are already getting discounts from this. In at least one case, the vendor was an infrastructure provider, and the firm decided to actually build it internally because it wasn’t that hard and the prototype worked.

This is already happening in Q1 2026. It’s going to put pressure on software vendors to deliver value. That’s what I’m personally really excited about — less rent-seeking, more product quality.

Jordan Schneider: I also wonder how many really good people you need to get to “passable.” For the past decade or so we’ve had Code for America and various rotate-into-government-for-2-years programs. On the one hand, they do good work. On the other, maybe they make a nice frontend or fix one problem. But these tools are going to give those folks a lot more leverage, to the point where one person might fix 10 or 50 problems in a 2-year cycle.

Russell Kaplan: We see that all the time. One of the fun things about software is that basically everyone always wants more of it. If you’re an individual engineer, you can ship a lot more than you used to, and you’re more empowered cross-functionally. You can get help with your designs from your agent, help scoping the product roadmap, help with integrations. Every person traditionally involved in building software is more empowered to have more ownership of the outcomes they’re driving. The product manager feels it too — “I can prototype this without the engineer or the designer.” The designer says, “I can build and scope this without either of them.”

The result is that you can get a lot more done with smaller teams, but organizations are also getting a lot more ambitious. The bulk of the change happening right now is people taking the productivity gains and asking, what more can we ship? What more can we pull in on the roadmap?

Jordan Schneider: From a policy perspective (and this is a drum I beat a lot), you need to use these tools even if you’re not a software engineer, because the possibility space of what you can do in policy is going to expand dramatically. The idea I came up with was dynamic pricing for the FAA to manage drone corridors: delivering packages, taking your kid home from daycare, whatever. Surge pricing for my daycare VTOLs. But that’s a big demand on software. In New York City right now we have this incredibly dumb version of surge pricing. That wasn’t necessarily because the software was complicated, but you can now have more creative, dynamic things, because work that would have taken the equivalent of 10 FTEs in 2024 or 2025 will no longer be out of reach. I’m excited for people to use their imagination when it comes to how to use this stuff better.

Russell Kaplan: For policymakers, it’s useful to implement your own policy ideas with these tools, but it’s also really important to build the mental model of what’s possible and what’s not. That mental model changes every month.

One of the greatest harms we did in generative AI was shipping Google’s auto-generated answers at the same time ChatGPT Pro existed. A lot of people were running a Google query on a cheap model served for free and thinking, “This AI answer is not very good.” Meanwhile, the $200 a month ChatGPT Pro subscription might give you research-grade quality. People were building very inaccurate mental models of what these systems are capable of. Everyone’s guilty of it, including people working on the tools. If you’re building the tools and not constantly testing the frontier, your mental model goes out of date really quickly.

Jordan Schneider: Not to give away your evals, but what are you hoping to see in the next few years?

Russell Kaplan: We’re heading to a world where building software is already no longer really about coding. Writing the code is not the bottleneck anymore — it’s everything around it. Humans still have to understand the code we’re putting into production, and the emerging bottleneck is actually review. We launched a product a month ago called Devin Review, a very human-centric interface for understanding increasingly AI-generated code. People are making changes that are thousands of lines long. The volume of code is growing enormously.

Where we are right now, Q1 2026, you’ve still got to understand the code you put into production. By 2028, that will no longer be true. We’ll have much broader specifications of systems — something more like writing a spec in English, and AI compiles the English spec down to software. But through 2026 and probably most of 2027, we’re still going to be looking at code, trying to understand it, and we’re not yet at the level of reliability where you can fully automate these things.

It reminds me a lot of self-driving. I was at Tesla on the Autopilot team, working on the vision neural network. When you get to 99.9% reliability, a lot of drivers start really trusting the system because it works 999 out of every 1,000 times. For that 1 in 1,000 where you have to take over, people are paying less attention. We’re in that uncanny valley phase of AI software engineering where it works so well that you might be too trusting of it, but you’ve still got to understand what you’re doing.

Jordan Schneider: You mentioned the self-driving form factor earlier — at Level 5, you take a nap. That’s the clear end state. How do you think about what the next interaction paradigm is going to be?

Russell Kaplan: Self-driving is interesting because Level 5 is you take a nap, but that’s the limit — you still decided where you want to go. In software, there might be a Level 6, where you don’t even decide where you want to go. Maybe you have some very high-level objective for what you want to accomplish, but Level 6 autonomy means the AI agent actually decides the details of what to build in the first place. The level of abstraction people will operate on is going to grow really high, really unexpectedly fast. If you specify a business objective or an outcome you want, increasingly we’re going to be able to optimize against that objective directly.

Jordan Schneider: That’s funny, because that’s actually where I feel the limits most — the first question, idea generation, what direction to take some vague thing I have. The execution, the research, finding random stuff on the internet, building the MVP — that it can take care of. But can a model come up with the policy idea that fits into all the constraints we’re living in, or the right episode topic?

Russell Kaplan: Right now, it’s all about asking the right questions. That’s the key skill of using models — what question are you asking? What task are you trying to do? That’s a distinctly human activity that’s going to remain human for a long time. Even the way we’ve structured our society as a democracy — ultimately, we as people are in charge of what we want, the structure of society we want, how we want to push forward. These things are tools ultimately, tools for the betterment of society, but they’re getting much more capable and much more autonomous all the time.

Jordan Schneider: When you’re working with clients and your forward-deployed engineers, are they often squinting around saying, “You thought you wanted us to do A, but B and C are also things these models are capable of”? How much do you see Cognition serving the role of AI-to-problem-finder?

Russell Kaplan: That’s an area we help with a lot right now. Usually customers understand their problems, but they don’t necessarily have the best mental model of exactly the full universe of problems addressable with AI today. What’s really interesting about Devin and agents in general is that once you’re plugged into the code, you can see all the problems. The problem discovery process that used to take lots of conversations is getting increasingly automated — whether it’s the security vulnerabilities we talked about or something else.

In a typical engagement for us, a government organization or large enterprise comes in and says they have 3 outcomes they want to achieve with AI. “We’re going to modernize this legacy system in weeks instead of years.” “We need to build this new capability as fast as possible and it’s going to grow our business by this much.” “We need to structurally improve our testing coverage, validation, and security posture, and here are the metrics.”

What we find inside each organization is a really wide distribution of how much people are leaning into next-generation tools. In every organization, even the most legacy, old-school organization in the world, some people are excited about the future and want to try new things. Consistently, 100% of the time. Those people are more empowered than ever to have extraordinary impact. There are also folks who’ve been doing it one way for 30 years and are super skeptical. The evidence is growing that it might be worth taking a peek.

Jobs, Talent, and Cognition for Government

Jordan Schneider: What are your calls to action? Who are you hiring for? What kind of conversations do you want to have coming out of this?

Russell Kaplan: We’re hiring a lot in Cognition for Government right now for folks who have been on the ground and seen the problems firsthand. Our forward-deployed engineering organization is maybe the fastest growing of all the roles.

People ask what the future of software engineering looks like. It might look like you always have to understand the problems of your customer, because writing code is getting easier and easier. If you look at our core research and engineering product team versus engineers who wear multiple hats — interacting with customers, shaping the product — the latter is growing much faster. In the limit, we all might be working directly with other people in some capacity.

We’re also growing what we call engagement management, because these projects are very rarely just about the software — it’s about the end-to-end organizational problems you’ve got to solve. We have classified deployments, we work in secret networks, so folks with the right clearances and backgrounds are always interesting. We’re really just scratching the surface of how much this is going to change.

Jordan Schneider: You also have some family lore to share.

Russell Kaplan: You were asking me why I was so interested in the 1890 census and how we popularized punch cards. My grandmother was one of the first female programmers in the country, back when it was a very arcane activity of messing with punch cards. Later, when assembly came out, she was super excited about that. She gave me a lot of crap growing up that we had it so easy in the 2020s — writing code with a computer you could edit, where you didn’t have to worry about dropping things.

Her master’s thesis was on the knapsack problem, and that line of research ended up being really useful in the Apollo missions. Part of my hope for Cognition for Government is that we can go full circle and help bring the government back to where it once was — the true leader in technology.

Jordan Schneider: What does she think about Devin?

Russell Kaplan: Unfortunately, she passed away a few years ago, before Devin came out. But I think she would look at it and be proud. I think she would be happy.

Jordan Schneider: It’s interesting how the genders flipped in software engineering — in the first few decades it was a very female-coded field, and then that changed. I wonder if all the AI tools are going to help it flip back. If the type of skills that get prioritized rearranges what the labor market looks like, you might not see the gender split that’s dominated for the past few decades.

Russell Kaplan: At a minimum, it’s going to be so accessible so early in your life to learn and use these tools that you might start building applications with AI before you even know what the concept of a gender norm is. Software will be like water, just flowing everywhere. It’s going to be a really fun time to be a builder.

Jordan Schneider: First, she’s got to learn how to speak, but maybe we’ll give my daughter 6 more months. Awesome, Russell. Thank you for that.