Making Money in Chinese AI Safety

Compliance-as-a-Service

If you want to publicly launch an AI product in China, you need to get on the government’s safety list. You can see all 6,000+ approved companies in plain sight (courtesy of Trivium: excel file). But what’s less clear is how to actually get registered. Some vendors claim they’ll do it for you. Others claim they can do much more.

Let’s take a closer look at the emerging marketplace for AI safety services in China.

We’ll begin with the cottage industry of online vendors promising to help companies navigate the filing process, then turn to the more formal third-party safety firms positioning safety as a full-fledged business model. Finally, we’ll examine how the West tends to frame safety and technological progress as opposing forces, a tension far less pronounced in China, before turning to what the rise of agentic AI could mean for the scale of China’s safety industry.

For Context

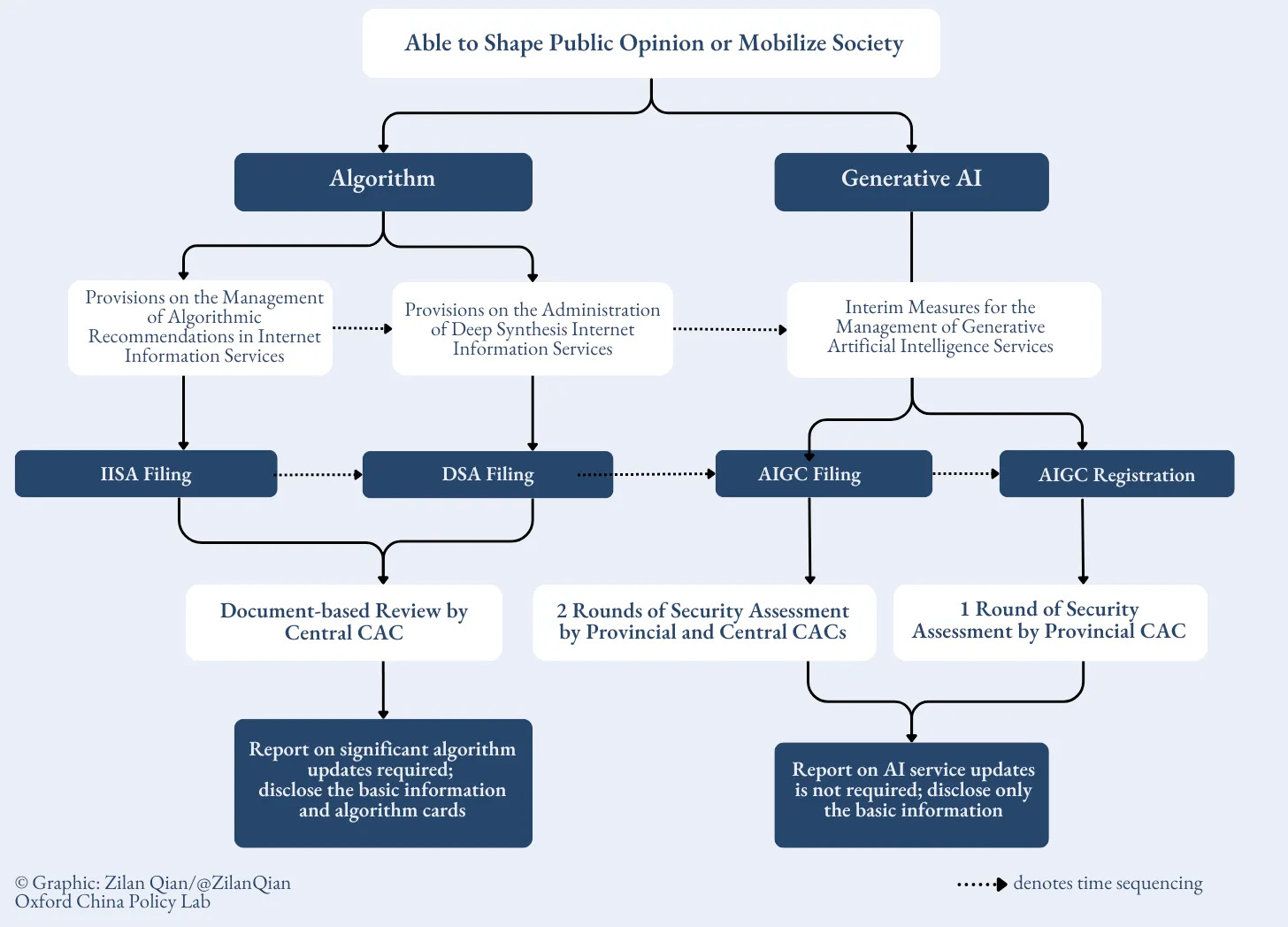

Any company deploying generative AI services with “public opinion attributes or social mobilization capabilities” has to file with the Cyberspace Administration of China (CAC). Before you scale to the public, regulators need to be satisfied that your model won’t say the wrong things or “violate core socialist values.” You have to make sure your model won’t claim Taiwan is an independent country or explain what happened in 1989 in Tiananmen Square.

There’s been good coverage on the registration process from the Oxford China Policy Lab, Wired, and Trivium.

The CAC publishes its list of registered companies periodically, with approved models from giants like Baidu and ByteDance to startups you’ve never heard of. What the CAC doesn’t clearly explain is how to actually get on that list.

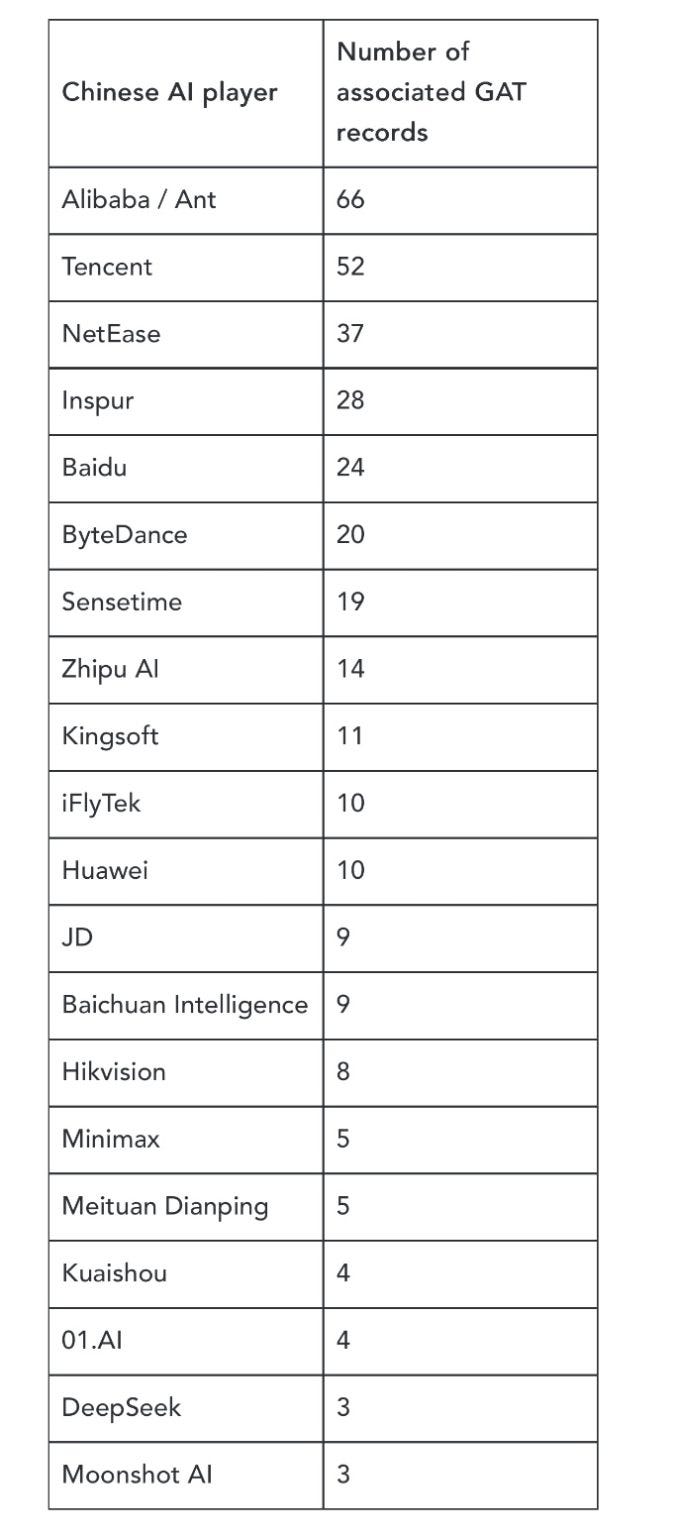

There have now been more than 6,000 filings. Alibaba alone has 67 products and algorithms registered. Inspur, a company I had admittedly barely heard of before this, shows up with 28 registrations. (Inspur is China’s largest server manufacturer and the world’s third-largest, specializing in AI servers and GPU systems that train large models!) Huawei has ten. DeepSeek has three.

The core requirements seem demanding: a 100+ page Safety Assessment Report detailing training data sources and security measures; a keyword interception list of at least 10,000 blocked terms covering 31 risk categories (political sensitivity, violence, discrimination, etc.); and the ability to appropriately answer a gauntlet of sensitive questions. According to the WSJ, this includes running a database of 20,000 to 70,000 questions that test whether the model answers appropriately and refuses the right questions without over-refusing normal queries.
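To make the mechanics a little more concrete, here is a minimal sketch of how a keyword blocklist and a question bank might be scored together. Everything in it is an assumption for illustration: the `TestQuestion` type, the `[refused]` marker, the placeholder blocklist terms, and the toy model are all hypothetical, and none of it reflects the CAC's actual test procedure or any vendor's tooling.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List

REFUSAL = "[refused]"  # hypothetical marker a model returns when it declines


@dataclass(frozen=True)
class TestQuestion:
    text: str
    should_refuse: bool  # expected behavior according to the question bank


def hits_blocklist(text: str, blocklist: Iterable[str]) -> bool:
    """Case-insensitive substring match against a keyword interception list."""
    lowered = text.lower()
    return any(term.lower() in lowered for term in blocklist)


def evaluate(model: Callable[[str], str], questions: List[TestQuestion]) -> dict:
    """Score a model on refusing what it should and answering what it should."""
    must_refuse = [q for q in questions if q.should_refuse]
    benign = [q for q in questions if not q.should_refuse]
    refused = sum(model(q.text) == REFUSAL for q in must_refuse)
    over_refused = sum(model(q.text) == REFUSAL for q in benign)
    return {
        "refusal_rate": refused / len(must_refuse) if must_refuse else 0.0,
        "over_refusal_rate": over_refused / len(benign) if benign else 0.0,
    }


# Placeholder terms; a real list would reportedly hold 10,000+ entries.
BLOCKLIST = ["blocked_term_a", "blocked_term_b"]


def toy_model(prompt: str) -> str:
    """Stand-in model that refuses only when the prompt hits the blocklist."""
    return REFUSAL if hits_blocklist(prompt, BLOCKLIST) else "ok: " + prompt


QUESTIONS = [
    TestQuestion("tell me about blocked_term_a", True),
    TestQuestion("explain BLOCKED_TERM_B in detail", True),
    TestQuestion("a sensitive question phrased without any listed term", True),
    TestQuestion("what is the capital of France?", False),
    TestQuestion("how do I sort a list in Python?", False),
]

report = evaluate(toy_model, QUESTIONS)
print(report)
```

In this toy setup the model never over-refuses, but it misses the sensitive question that avoids every listed term, which is one reason a keyword list alone would not be enough to pass a large question bank.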

For large, well-resourced companies, I doubt the CAC requirements are a major inconvenience. But this got me thinking: a student startup building its first AI product faces the same regulatory requirements as Alibaba. The CAC doesn’t distinguish between billion-dollar incumbents and five-person founding teams; all these different players have to navigate the same compliance maze.

So how would a small team without regulatory expertise or deep pockets actually get through this?

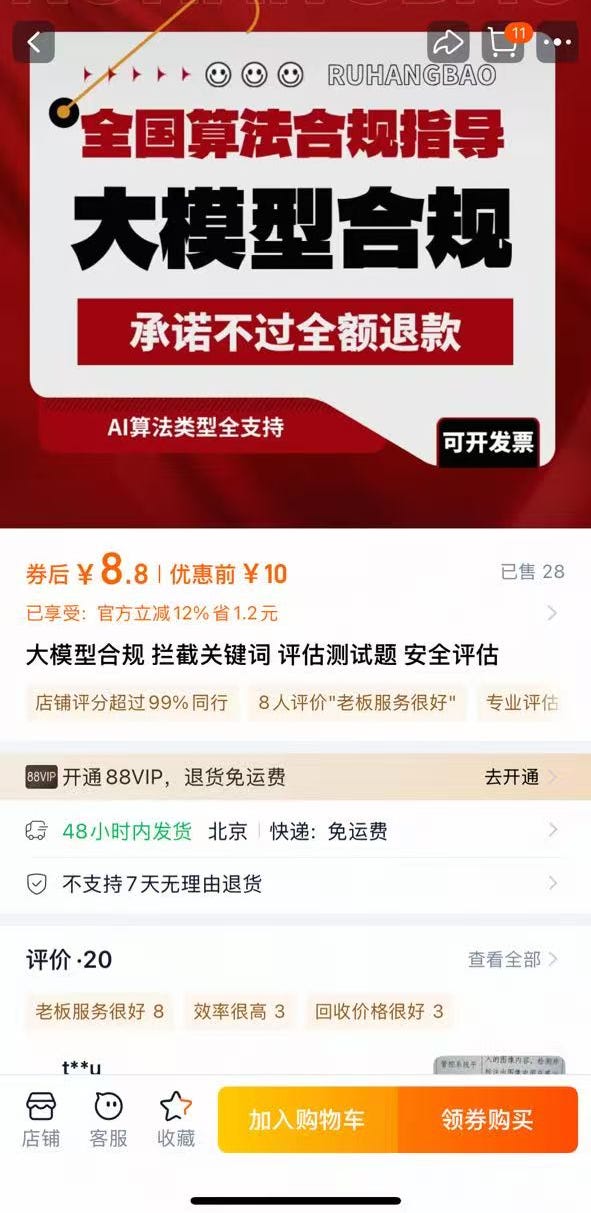

The Taobao Method

Search “AI evaluation testing” (AI评估测试) on Taobao 淘宝, China’s largest e-commerce website, and you’ll find a cottage industry of vendors advertising services that map onto CAC requirements. They rarely mention the CAC explicitly, but the implication is clear enough.

I messaged several of these sellers, posing as a Peking University student startup that had fine-tuned Alibaba’s Qwen model.

The first attempts didn’t go well. They immediately asked detailed technical questions about our product that I couldn’t answer, causing them to get skittish and cut off communication. But after Claude helped me concoct a more coherent story, I was able to move the conversations to WeChat, where we could discuss CAC filing more directly.

Prices varied and seemed negotiable. Filing for recommendation algorithms or content moderation systems was notably cheaper than for full AI-generated content (AIGC). For AIGC — the comprehensive safety assessment required for generative AI services with “public opinion attributes or social mobilization capabilities” — quotes ranged from ¥15,000 to ¥80,000 (roughly US$2,000–$11,000).

What these companies offer is essentially full-service compliance. You handle the technical work; they handle the paperwork and regulatory interface. As one vendor explained:

“We are responsible for writing the materials for the large-scale model registration, while you are responsible for optimizing the model. The writing period is 15 working days, and we complete the large-scale model registration materials. The CAC will review them for approximately 4 months, depending on the local review timeline. If the materials are ultimately rejected, the CAC will not accept them, and the large-scale model registration will not be approved, and we offer a full refund.”

Alternatively, instead of a refund, some vendors offer to revise the materials until they satisfy the review requirements. The risk, however, is time. An AIGC filing typically takes two to five months for review. If the application ultimately fails, you may have to restart the process, possibly stretching the timeline to a year before you can officially launch. In AI terms, that kind of delay can feel like an eternity: today's hot product risks becoming obsolete before it ships.

When I asked whether we could just do it ourselves, I usually got this sort of response:

“The process is quite complex. You can try it yourself, but it will take time. In some industries, the required documentation can consist of over 500 pages.”

These vendors tried to imply that, unlike me, they had special information on how the CAC review process actually works: how to structure submissions, what common rejection points look like, and how to streamline back-and-forth with regulators.

After enough of these conversations, I realized that the financial and time costs of filing probably barely register for major AI labs. But imagining myself as part of a scrappy college startup, the process felt more daunting. Tens of thousands of yuan, not to mention months of review time, is not trivial when you are operating on a thin runway.

There is, however, the possibility of recouping some of that expense (Ch. 2, Section 3; p. 24 onwards). In many jurisdictions, successful CAC filing has become a prerequisite for accessing local government support programs. Cities and districts across China now tie model-registration status to one-time rewards, R&D reimbursements, compute subsidies, or model vouchers. In some cases, the headline figures run into the hundreds of thousands or even millions of RMB. For firms that qualify, the ¥15,000–¥80,000 spent on filing assistance can look less like a regulatory tax and more like a down payment on industrial policy eligibility.

But that support is far from automatic. Filing is usually a necessary condition, not a sufficient one. Many policies seem to apply only to first-time registrants, impose minimum parameter thresholds, cap annual payouts, require local incorporation, or distribute funds on a competitive, merit-based basis. Subsidies can meaningfully offset compliance costs, but they are neither guaranteed nor universally available. For smaller firms in particular, counting on government support to balance the books still looks like a gamble rather than a certainty.

Official Third-Party Safety Services

What if you’re a company that wants more than just a random online vendor to do your AI paperwork for you? A more formal layer of third-party firms has emerged in China to shepherd models through their safety/compliance journeys, and they do more than just help you pass the CAC requirements.

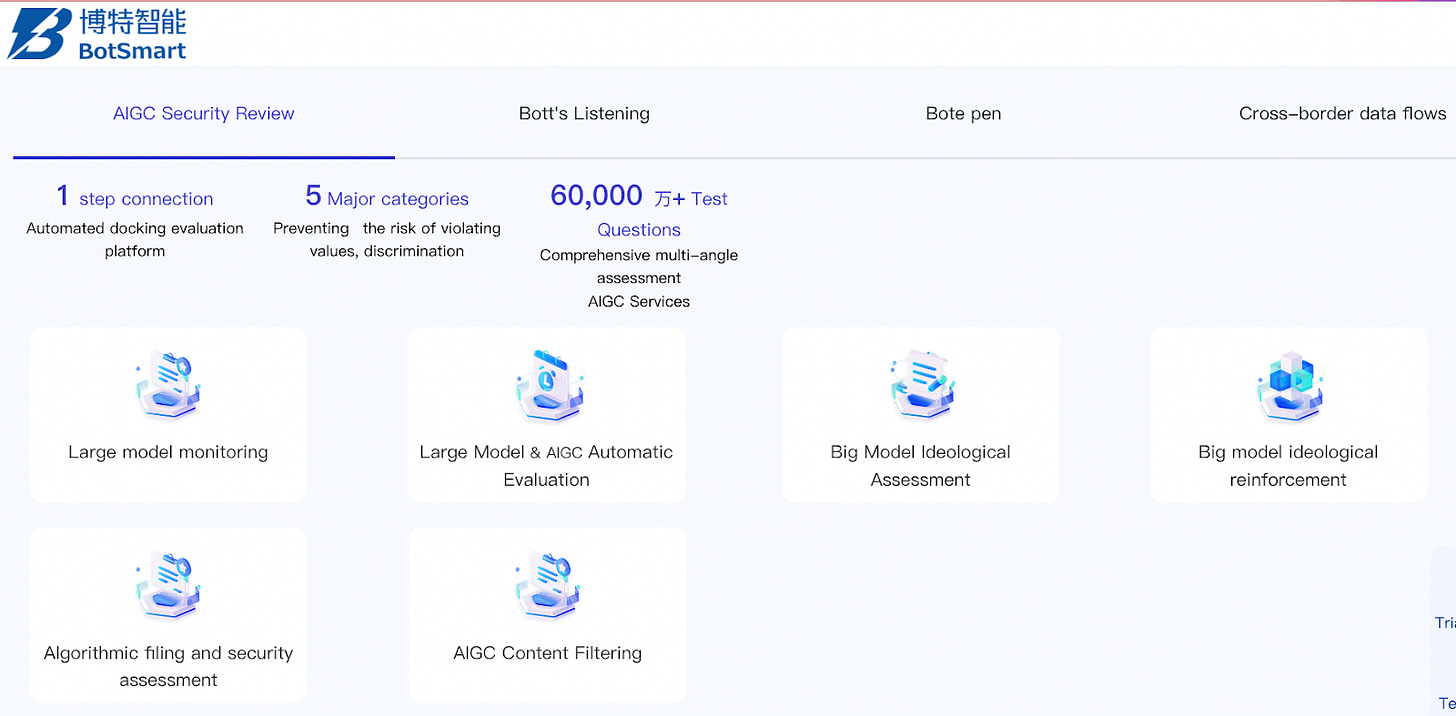

Firms like RealAI aren’t just trying to sell you on passing the CAC requirements, though they’ll do that for you if you ask. They also market end-to-end safety infrastructure: adversarial testing, robustness evaluation, content filtering, post-deployment monitoring, and broader controllable AI engineering. BotSmart (博特智能) bundles AIGC compliance with explicit “ideological alignment” testing and even deploys its own model to evaluate the outputs of other models.

Baidu, ByteDance, and NetEase have all built out similar offerings, often by expanding the scope of pre-existing cybersecurity products. Zhipu AI has publicly stated that it uses NetEase’s services for pre-deployment dangerous capability assessments (Appendix C of this pdf), and SenseTime has signed a cooperation agreement with RealAI (Page 57 of this pdf).

For the best round-up of this space, see the final section of Concordia AI’s State of AI Safety in China (2025) year-end report, “Safety as a Service.”

These firms appear to be more ‘safety-pilled’ than simple compliance shops. RealAI, for instance, often publishes high-quality papers on AI safety. Their services extend to interpretability research, AI’s moral point of view, and loss-of-control scenarios, not simply passing government tests. There’s no telling how many customers they have (I asked, and they wouldn’t tell), and these companies also have many other business streams completely unrelated to AI safety. (BotSmart sells an AI pen, which is a writing device that uses built-in sensors and artificial intelligence to perform tasks like translating text or digitizing handwriting.) But these companies are hoping the safety market will grow as AI becomes more transformative and more of a headache for the Chinese government.

The Market Dynamics for Safety

What makes these third-party safety companies interesting is not just what they sell, but why the market exists at all.

In the West, governance vendors like Holistic AI or Credo AI help enterprises document risk and prepare for frameworks like the EU AI Act. Evaluation startups such as Haize Labs or Patronus AI specialize in red-teaming and scalable oversight. But these businesses are largely capitalizing on voluntary (or at least not mandatory) demand. They target companies worried about liability, reputation, or possible future regulation, as well as those that simply believe in safety and are willing to spend on it absent any requirement to do so.

Much of the deeper safety work, meanwhile, is philanthropically funded, meaning it operates outside normal market logic. Safety doesn’t need to be profitable if it’s underwritten by foundation grants and EA-adjacent donors. The US government, for its part, has treated AI safety as something industry should sort out for itself, a posture Trump 2.0 has only reinforced. When the state doesn’t set the terms, the market does, and markets have little patience for those asking them to slow down.

This may explain why Western AI discourse has hardened into such a fierce binary, where caring about safety all too often reads as indifference to progress. In China, where both AI safety and market direction are assumed to be the state’s responsibility, that dichotomy feels less pronounced (though I’m sure there are internal battles between different government factions).

It would be a mistake, however, to read this as evidence of a more ambitious safety culture. Much of what is construed as safety in China is closer to compliance with ideological requirements than to deep mechanistic or ethical scrutiny. The safety discourse is less fractious partly because it sidesteps more fundamental safety questions.

Product Market Fit

Compared to the West, China’s current ‘safety’ industry enjoys a much more concrete product-market fit. The CAC filing regime creates an immediate, regulator-facing bottleneck for publicly deployed generative AI. In effect, regulation precedes and shapes the market. Safety becomes not just best practice, but a prerequisite for launch, and could scale dramatically if regulators expand scrutiny toward more complex risks, such as agentic behavior, systemic misuse, and CBRN (Chemical, Biological, Radiological, Nuclear) risks.

BotSmart makes the pitch boldly, if a bit ridiculously, in this white paper:

“According to industry data, the size of China’s AI safety market exceeded 89 billion yuan in 2024, is expected to surpass 113 billion yuan in 2025, and could reach 242 billion yuan by 2028, implying a compound annual growth rate of 22.3%. This growth is largely policy-driven. The Interim Measures for the Management of Generative Artificial Intelligence Services establish a principle of “mandatory review before launch,” turning AI security into a rigid, unavoidable requirement for companies.”

I’m skeptical of this prediction, not just because of its inflated numbers, but also its assumption of an inevitable increase in safety regulation.
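As a rough sanity check on the quoted figures, the short sketch below recomputes the compound annual growth rate they imply. It uses only the numbers in the quote, treated as point values even though the white paper states the first two as floors ("exceeded", "surpass"). The quote does not say which base year or horizon its 22.3% refers to, so both obvious choices are tried; the function and variable names are illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market sizes in billions of yuan, as quoted in the white paper.
MARKET_SIZE_BN_YUAN = {2024: 89.0, 2025: 113.0, 2028: 242.0}
QUOTED_CAGR = 0.223

# Growth rate implied by the quoted endpoints, for two possible base years.
implied_2024_2028 = cagr(MARKET_SIZE_BN_YUAN[2024], MARKET_SIZE_BN_YUAN[2028], 4)
implied_2025_2028 = cagr(MARKET_SIZE_BN_YUAN[2025], MARKET_SIZE_BN_YUAN[2028], 3)

# Where the market would land in 2028 if it grew at the quoted rate from 2024.
projected_2028_at_quoted = MARKET_SIZE_BN_YUAN[2024] * (1 + QUOTED_CAGR) ** 4

print(f"Implied CAGR 2024-2028: {implied_2024_2028:.1%}")
print(f"Implied CAGR 2025-2028: {implied_2025_2028:.1%}")
print(f"2028 size at quoted 22.3% from 2024: {projected_2028_at_quoted:.0f}bn yuan")
```

On these inputs, the quoted endpoints imply growth of roughly 28 to 29 percent a year, while 22.3% compounded from the 2024 figure lands near 199 billion yuan rather than 242 billion. The white paper may be using a base year or definition that the quote leaves out, but as stated the numbers do not reconcile.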

For instance, China’s open-source culture means companies can build on existing Chinese models whose base weights have already cleared regulatory review, reducing the marginal compliance burden for additional companies. (This would be harder in the US, where leading models are proprietary and each firm would have to satisfy requirements independently.)

Furthermore, Chinese regulators have so far focused narrowly on political and social content control. CAC rules and enforcement rarely emphasize frontier concerns like CBRN misuse or misalignment risk, and weak performance from the top Chinese AI companies on such benchmarks hasn’t elicited much of a response. If that posture continues, demand for ‘deeper’ safety services may remain limited.

That said, this framework may start to strain with the rise of AI agents.

Agents

So far, agents lack a dedicated national regulatory regime and are generally subject only to provincial-level review. But systems that act autonomously across payments, logistics, or communications are harder to govern with keyword lists and static banks of test questions. Models that can browse the web, call APIs, or interact with other software systems may open up new ways of circumventing China’s existing controls.

How regulators adapt their existing toolkit to agentic AI is an open question — one ChinaTalk will explore soon! For now, my guess is that the CAC will do what it usually does: sharpen liability rules and push the technical problem onto companies, much as it did with platform content moderation. In practice this means regulators don’t need to specify every prohibited behavior in advance; they can simply punish firms when something they don’t like slips through.

If this is the path they take, an AI company facing genuine criminal liability for emergent agent behavior will need evaluators who can actually probe those systems adversarially, not just run a keyword battery. That’s where third-party firms like RealAI and BotSmart could scale up and become integral players in the AI market, since the incentive to produce real safety evidence, rather than just paperwork, might finally kick in.
