Claude Mythos: China Reacts

"collecting protection money makes money too"

On April 7th, Anthropic announced Claude Mythos Preview, a new AI model that it said possessed particularly strong cybersecurity capabilities. Some of these capabilities, according to Anthropic’s blog post, were not the result of deliberate training, but rather emerged as a consequence of general improvements.

Mythos independently identified and patched a 16-year-old vulnerability in the open-source multimedia library FFmpeg. It also escaped a restricted sandbox and leaked information to the open internet. Anthropic says “it’s about to become very difficult for the security community” and is not releasing Mythos Preview to the general public. Instead, it is setting up Project Glasswing to share a limited version of the model with AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks, for “defensive security work”.

Jordan covered the national security implications earlier this week on the podcast with two former US officials, but China is also an essential part of this conversation. While Claude models remain officially unavailable in China, Chinese researchers and the wider AI community there have followed Anthropic’s work closely. Below are some reactions to the Mythos news from Chinese analysts and technologists, featuring:

Wrestling with Anthropic’s theory of ethics;

The Mythos case against Chinese labs’ business models;

Why you don’t need to worry about your WeChat wallet being stolen — yet;

And how Project Glasswing puts China on the back foot.

Translations were drafted with the assistance of Claude Opus 4.6, and then edited for accuracy and fluency. Bold markings were added by the editor.

Does Mythos Incentivize Safety?

Dario Amodei is likely the American AI CEO with the sharpest publicly-expressed attitude towards China. From supporting export controls to repeatedly ringing alarm bells around AI-enabled dictatorships in his essays, it’s no wonder that in China, Claude is sometimes known as “anti-China AI” 反华AI.

But curiously, this hasn’t exactly stigmatized Claude among actual AI users in China. Programmers there are fans of Claude Code, and while no official data exists for the size of Anthropic’s general Chinese customer base, social media content about Claude is everywhere. The US-China rivalry is taken for granted as general context, but individual users don’t feel pressured to switch away from “anti-China AI” yet.

This context is important for understanding why Chinese tech media’s coverage of the Mythos release is not particularly cynical. The mood is closer to slightly-grudging esteem, with few observers loudly doubting what the company has claimed about Mythos’ capabilities.

In fact, some Chinese outlets are quite sympathetic to Anthropic, especially in the aftermath of its face-off against the Department of War. GeekPark 极客公园, an entrepreneurship-focused tech outlet, published an op-ed by a pseudonymous author who defended Anthropic’s decision not to publicly release Mythos. Beyond already-well-articulated safety concerns, the piece situates Mythos in the context of other recent corporate strategy adjustments from Anthropic and analyzes how the lab might be balancing multiple priorities.

On the very same day Mythos was released, Claude’s service experienced a large-scale outage. Today, on April 8, connection issues still haven’t fully recovered, with hundreds of users reporting login failures and chat errors. … A bit earlier, in late March, Anthropic accidentally leaked nearly 2,000 source code files and over 500,000 lines of code during the release of Claude Code version 2.1.88. Security researcher Aaron Turner’s assessment was rather chilling: the leak compressed the timeline for adversaries to replicate America’s strategic advantage, making it a geopolitical accelerant in the agentic AI arms race.

…

Put these events side by side, and Anthropic is fighting on three fronts at once: infrastructure stability, the boundaries of its business model, and (the hottest issue of all right now) just how dangerous the thing it built actually is.

The way Mythos was released is, in a sense, a high-stakes bet on Anthropic’s “responsible AI” doctrine. They chose the most conservative possible method to unveil the most dangerous possible model — telling the world “here’s what it can do,” while refusing to “let it do it.” The logic behind this move: only by publicly disclosing the threat can you drive defensive action; but opening up the capability itself could trigger a chain-reaction catastrophe.

Whether that judgment is correct, nobody knows yet.

Chinese Model Doomerism

Founder Park, a subsidiary of GeekPark aimed at a founder audience, wrote about the implications of Mythos for AI as a global business. The piece doesn’t mention China outright, but is clearly pessimistic about the prospects of open labs with less-capable models in a post-Mythos world. It lays out an interesting case against the possibility of a democratized future for AI.

[ChatGPT] has locked us into the assumption that flagship models will be supplied and sold abundantly, at a price that tens of millions of people can afford. Building on top of this assumption, we imagined MaaS [Model-as-a-Service],¹ the token economy, and how agentic coding would help or replace programmers. But once the spiral kicks in, this assumption no longer holds.

Anthropic’s current annualized revenue is $30 billion. Suppose Mythos really does have the ability to sweep through and uncover system vulnerabilities at scale; why would Amodei make it public? Selling MaaS makes money, and charging membership fees makes money; however, collecting protection money makes money too. Think about how Amodei could easily unveil Mythos with a five-point statement:

AI now has the capability to discover and exploit system vulnerabilities at scale;

Evil nations and organizations are about to acquire this capability, and they’re only six months to a year behind;

But our Mythos is ready;

As long as you’re an upright company that cares about human civilization and shares Anthropic’s values, Mythos will come protect you;

Next, please wire payment to Anthropic. After reviewing your values, we’ll decide the priority order in which you receive Mythos protection based on your payment amount and our internal values-alignment score.

…

This is the first model that wasn’t immediately made available via API, and it therefore represents an entirely new commercial reality.

…

To elaborate a bit: some might say that AI is currently flourishing, and other companies (especially Chinese ones) will soon catch up.

This, too, is an illusory assumption born of the past three years — really, the past one year. Once flagship AI stops being offered publicly, [labs that trail in capabilities] won’t just be unable to distill flagship AI; even finding out how flagship AI works or how it solves problems will become increasingly difficult. Internal opacity at AI companies will also inevitably keep rising in order to prevent leaks.

Will this day come? If so, we’d better pray that current AI technology isn’t yet enough for the spiral to hold, that technological progress isn’t fast enough, and that AI companies still have to publicly offer flagship AI services to build momentum and capture more profit.

Mythos is Anthropic’s forceful attempt to break into “the next act of the LLM.”

Anthropic is committing $100M in model usage credits to Project Glasswing. After these run out, it plans to charge $25/$125 per million input/output tokens for Mythos access by approved participants. Cyber Zen Heart 赛博禅心, a well-known tech influencer account (previously featured in our WeChat AI Field Guide), put together a summary of the various signals Anthropic might be sending with this approach. It’s a more moderate interpretation of Anthropic’s thinking, with close analysis of pricing and revenue strategy in anticipation of its potential IPO this year.

Product line expansion

Claude’s product line has gone from three tiers to four. Above Haiku, Sonnet, and Opus, a new Mythos/Capybara tier has been added. This change itself matters more than any single benchmark result. It means Anthropic’s model capabilities have already opened up a gap wide enough to require a new price band to absorb it. Based on documents leaked via Fortune, Capybara is internally defined explicitly as a new tier “larger than Opus”, representing a structural expansion of the product line.

Leading with the safety narrative

Mythos is a general-purpose model that’s strong in coding, reasoning, and search, and could easily have taken the standard benchmark-release route. But Anthropic chose the “too strong to make public” narrative, giving access to only 12 major enterprises. This reflects both genuine consideration of safety risks and a statement about pricing power and ecosystem control. Want to use the strongest model? Join Glasswing and buy tokens at $25/$125.

Anthropic is choosing not to let you use its strongest model, but it’s telling you exactly how strong that model is.

Pricing signal

The $25/$125 pricing is about 67% more expensive than Opus 4.6’s $15/$75. If Mythos-tier models do eventually go public, this price band will become the new anchor. For anyone who believes token prices will only keep getting cheaper, this pricing is a counterexample: when capabilities are strong enough, prices can move upward.

Timeline

On April 4, the subscription channel for OpenClaw was shut down. On April 7, Mythos was released. On one hand, Anthropic is tightening control over the open ecosystem (you can no longer use a monthly subscription to run third-party agent frameworks without limit); on the other, it is releasing the strongest model to enterprise partners. Just three days apart: the cadence is tightly choreographed.
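As a quick sanity check on the pricing figures quoted above, the arithmetic can be sketched in a few lines of Python. The per-million-token prices and the $100M credit pool come from the text; the 20% output-token share is a purely hypothetical workload mix chosen for illustration, not a figure from Anthropic or from the quoted summary.

```python
# Per-million-token prices in USD, as quoted in the text.
OPUS_INPUT, OPUS_OUTPUT = 15.0, 75.0
MYTHOS_INPUT, MYTHOS_OUTPUT = 25.0, 125.0

# Usage credits committed to Project Glasswing, as quoted in the text.
GLASSWING_CREDITS_USD = 100_000_000

# ASSUMPTION: hypothetical share of output tokens in a workload.
OUTPUT_SHARE = 0.2


def percent_increase(old: float, new: float) -> float:
    """Return how much more expensive `new` is than `old`, in percent."""
    return (new - old) / old * 100.0


def blended_price(input_price: float, output_price: float, output_share: float) -> float:
    """Average price per million tokens for a given input/output mix."""
    return input_price * (1.0 - output_share) + output_price * output_share


def tokens_covered(budget_usd: float, price_per_million: float) -> float:
    """Number of tokens a budget buys at a given per-million-token price."""
    return budget_usd / price_per_million * 1_000_000


input_increase = percent_increase(OPUS_INPUT, MYTHOS_INPUT)
output_increase = percent_increase(OPUS_OUTPUT, MYTHOS_OUTPUT)
mythos_blended = blended_price(MYTHOS_INPUT, MYTHOS_OUTPUT, OUTPUT_SHARE)
credit_tokens = tokens_covered(GLASSWING_CREDITS_USD, mythos_blended)

print(f"Input price increase:  {input_increase:.0f}%")
print(f"Output price increase: {output_increase:.0f}%")
print(f"Blended Mythos price (assumed mix): ${mythos_blended:.2f} per million tokens")
print(f"Tokens covered by the credit pool:  {credit_tokens:.3e}")
```

Both the input and output prices rise by the same proportion, which is why a single "about 67%" figure describes the whole price band. How far the credit pool stretches depends heavily on the assumed mix, so the last two printed numbers should be read as illustrative only.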

Perspectives from Technologists

Robin Li 李彦宏, founder and CEO of Baidu, infamously remarked in a 2018 speech that “Chinese people are … less sensitive about the privacy issue.” The comment prompted major backlash, but it contained a grain of truth. Caught between corporate and governmental surveillance, neither of which is easy to opt out of, many Chinese internet users have resigned themselves to surfing the web with little privacy protection.

Of course, Chinese people are far from alone in suffering from the consequences of corporate data leaks and feeling powerless in the face of pervasive data collection. But some of the Chinese internet’s regulatory and commercial attributes arguably make it fertile ground for vast cyber threats. To sign up for accounts with many Chinese apps — including popular AI chatbots and tools — you need phone numbers tied to Chinese national IDs. Social media platforms have been required to institute real-name authentication. Super-apps integrate users’ financial, governmental, and interpersonal lives. Sensitive data about millions of ordinary people is increasingly concentrated in the hands of a few companies, and regulations still struggle to catch up.

Cybersecurity experts were already unnerved by the havoc AI agents might wreak on the security landscape, and then came Mythos. A consumer-focused tech media outlet, 差评X.PIN (chàpíng, literally “negative review”), interviewed an anonymous cybersecurity researcher about Mythos’ implications for regular people’s online safety. Their response:

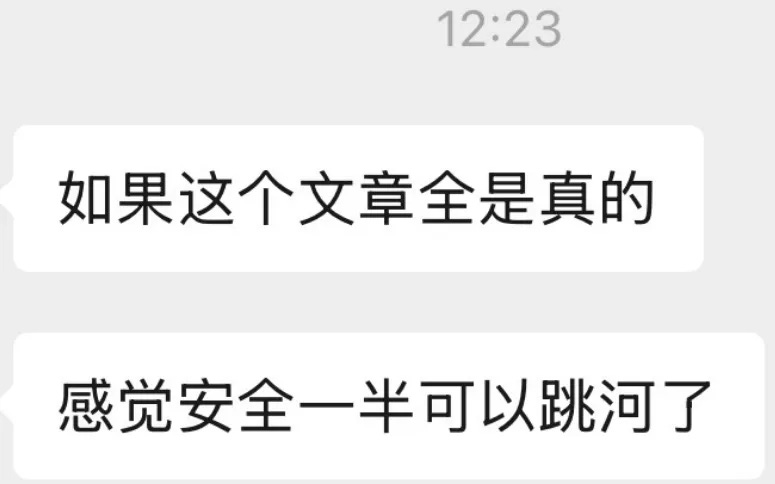

If everything in [Anthropic’s red-teaming technical blog] is true, I feel like half of the people working in internet security right now should just jump into a river.

“Wen’an” (the researcher’s pseudonym) clarified to X.PIN that they were being hyperbolic, but also offered some sobering analyses of how models at the Mythos level will reshape cybersecurity as we know it.

… these vulnerabilities haven’t yet reached the level of clearing out your Alipay balance or splashing your WeChat chat logs all over the internet.

But the crux of the issue is this: the reason Anthropic released these cases publicly wasn’t to show off “how nasty the exploits are,” but to demonstrate that AI — without any plug-in tools, relying purely on its own knowledge base and cross-domain reasoning — can dig up brand-new vulnerabilities on its own.

So in Wen’an’s view, Mythos at this stage isn’t “a stronger hacker tool,” but rather a lowering of the barriers to entry for cyberattacks.

In the past, whether you were a legitimate security professional or someone working in the gray/black market, you at least needed someone who knew what they were doing to run the show. Pulling off a real, serious cyberattack meant holing up in a dark room for months on end.

But going forward, it might be enough for that pudgy village loiterer to shout a couple of voice messages at an AI while picking at his feet.²

This kind of “if you’ve got hands, you can do it” low-entry-barrier setup is inevitably going to attract hordes of thrill-seekers and outlaws looking to have a go.

That’s why Wen’an thinks it actually makes sense for Anthropic to roll out the Glasswing program first.

After all, traditional security tools are like rigid gatekeepers: they only check whether you’re carrying contraband, and they’re useless against insider jobs. AI, on the other hand, can trace the threads, understand business logic, and spot the kind of move where John Doe uses his own key to open Dave’s door.

Letting the big enterprises self-audit and trial the tech in advance lets them get a head start on building network defenses, running vulnerability sweeps, and preventing problems before they happen.

Not everyone believes that Anthropic is being entirely altruistic, of course. In their commentary on how the cybersecurity challenges introduced by Mythos relate to US-China competition, China-based analysts for the consulting firm IDC did not mince words. They see Glasswing widening the capabilities gap between the American and Chinese AI industries, as well as threatening the entire technical foundation of China’s digital economy:

One core challenge is asymmetry in technology access, which creates a clear technological gap with overseas peers. Participants in Project Glasswing can prioritize leveraging Mythos’s powerful capabilities to conduct vulnerability discovery, threat detection, and defensive system optimization, while simultaneously sharing related security research findings and open-source resources — enabling rapid iteration of defensive capabilities. Chinese vendors, by contrast, are completely excluded from this collaborative framework. Unable to directly access Mythos’s model capabilities or related security resources, they can only rely on their own efforts to develop the relevant technology. This creates an inherent gap in the iteration speed of AI security technology between China and its overseas peers. Especially in high-end domains such as zero-day vulnerability discovery and AI adversarial techniques, this generational gap may widen further, and closing it in the short term will be difficult.

The second core challenge is a dramatic escalation of cybersecurity threats and a sharp increase in pressure on the defense of critical infrastructure. In China, critical infrastructure sectors such as finance, energy, government services, and healthcare make extensive use of various open-source software and general-purpose operating systems, and Mythos has already uncovered a large number of high-severity vulnerabilities in these systems. Overseas vendors participating in Project Glasswing can use the model to quickly obtain vulnerability information, generate fixes, and complete system hardening in a timely manner. Chinese vendors, however, cannot access the corresponding vulnerability information or remediation guidance, and must rely on self-directed investigation and self-directed patching. This not only sharply raises defensive costs but also prolongs vulnerability exposure windows, significantly increasing the risk that critical infrastructure will be attacked. At the same time, as Mythos’s capabilities proliferate, the state-level APT attacks and black-market attacks China faces will become more covert and more efficient, with a wider variety of attack methods, further compounding the difficulty of cybersecurity defense and posing a serious challenge to the nation’s cybersecurity baseline.

In addition, China’s cybersecurity industry faces derivative problems such as a shortage of AI security talent and significant pressure on investment in indigenous R&D. While Project Glasswing fosters a healthy ecosystem of “technology sharing + talent collaboration,” China, by contrast, suffers from an insufficient supply of high-end talent in AI security. Its indigenous R&D lacks mature technical reference points and ecosystem support, which further constrains the improvement of defensive capabilities, leaving China in a more passive position when responding to the AI-driven attacks that Mythos makes possible.

¹ “MaaS” featured prominently in the professed business models of Z.ai and MiniMax, two Chinese AI labs that made initial public offerings on the Hong Kong Stock Exchange in January this year. For the past two months, their respective stock prices have been riding post-OpenClaw highs.

² “Dude picking at his feet” is a piece of disparaging Chinese slang, usually lobbed at an online demographic similar to Western incels. It’s a little hard to translate.
